Storage Protocol Basics: iSCSI, NFS, Fibre Channel, and FCoE

Wanna get your storage learn on? VMware has a well-laid-out explanation of the pros and cons of different ways to connect to shared storage. The guide covers the four storage protocols, but let’s get you a quick background primer first.

iSCSI, NFS, FC, and FCoE Basics

iSCSI means you map your storage over TCP/IP. You typically put in dedicated Ethernet network cards and a separate network switch. Each server and each storage device has its own IP address(es), and you connect by specifying an IP address where your drive lives. In Windows, each drive shows up in Computer Management (under Disk Management) as a hard drive, and you format it. This is called block storage.

NFS means you access a file share, a lot like \\MyFileServerName\MyShareName in Windows (NFS itself writes the path as MyFileServerName:/MyShareName), and you put files on it. The closest Windows equivalent is a mapped network drive. You access folders and files there, but the share doesn’t show up in Computer Management as a local disk. You don’t get exclusive access to NFS drives. You don’t need a separate network cable for NFS – you just access your file shares over whatever network you want.
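If the block-versus-file distinction still feels fuzzy, here’s a toy sketch in Python. Nothing in it talks to real iSCSI or NFS: the "LUN" is just a local image file, the "share" is just a local folder, and the names `read_block`, `read_file`, `demo`, and the 512-byte `BLOCK_SIZE` are all made up for illustration. The point is only the shape of the request: block storage gets asked for numbered blocks, file storage gets asked for named files.

```python
import os
import tempfile

BLOCK_SIZE = 512  # assumed block size for this toy example


def read_block(device_path, block_number):
    """Block-style access: ask for block N and get raw bytes back.

    The storage side knows nothing about files or folders; the server
    that mounted the drive owns the file system on top of it.
    """
    with open(device_path, "rb") as dev:
        dev.seek(block_number * BLOCK_SIZE)
        return dev.read(BLOCK_SIZE)


def read_file(share_root, relative_path):
    """File-style access: ask for a file by name.

    The storage side owns the file system and hands back file contents.
    """
    with open(os.path.join(share_root, relative_path), "rb") as f:
        return f.read()


def demo():
    with tempfile.TemporaryDirectory() as tmp:
        # Stand-in for an iSCSI drive: a flat run of bytes with no names in it.
        fake_lun = os.path.join(tmp, "fake_lun.img")
        with open(fake_lun, "wb") as dev:
            dev.write(b"A" * BLOCK_SIZE + b"B" * BLOCK_SIZE)

        # Stand-in for an NFS share: a folder of named files.
        fake_share = os.path.join(tmp, "fake_share")
        os.mkdir(fake_share)
        with open(os.path.join(fake_share, "notes.txt"), "wb") as f:
            f.write(b"hello")

        return read_block(fake_lun, 1), read_file(fake_share, "notes.txt")
```

Calling `demo()` returns the second block of the fake drive and the contents of the named file. That difference in who owns the file system is also why a block device is usually handed to one server at a time, while a file share can be handed to many.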

Fibre Channel is a lot like iSCSI, except it uses fiber-optic cables instead of Ethernet cables. It’s a separate dedicated network just for storage, so you don’t have to worry as much about performance contention – although you do still have to worry.

Fibre Channel Over Ethernet runs the FC protocol over Ethernet cables, specifically 10Gb Ethernet. This gained niche popularity because you can use just one network (10Gb Ethernet) for both regular network traffic and storage network traffic rather than having one set of switches for fiber and one set for Ethernet.

Now that you’re armed with the basics, check out VMware’s PDF guide, then read on for my thoughts.

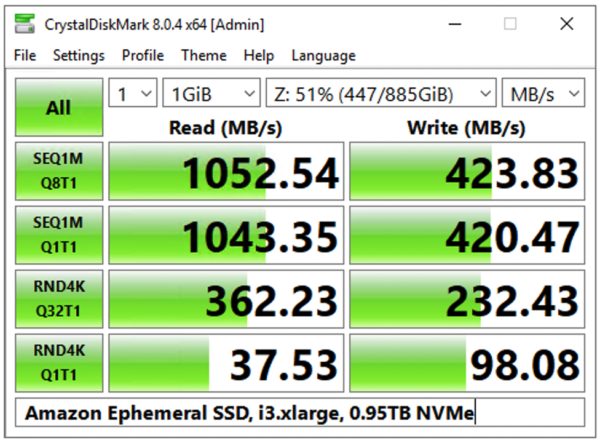

What I See in the Wild

1Gb iSCSI is cheap as all get out, and just as slow. It’s a great way to get started with virtualization because you don’t usually need much storage throughput anyway – your storage is constrained by multiple VMs sharing the same spindles, so you’re getting random access, and it’s slow anyway. It’s really easy to configure 1Gb iSCSI because you’ve already got a 1Gb network switch infrastructure. SQL Server on 1Gb iSCSI sucks, though – you’re constrained big time during backups, index rebuilds, table scans, etc. These are large sequential operations that can easily saturate a 1Gb pipe, and storage becomes your bottleneck in no time.
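To put rough numbers on that, here’s a back-of-the-envelope sketch in Python. The 500 GB backup size and the 90% efficiency figure are assumptions picked for illustration, not measurements, and `transfer_minutes` is a made-up helper. It also assumes the network link is the only bottleneck, which is the best case.

```python
def transfer_minutes(size_gb, link_gbps, efficiency=0.9):
    """Minutes to move size_gb gigabytes over a link_gbps gigabit/sec link.

    efficiency is an assumed fraction of line rate left over after
    protocol overhead; real numbers vary by environment.
    """
    # 1 gigabit/sec = 1000 megabits/sec = 125 megabytes/sec at line rate.
    usable_mb_per_sec = link_gbps * 1000 / 8 * efficiency
    seconds = size_gb * 1000 / usable_mb_per_sec
    return seconds / 60


for gbps in (1, 10):
    minutes = transfer_minutes(500, gbps)
    print(f"{gbps}Gb link: about {minutes:.0f} minutes for a 500 GB backup")
```

Under those assumptions, the 1Gb link tops out around 112 MB/sec, so the backup takes roughly 74 minutes, versus about 7 minutes on 10Gb. Any other traffic sharing the pipe only stretches that out.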

NFS is the easiest way to manage virtualization, and I see a lot of success with it. It’s probably an easy way to manage SQL clusters, too, but I’m not about to go there yet. It’s just too risky if you’re using the same network for both data traffic and storage traffic – a big stream of sudden network traffic (like backups) over the same network pipes is a real danger for SQL Server’s infamous 15-second I/O errors. Using 10Gb Ethernet mitigates this risk, though.

Fibre Channel is the easiest way to maximize performance because you rule out the possibility of data traffic interfering with storage traffic. It’s really hard to troubleshoot, and requires a dedicated full-time SAN admin, but once it’s in and configured correctly, it’s happy days for the DBA.

Want to learn more? We’ve got video training. Our VMware, SANs, and Hardware for SQL Server DBAs Training Video is a 5-hour training video series explaining how to buy the right hardware, configure it, and set up SQL Server for the best performance and reliability. Here’s a preview:

https://www.youtube.com/watch?v=S058-S9IeyM