sp_Blitz® v14 Adds VLFs, DBCC, Failsafe Operators, and More



For the last couple of versions, I hadn’t added any big features because I’d been focused on the plan cache improvements. Today, though, we’ve got a big one with all kinds of health-checking improvements – and they’re all thanks to your contributions.

- Lori Edwards @LoriEdwards http://sqlservertimes2.com – Did all the coding in this version! She did a killer job of integrating improvements and suggestions from all kinds of people.

- Chris Fradenburg @ChrisFradenburg http://www.fradensql.com – added a check to identify globally enabled trace flags

- Jeremy Lowell @DataRealized http://datarealized.com – added a check for non-aligned indexes on partitioned tables

- Paul Anderton @Panders69 – added a check for a high VLF (virtual log file) count

- Ron van Moorsel – tweaked and added a couple of checks, including whether tempdb is set to autogrow and whether linked servers are configured with the SA login

- Shaun Stuart @shaunjstu http://shaunjstuart.com – added several changes: the much-desired check for the last successful DBCC CHECKDB, a check to make sure that @@SERVERNAME is not null, and an update to check 1 to make sure the backup was done on the current server

- Sabu Varghese fixed a typo in check 51

- We added a check to determine if a failsafe operator has been configured

- Added a check, suggested by Chris Adkins, for transaction log files larger than data files

- Fixed a bunch of bugs with oddball database names (like ones containing apostrophes).
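If you’re curious what a few of these checks look at, here’s a rough sketch you can run by hand. This is not the actual sp_Blitz code, just plain queries against standard system views and commands, and the column aliases are made up for illustration. The real checks may well use different logic and thresholds.

```sql
-- Sketch only: approximations of a few checks, NOT sp_Blitz's own code.

-- Is @@SERVERNAME null? (It can be if the server was never registered
-- properly in sys.servers.)
SELECT @@SERVERNAME AS server_name;

-- Trace flags enabled globally.
DBCC TRACESTATUS(-1);

-- Growth settings for each tempdb file (growth = 0 means autogrow is off).
SELECT  name,
        type_desc,
        growth,
        is_percent_growth
FROM    sys.master_files
WHERE   database_id = DB_ID('tempdb');

-- Databases whose log files add up to more space than their data files.
-- size is stored in 8 KB pages, so cast to bigint before converting to MB.
SELECT  DB_NAME(mf.database_id) AS database_name,
        SUM(CASE WHEN mf.type_desc = 'ROWS'
                 THEN CAST(mf.size AS bigint) ELSE 0 END) * 8 / 1024 AS data_size_mb,
        SUM(CASE WHEN mf.type_desc = 'LOG'
                 THEN CAST(mf.size AS bigint) ELSE 0 END) * 8 / 1024 AS log_size_mb
FROM    sys.master_files AS mf
GROUP BY mf.database_id
HAVING  SUM(CASE WHEN mf.type_desc = 'LOG'
                 THEN CAST(mf.size AS bigint) ELSE 0 END)
      > SUM(CASE WHEN mf.type_desc = 'ROWS'
                 THEN CAST(mf.size AS bigint) ELSE 0 END);
```

These only read metadata, so they should be safe to try on a test server, but sp_Blitz itself is the easier way to get all of the checks at once.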

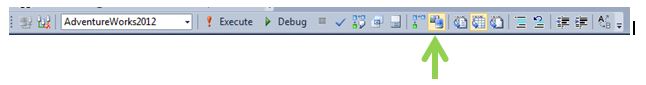

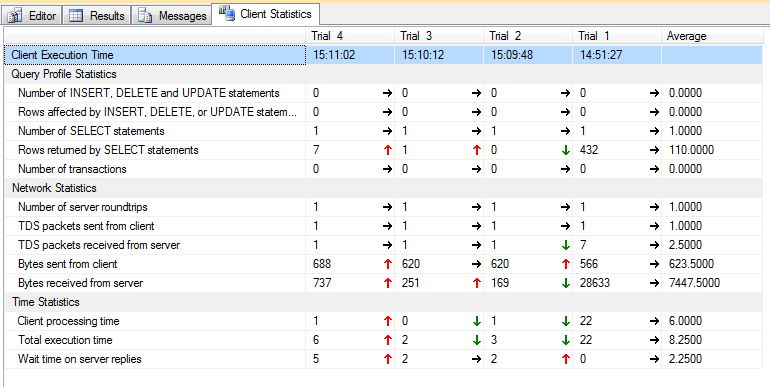

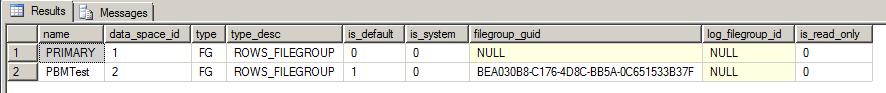

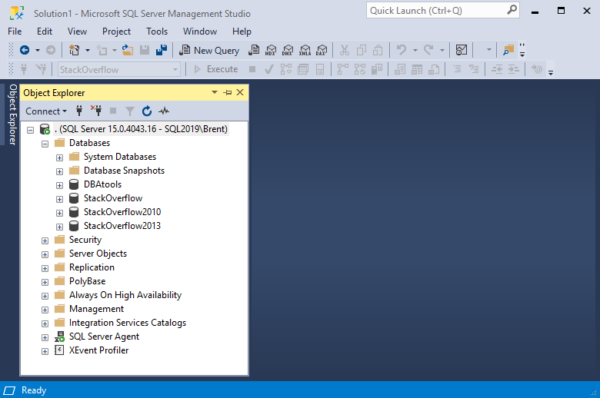

Here’s how to use it:

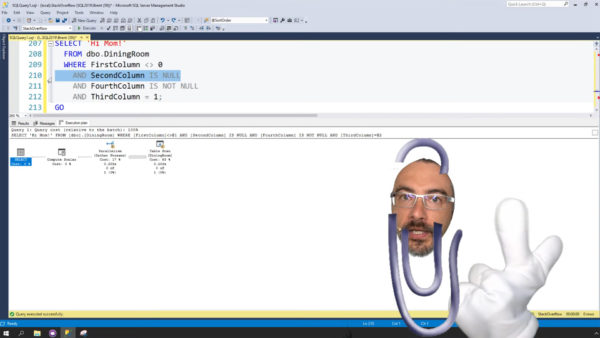

https://www.youtube.com/watch?v=2tgAAcA3Me8

Head on over to BrentOzar.com/blitz and let us know what you think. Enjoy!