There’s a Bug in sys.dm_exec_query_plan_stats.


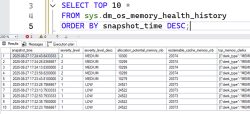

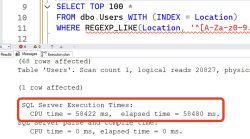

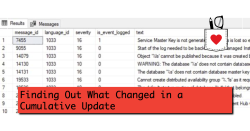

When you turn on last actual plans in SQL Server 2019 and newer:

```sql
ALTER DATABASE SCOPED CONFIGURATION SET LAST_QUERY_PLAN_STATS = ON;
```

The system function sys.dm_exec_query_plan_stats is supposed to show you the last actual query plan for a query. I've had really hit-or-miss luck with this…
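For context, here is a minimal sketch of how the function is commonly queried once that setting is on: it takes a plan_handle, so you feed it handles from the plan cache. The column aliases, the TOP (20) limit, and the ordering by use count are illustrative choices for this sketch, not the author's query.

```sql
-- Illustrative sketch: list cached queries alongside their last actual plan.
-- Assumes LAST_QUERY_PLAN_STATS = ON in the current database (SQL Server 2019+).
SELECT TOP (20)
    cp.usecounts,                        -- how often the cached plan was reused
    st.text        AS query_text,        -- SQL text behind the plan handle
    qps.query_plan AS last_actual_plan   -- NULL if the function returned nothing
FROM sys.dm_exec_cached_plans AS cp
CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) AS st
OUTER APPLY sys.dm_exec_query_plan_stats(cp.plan_handle) AS qps  -- keep rows even without a last actual plan
ORDER BY cp.usecounts DESC;
```

OUTER APPLY is used so that cached plans without a captured last actual plan still show up, which makes gaps in what the function returns easy to spot.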
