Microsoft’s Query Tuning Announcements from #PASSsummit


Microsoft’s Joe Sack & Pedro Lopes held a forward-looking session for performance tuners at the PASS Summit and dropped some awesome bombshells.

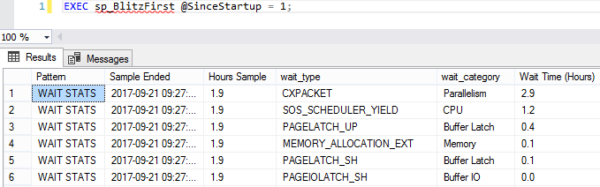

Pedro’s Big Deal: there’s a new parallelism wait in town alongside CXPACKET: CXCONSUMER. In the past, when queries went parallel, we couldn’t differentiate harmless waits incurred by the consumer thread (the coordinator, or the teacher from my CXPACKET video) from painful waits incurred by the producers. Starting with SQL Server 2016 SP2 and 2017 CU3, we’ll have a new CXCONSUMER wait type to track the harmless ones. That means CXPACKET will finally mean something.
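Once you’re on a build that has the new wait type, a quick way to see the split is to pull both waits side by side. This is just a rough sketch, assuming you have permission to query `sys.dm_os_wait_stats`. On older builds the CXCONSUMER row simply won’t show up.

```sql
-- Compare the two parallelism waits since the last restart (or stats clear).
-- CXCONSUMER only appears on builds that include the new wait type.
SELECT  wait_type,
        waiting_tasks_count,
        wait_time_ms,
        signal_wait_time_ms
FROM    sys.dm_os_wait_stats
WHERE   wait_type IN (N'CXPACKET', N'CXCONSUMER')
ORDER BY wait_time_ms DESC;
```

These numbers are cumulative, so to judge a specific workload you’d want to sample before and after and compare the difference.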

Joe’s Big Deal: the vNext query processor gets even better. Joe, Kevin Farlee, and friends are working on the following improvements:

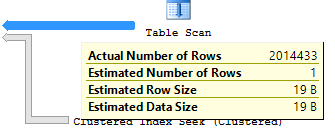

- Table variable deferred compilation – so instead of getting crappy row estimates, table variables will get updated row estimates, much like 2017’s interleaved execution of MSTVFs.

- Batch mode for row store – in 2017, to get batch mode execution, you have to play tricks like joining an empty columnstore table to your query. vNext will consider batch mode even if there are no columnstore indexes involved.

- Scalar UDF inlining – so scalar functions will perform like inline table-valued functions, and won’t cause the calling queries to go single-threaded.
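If you haven’t seen the empty-columnstore trick mentioned in the batch mode bullet, here’s roughly what it looks like today. Treat it as a sketch: `dbo.Sales` and `dbo.BatchModeTrick` are made-up names for illustration, and whether the optimizer actually picks batch mode still depends on the query and the plan it costs out.

```sql
-- Hypothetical rowstore table standing in for your real data.
CREATE TABLE dbo.Sales
(
    SaleId     INT IDENTITY(1,1) PRIMARY KEY,
    CustomerId INT   NOT NULL,
    Amount     MONEY NOT NULL
);

-- Hypothetical empty table whose only job is to carry a columnstore index.
CREATE TABLE dbo.BatchModeTrick
(
    Id INT NOT NULL,
    INDEX CCI_BatchModeTrick CLUSTERED COLUMNSTORE
);

-- The join can never match (1 = 0), so results are unchanged,
-- but the columnstore index makes batch mode eligible for the plan.
SELECT  s.CustomerId,
        SUM(s.Amount) AS TotalAmount
FROM    dbo.Sales AS s
LEFT JOIN dbo.BatchModeTrick AS b
        ON 1 = 0
GROUP BY s.CustomerId;
```

To check whether it worked, look at the operators in the actual execution plan and see whether their execution mode says Batch instead of Row.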

This is all fantastic news. If you’re in Seattle and you wanna learn more, Kevin Farlee will be doing a 20-minute demo at 1PM in the Microsoft Theater in the exhibit hall. See you there!