We need your help.

Doctors Without Borders is a truly awesome charity. Medical professionals volunteer their own time to go to war-torn countries and tackle urgent health problems.

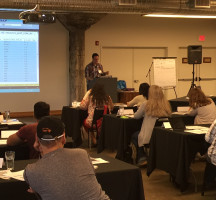

SQL Server community member, MCM, and all-around-good-guy Argenis Fernandez organizes Argenis Without Borders, a giving event to help. Last year, the SQL Server community helped raise $13,000 for this well-deserving charity.

Now it’s your turn. Please donate $25 if you can. It would mean a lot to me, and I know it’d mean a lot to people all over the world who urgently need medical help. You’ve got a great job – it’s your turn to give back.
