Nodes Offline: Why Your SQL Server VM Can’t Use All Its Virtual Hardware

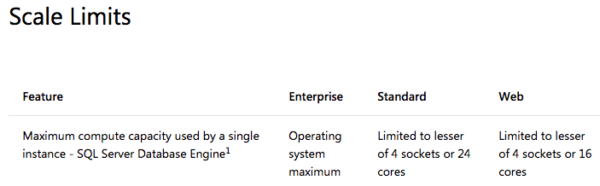

When you install SQL Server Standard or Web Edition in a virtual machine with more than 4 cores, things can look like they’re just fine – when in reality, you’re not using anywhere near your server’s potential. See, these editions have a tricky limitation – let’s look at the documentation:

Here’s the catch: the lesser of 4 sockets or 24/16 cores. Not the greater – the lesser. So if you configure a virtual machine with, say, 8 virtual processors, you have to be really sure that you don’t accidentally end up with 8 sockets. Here’s a screenshot of Task Manager in a poorly configured VM:

At the right, see how it says “Sockets: 8”? That means we set the VM up with 8 CPUs, each of which has a single core. Standard Edition will only use the first 4 cores here, and that’s it. No matter how much load our SQL Server gets, it’ll only use 4 cores – meaning CPU will only hit 50% busy. Our wait stats will show SOS_SCHEDULER_YIELD out the wazoo, but our sysadmins will say, “There’s plenty of available CPU power – you’re just not using it.”

Here’s an example of a reader who ran into the problem after reading this post – note how their first four cores are getting hammered, and the rest are sitting idle:

@BrentO saved my day. Thank you so much https://t.co/y4xS1T3nyW pic.twitter.com/Pay2X3yuPw

— Jose Miguel (@reckenna) June 14, 2018

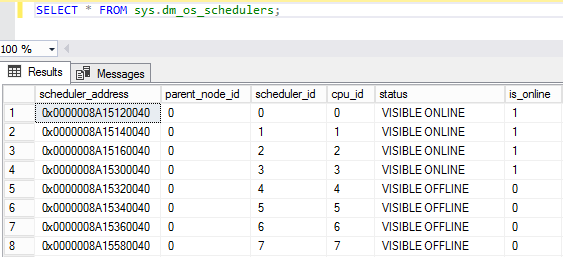

This is notoriously tricky because if you open SQL Server Management Studio, right-click on the server, and click Properties, it looks like everything’s okay. You see all 8 cores – you just can’t use ’em because they’re offline, as shown in sys.dm_os_schedulers:

There’s another clue buried in the SQL Server startup log:

SQL Server detected 8 sockets with 1 cores per socket and 1 logical processors per socket, 8 total logical processors; using 4 logical processors based on SQL Server licensing. This is an informational message; no user action is required.

Just informational. No big deal. Carry on.

How to tell if you’re having this problem – and fix it

Just run sp_Blitz, and look for the warning of “CPU Cores Offline” or “Memory Nodes Offline.” This is one of those things I found once in the field, figured I’d better check for it, and now I find it all over the place. I totally understand why – from the SQL Server side, nothing looks out of the ordinary. (SQL ConstantCare® finds this problem on dozens of servers per month, for free!)

The solution is easy:

- Shut down the VM

- Change the number of cores per socket – in my case, I could set it to a single-socket, 8-cores-per-socket VM, or a 2-socket quad-core, or a 4-socket dual-core

- Turn the VM back on

And enjoy your newfound power. Be aware, of course, that your CPU percent-busy may suddenly get higher (worse) – but that’s a good thing, because it means you’re using all that horsepower you paid for.

Another cause: running Standard Edition on big servers

SQL Server Standard Edition is limited as to how many cores it can access: 2014 & prior cap out at 16 cores (32 with hyperthreading), and 2016 & newer cap out at 24 cores (48 with hyperthreading.) If you try to run one of these on, say, a 64-core server, you’ll encounter this error.

You could use all of your cores by upgrading to Enterprise Edition, but…really, who wants to pay $7,000 per core to do that? Instead, use affinity masking to configure alternate cores as active so that you can balance the workloads more effectively across the underlying physical CPUs.

For example, say you’ve got 2014 Standard (which maxes out at 16 cores, 32 hyperthreading), and you’re running it on a 2-socket, 16-core-per-CPU server with hyperthreading enabled (for 64 total cores.) You would configure SQL Server to use every other core so that it uses 32 of the 64 cores, but just every other core. That way, both of your 16-core CPUs will get activity, and it’ll balance out the heat & memory better between the processors (instead of only lighting up the threads on the first 16-core processor.)

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

31 Comments. Leave new

Thank you so much for this! I just saw this message in the error logs of a SQL Server VM, the other day. Your post did three things.

1. Reminded me that I saw the error message (I forgot because, SQUIRREL!)

2. Told me what it is

3. Told me how to fix it.

I can’t thank you guys enough for all you do.

I’m curious if there are any pros/cons for the different ways that a VM could be set up in regards to cpus/#cores/#sockets. IE, does it make a difference if SQL Server sees 1 socket with 4 cores vs 4 sockets with 1 core. Unfortunately I don’t really have a setup to test this with at the moment.

The biggest differences come down to the underlying physical architecture of the host, and Non-Uniform Memory Architecture (NUMA).

For physical servers with multiple sockets, the memory banks are separated per socket, so that each socket has a “preferred” memory node – all sockets can access memory in all nodes, but there’s higher latency to access memory from a non-preferred node. SQL Server is a NUMA-aware application, so if you’re running SQL on a physical machine, it will keep memory allocations for a given query in the same memory node as the CPU core(s) the query is running on.

Your hypervisor is also NUMA-aware, but it’s also designed to make things as simple for configuration as possible. When it gets a request to start up a new virtual machine, it will look at the current load on each CPU socket and on each memory bank, and try to find the best fit for the virtual machine (possibly rebalancing running VMs to other sockets & NUMA nodes if necessary). However, the default settings implicitly assume the CPU vs memory requirements of a standard web/application server, which will have a relatively small number of CPU cores & small RAM allocation relative to the total power of the machine, such that they’ll easily fit within a single NUMA node. For SQL guests (or other high performance servers, like an Exchange backend), it’s much more likely that you’ll run into guests that are too large to comfortably fit on a single NUMA node. In that case, VMWare and Hyper-V both provide a way that you can specify the socket layout for the guest, and then they’ll allocate the physical resources accordingly (so you can have your SQL server spread across 2 sockets & 2 NUMA nodes, taking 4 cores and 128GB of RAM from each node out of the 20 cores & 256GB per node your server has, instead of trying to max out the RAM allocation on a single node and being unable to since the hypervisor OS needs a little plus any other VM guests).

Basically… if you have a relatively small guest (less than 25% of the resources of a single NUMA node on the underlying physical hardware), there’s little to no performance benefit for overriding the default behavior of the hypervisor. If you have a larger guest, especially if you have multiple larger guests on the same host, splitting them up so that they balance across multiple nodes will help resource contention (since the hypervisor can’t arrange 3 guests that all need 50% of a single NUMA node’s RAM capacity across 2 NUMA nodes without one of them getting “non-preferred” and thus higher-latency memory), and thus will help with performance of the guests.

Hello 🙂

Thank you Brent for the original post, and thank you Noah for all that information on NUMA.

A while ago I heard a rumour (myth?) that if you assign a large number of cores (or it could have been vCPUs) to a virtual machine it can slow the machine down because it will wait for that number of cores (or vCPUs) to become available on the host before granting the guest CPU cycles on the host. Just wondering if you had heard of this or knew of any truth in this rumour please? 🙂 **It was in relation to VMware

Your question is old, but I have definitely seen that behavior – as recent as version 6.7.

In older versions of VMWare – early 5.x and earlier, there was a point where you could keep adding more processors to a VM and it wouldn’t perform any better. It would just use smaller and smaller percentages of CPU. At the time I only had one client that had the money for SQL enterprise (2012), but their thing was to spend a massive amount of money on cool software, under-invest in hardware, and I never saw a SQL VM with a ton of cores. In other applications that handled high concurrent usage (Terminal Servers, Exchange Servers, busy web servers), we observed that adding more processors would actually make performance take a nose dive. That was usually about 6 or 8 cores, but it depended on the OS and the application it was running. It seemed to be worst on RDS servers, best on Exchange servers, and worse when the OS was 2008 VS 2012.

Just a couple weeks ago I had this same issue with a ‘data warehouse’ (Really just a copy of the data structure in the OLTP server, but with full data history) server that runs SQL 2012 in Server 2012 on a slightly overprovisioned ESXI 6.7 box. It uses extremely complex and horribly implemented views so just about everything goes parallel even with a ctfp of 50. With 6 cores and a max DOP of 6, it was struggling, would hit massive CX packet waits all the time, Idera would alert of waits on CPU resources as long as 30 milliseconds dozens of times per day, but would hang out around about 30% CPU all the time – not obviously CPU bound. Decreasing the max DOP to 3 instantly dropped the CPU to about 10%, reports became notably faster and idera still alerts a few times per day on waiting on CPU, but it isn’t going out 30-40 times per day anymore and usually only with waits in the teens and occasionally the low 20s.

How does NUMA Nodes play into this?

If you have the SQL Server DB Engine on an 8 socket machine as well as SSRS, are both the DB Engine and SSRS locked down to the first 4 sockets? Could they be configured such that DB uses the first 4 and SSRS uses the other 4?

Eric – I don’t do SSRS, sorry. It’s SQL Server in name only – Microsoft just throws it in the box as a free prize, but it’s not like it shares the same code base or admin tools. (Same thing with SSIS, SSAS, etc.)

Thanks Brent! After reading this I checked our SQL Server VM configurations and contacted our hosting company to get them to reconfigure any offending VMs. Result, I improved performance on the offending machines for free.

I will remember this, and add it to the specifications for any future SQL Server VMs we order.

Alexander – great, glad we could help!

I’m seeing this with SQL 2008 R2 Enterprise Edition, on a 16 NUMA nodes VM. Each node has 2 VCPUs and SQL Server brings online only nodes 0 to 7. Is this a fixed limit in 2008?

Ricardo – for personal help on a given server, your best bet is to hit a Q&A site like https://dba.stackexchange.com, or hire a consultant.

Excellent article, Brent. I ran into it by chance w/blitz, and have since learned it is a factor on a LOT of my customer’s VMs… and it even explains some of the performance troubles they’ve been seeing!

Thank you very much. Good stuff.

Dude…this stupid thing just got us yesterday with a virtual prod server with 8 sockets and 8 cores. Why is the default 8:8? We changed that setting and queries that were taking over 2 hours (because of all the freakin’ paging) took 10 seconds…

This article is what was referenced and used to address the configuration. As always, thanks for sharing your knowledge!

My pleasure, glad I could help!

Just wanted to say thanks for this. I was testing a SQL server on a VM host with faster CPUs and couldn’t understand why my queries were running slower. Fixed the socket layout and now the licensing choke-hold has been released!

Bob – awesome, glad I could help!

Question: Im running a VM with SQL standard. It has 6 sockets. I will only have to pay SQL license for 4 CPUs, right? 2 Cores has the “Visible Offline” status.

You have to license all of the cores visible to the operating system. You’d license 6 cores, and reconfigure the VM to be 1 socket, 6 cores (or 2 sockets, 3 cores each.)

If you only want to license 4 cores, then reconfigure the VM to be only 4 cores.

Question: is it true that starting in SQL Server 2016 one scheduler is created per one socket regardless of how many cores the socket has? So that, for example, if my VM has 4 sockets, 1 core each then SQL Server 2016 will create 4 schedulers. If my VM has 4 sockets, 2 cores each then SQL Server 2016 will still create only 4 schedulers?

Marina – for questions, go ahead and hit a Q&A site like https://dba.stackexchange.com.

Hi all

I encountered 2 x SQL2017 Standard Edition running on one VM with a 8:8 setup.

No affinity masking is set up either, I can see 4 x CPU being smashed and 4 not. Any recommendations on how to get better use of cores and should i also use affinity masking?

Thanks

Client has lots of resources on underlying hardware so basically “I can go for it” and assign what is needed.

Hi! For personalized help with your client’s production systems, click Consulting at the top of the page.

If a 2016 server running databases are set to 2012 compatibility mode will this have an effect on the number of processors that SQL standard edition can use?

ie. will it be limited by 2014 and prior or (16 cores, 32 hyperthreading) or 2016 24 and 48 HT?

No.

Hello,

Very nice post. I found it thanks to SQLConstant Care!

btw… I know this is not VMWare but your link to fix it, VMware instructions, is broken.

Thanks

great info here and led us to resolve our issues where only 4 cpus were being used instead of 24 that the standard edition allows.

was scratching my head as to what is going on.

i still dont fully get why the default cpu topology option in vmware doesnt work but hardcoding the settings to 8 cores per socket / 8 numa nodes fixed the issue.

we’ll need to check all vms now and adjust accordingly if needed.

many thanks!

Thanks a lot for the post, the hint with the first entries in the server log helped to find my problem! I had the same behaviour on an inerited Enterprise SQL 2019, used “only” 20 Cores out of 32. Why??? Makes no sense, should be unlimited. But the log showed me the issue: “Warning: Enterprise Server/CAL license used for this instance. This edition limits SQL Engine CPU utilization to 20 physical cores, or 40 logical cores with Hyper-threading enabled”.

So it was the wrong edition…

Great post! As usual! Thanks Brent! FYI, link http://www.virtualizationsoftware.com/virtual-cores-virtual-sockets-vcpu-explained/ is dead. It leads to https://www.actualtechmedia.com/, or at least I can not find the instructions

Thanks for the heads up, fixed!