These improvements go great with cranberries. The food, not the b – actually, they go pretty well with the band, too.

sp_Blitz Improvements

- #568 – @RichBenner added a check for the default parallelism settings

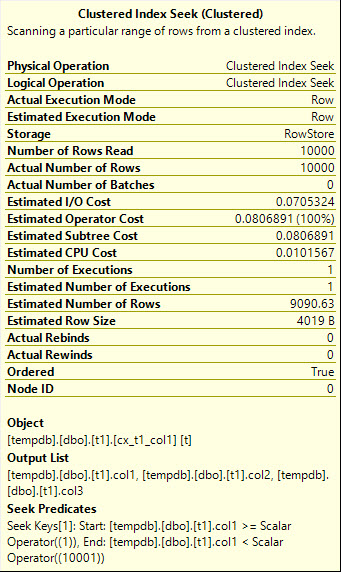
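If you want to eyeball those settings yourself, something like the query below would do it. This is a rough sketch, not the actual sp_Blitz check: it assumes "default" means the out-of-the-box values of 5 for cost threshold for parallelism and 0 for max degree of parallelism, and the `setting_status` column name is made up for illustration.

```sql
-- Sketch only: NOT the real sp_Blitz logic, just a quick manual look.
-- Assumes the shipped defaults are 5 (cost threshold) and 0 (MAXDOP).
SELECT  name,
        value_in_use,
        CASE
            WHEN name = N'cost threshold for parallelism'
                 AND CONVERT(INT, value_in_use) = 5 THEN 'still at default'
            WHEN name = N'max degree of parallelism'
                 AND CONVERT(INT, value_in_use) = 0 THEN 'still at default'
            ELSE 'changed'
        END AS setting_status   -- hypothetical column name
FROM    sys.configurations
WHERE   name IN (N'cost threshold for parallelism',
                 N'max degree of parallelism');
```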

sp_BlitzCache Improvements by @BlitzErik

- #495 – add warning for indexed views with missing stats

- #557 – bug fix – don’t alert on unused memory grants if query is below the server’s minimum memory grant

- #583 – add warning for backwards scans, forced index usage, and forced seeks or scans

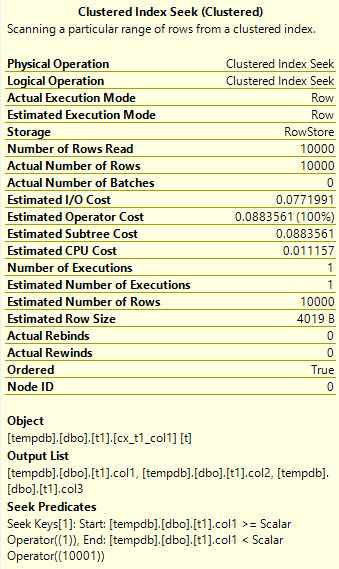

sp_BlitzIndex Improvements, Mostly by @BlitzErik

- #566 – new @SkipStatistics flag, enabled by default. This means you only get the stats checks if you ask for them. (We were having some performance problems with them in last month’s version.)

- #567 – bug fix – now adds PERSISTED to a field definition if necessary

- #571 – bug fix – better checks for computed columns using functions in other schemas

- #574 – bug fix – long filter definitions over 128 characters broke QUOTENAME (it returns NULL for input longer than 128 characters)

- #578 – bug fix – @RichBenner made sure SQL 2005 users understand that they’re unsupported
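Since the stats checks are now opt-in, asking for them should look roughly like this. It's a minimal sketch that assumes sp_BlitzIndex is installed in the database you're connected to; `YourDatabase` is a placeholder, not a real name.

```sql
-- Sketch: turn the statistics checks back on for one run.
-- 'YourDatabase' is a placeholder; swap in your own database name.
EXEC dbo.sp_BlitzIndex
    @DatabaseName   = N'YourDatabase',
    @SkipStatistics = 0;   -- defaults to 1 (skip) as of this release
```

If last month's performance problems hit you, try this on a smaller database first.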

Go download it now. Enjoy!