Moving Databases with ALTER DATABASE


True story

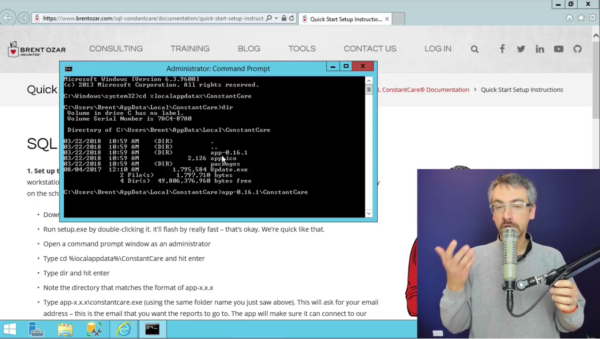

A long time ago, I had to actually do stuff to databases. One thing I had to do was move data files around. Maybe some knucklehead had put system databases on the C: drive, or a LUN was filling up, or we got a new LUN. You know, whatever. Natural curiosity oft leads one to the internet. If one does not succumb to food and cats, one may find useful information. Or anonymous message boards. Sort of a toss-up. What I found was this article. Weird, right? 2009. Brent said to use ALTER DATABASE. It’s new and pretty and smart people do it. What Brent didn’t do was explain how it’s done, or link to how it’s done. I felt cold and alone. Abandoned. Afraid. “Great post, Brent,” I said sarcastically, and set out to figure out how to work this magic on my own.

I turned to Books Online (BOL), the destination of all self-loathing people. If you scroll down to the bottom, way down at the bottom, the syntax is there. Of course, moving system databases is a horse of a different color, but hopefully you don’t need to do that. For user databases, it’s much simpler:

- Alter the file metadata to the new path

- Set the database offline

- Physically move the file

- Set the database back online

Easy enough!

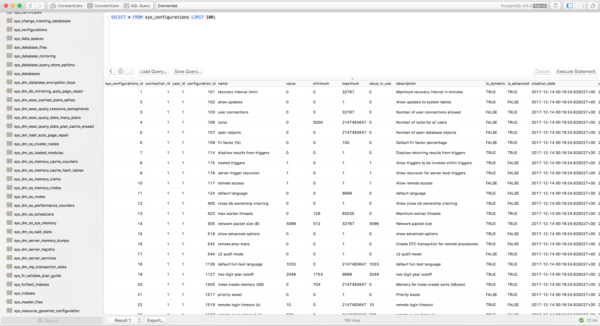

Run ALTER DATABASE with the new location. Here we’re moving the data file; if we were moving the log file, its logical name would probably end in “_log” or something. You can find the logical names and current paths in sys.master_files. The only thing it can’t tell you is where you’re moving the file to. Just don’t actually move it to C:\Whatever, or you may run into problems later. Also, FILENAME needs the full path including the file name itself (Sample.mdf), not just the folder. If you leave the file name off, SQL Server won’t complain until you try to set the database back online. Yay!

```sql
-- NAME is the logical file name; FILENAME is the full new path, including the file name.
ALTER DATABASE [Sample] MODIFY FILE ( NAME = Sample, FILENAME = 'C:\Whatever\Sample.mdf' );
```
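Before running the statement above, you can look up the logical name and current physical path of each of the database’s files. A minimal lookup, assuming the database is named Sample as in the example:

```sql
-- List every file that belongs to Sample, with the logical name MODIFY FILE expects.
SELECT mf.file_id,
       mf.type_desc,                -- ROWS for data files, LOG for the transaction log
       mf.name AS logical_name,     -- goes in NAME = ...
       mf.physical_name             -- current location on disk
FROM sys.master_files AS mf
WHERE mf.database_id = DB_ID(N'Sample');
```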

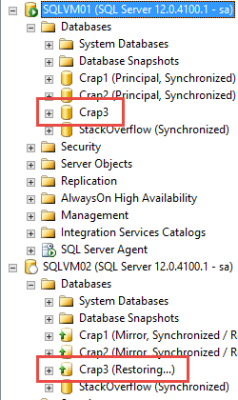

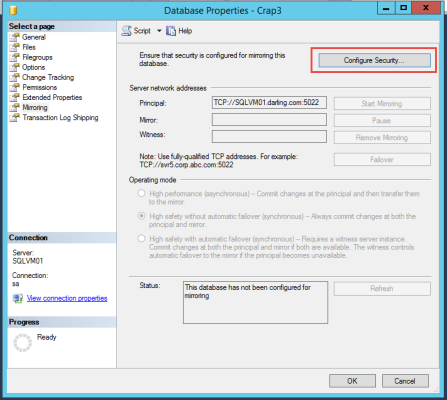

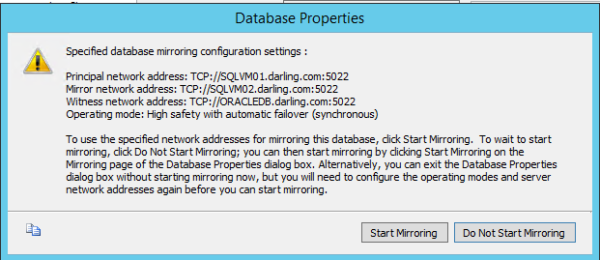

This is the part you need to think through. People have to be cool with the database being offline while you move the physical file; this is not a seamless transition. If you’re moving large enough databases, you may want to consider an alternative method, like Mirroring or Log Shipping. They take more work, but you get near-zero downtime out of them. You may want to stage a mock file move to test LUN-to-LUN copy speeds and see how many GB you can move per minute. That way you’ll at least be able to estimate how long the outage will last. Assuming all that is cool, go ahead and take the database offline.
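Before you do, here’s a rough way to turn that measured copy speed into an outage estimate. This is a sketch, not a promise: @gb_per_minute is a hypothetical placeholder for whatever rate your own test copy showed, and sys.master_files reports size in 8 KB pages, so the query converts that to GB first. Add up the rows for the files you’re actually moving.

```sql
-- Hypothetical rate: replace with the GB/minute you measured in a test copy between the two LUNs.
DECLARE @gb_per_minute decimal(10, 2) = 1.50;

SELECT mf.name AS logical_name,
       mf.physical_name,
       -- size is stored in 8 KB pages: pages * 8 = KB, / 1024 = MB, / 1024 = GB
       CAST(mf.size AS bigint) * 8 / 1024.0 / 1024.0 AS size_gb,
       CAST(mf.size AS bigint) * 8 / 1024.0 / 1024.0 / @gb_per_minute AS est_copy_minutes
FROM sys.master_files AS mf
WHERE mf.database_id = DB_ID(N'Sample');
```

The estimate only covers the copy itself, so leave some slack for taking the database offline and bringing it back.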

```sql
ALTER DATABASE [Sample] SET OFFLINE;
```
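If other sessions still hold locks on the database, a plain SET OFFLINE can sit there waiting on them. Assuming you’ve already told everyone the outage is happening, one option is to add a termination clause, which rolls back open transactions and disconnects other sessions before taking the database offline:

```sql
-- Roll back open transactions, disconnect other sessions, then take Sample offline.
ALTER DATABASE [Sample] SET OFFLINE WITH ROLLBACK IMMEDIATE;
```

Anything those sessions hadn’t committed gets rolled back, so this belongs after the warning emails, not before.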

Now you gotta hurry up and get that file moved. How you do that is up to you. You may prefer to just use Windows Explorer, since it shows a progress bar and tells you copy speeds. Good stuff to know if people ask for updates, right? Just to fill space, here’s a PowerShell command. I still hate PowerShell.

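```powershell
# C:\Whatever must already exist as a folder; otherwise Move-Item moves the file to a new file named C:\Whatever.
Move-Item -Path "D:\Data\Sample.mdf" -Destination "C:\Whatever" -Force
```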

Once that finishes, put your database back online.

```sql
ALTER DATABASE [Sample] SET ONLINE;
```
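Once it’s back online, it doesn’t hurt to check that SQL Server agrees about where the files live. A minimal check, assuming the database is still named Sample as in the examples above:

```sql
-- Confirm Sample is ONLINE and its files point at the new location.
SELECT d.name AS database_name,
       d.state_desc,                -- should read ONLINE
       mf.name AS logical_name,
       mf.physical_name             -- should show the new path
FROM sys.databases AS d
JOIN sys.master_files AS mf
    ON mf.database_id = d.database_id
WHERE d.name = N'Sample';
```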

If you find yourself having to do this often, or if you have to migrate a group of databases, it’s probably worth scripting out.
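As a starting point, here’s a hedged sketch that only generates the MODIFY FILE statements for data files in user databases, keeping each file’s current file name. @new_path is a hypothetical target folder, and the sketch assumes no logical or physical file names contain single quotes. You’d still have to take each database offline, move the files, and bring it back online, so read the output before running any of it.

```sql
-- Hypothetical destination folder on the new LUN; note the trailing backslash.
DECLARE @new_path nvarchar(260) = N'E:\NewData\';

SELECT DB_NAME(mf.database_id) AS database_name,
       N'ALTER DATABASE ' + QUOTENAME(DB_NAME(mf.database_id))
       + N' MODIFY FILE ( NAME = N''' + mf.name
       + N''', FILENAME = N''' + @new_path
       -- Keep the existing file name: everything after the last backslash.
       + RIGHT(mf.physical_name, CHARINDEX(N'\', REVERSE(mf.physical_name)) - 1)
       + N''' );' AS move_command
FROM sys.master_files AS mf
WHERE mf.database_id > 4            -- skip master, tempdb, model and msdb
  AND mf.type_desc = N'ROWS'        -- data files only; use N'LOG' for log files
  -- AND DB_NAME(mf.database_id) IN (N'Sample')  -- optionally limit to specific databases
ORDER BY database_name, mf.file_id;
```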

There you have it

It’s that easy to do. Just make sure you have adequate backups, in case something goes wrong. I take no responsibility for what happens to your data files when they copy across your SAN, or anywhere else.

Thanks for reading!