One of my favorite things about my job is being able to take the pulse of how real companies are managing their databases. I don’t wanna be locked in an ivory tower, preaching down to you, dear reader, about how you should be doing five million things a day – when in reality, you’re struggling to get an hour of real work done because you’ve got so many meetings.

But sometimes I wonder – am I out of touch? When I was a DBA, I remember struggling with backups and corruption checking – has that gotten easier? Have today’s DBAs started using more automation and tools to protect their estate? Is Transparent Data Encryption catching on? Did DBAs start using Extended Events for monitoring when I wasn’t looking?

And it’s important because I wanna build the right training material and scripts for our customers. When I see a problem trending, I want to be able to give people the right information to fix it, fast.

When we launched SQL ConstantCare®, I was excited to see what the data would reveal. 86 users have opted into public data sharing for 285 servers hosting 11,521 databases.

Here are some of the interesting things we’ve learned so far.

SQL Server 2017 adoption is still slow.

It’s spring 2018, but SQL Server 2017 still has less adoption than SQL Server 2008, let alone 2008 R2. I’m really curious to see how this progresses as we move towards the end of support for 2008 and 2008 R2 next year.

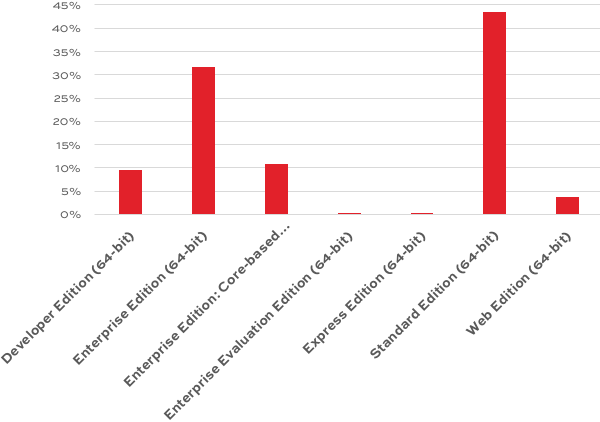

In terms of edition, I went in with no expectations – I really have no idea what our readership looks like, and it’s interesting to see numbers:

Over 50% of us had basic backup issues.

58% of us had at least one database (37% of databases overall) that hadn’t had a full backup in the last week. Now this is a little tricky: in the initial round of collection, I noticed a trend that a lot of people would add a development server first, then look at the email advice to decide whether they wanted to add more servers.

However, I also noticed a trend amongst the replies – paraphrasing:

“You know, I’d forgotten about those databases. We restored that a while ago to get some data out of it, and then I guess I forgot to delete it. I’ll go delete those now.”
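If you want to look for forgotten databases like that on your own servers, here is a minimal sketch that lists databases with no full backup recorded in the last week. It is not how SQL ConstantCare collects its data. It assumes the backup history in `msdb.dbo.backupset` is intact and that backups are taken with native SQL Server backups (or a tool that writes to that history), and the 7-day threshold simply mirrors the figure above.

```sql
-- Databases with no full backup recorded in msdb in the last 7 days.
-- Assumption: msdb backup history has not been purged more aggressively than that.
SELECT d.name                    AS database_name,
       MAX(b.backup_finish_date) AS last_full_backup
FROM sys.databases AS d
LEFT JOIN msdb.dbo.backupset AS b
       ON b.database_name = d.name
      AND b.type = 'D'                 -- 'D' = full database backup
WHERE d.name <> N'tempdb'              -- tempdb cannot be backed up
  AND d.state_desc = N'ONLINE'
GROUP BY d.name
HAVING MAX(b.backup_finish_date) IS NULL
    OR MAX(b.backup_finish_date) < DATEADD(DAY, -7, GETDATE())
ORDER BY last_full_backup;
```

A NULL in `last_full_backup` means msdb has no full backup on record for that database at all, which is a good prompt to ask whether it should still exist.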

Similarly, 38% of all servers had databases in full recovery model, but weren’t doing transaction log backups on them.
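A similar sketch can flag databases in full recovery model with no recent log backup. The one-day threshold here is an arbitrary assumption for illustration, and the `database_id > 4` filter is an assumption that you only care about user databases (it skips master, tempdb, model, and msdb).

```sql
-- User databases in FULL recovery with no log backup in the last day.
-- Assumptions: the 1-day threshold is illustrative; msdb history is intact.
SELECT d.name                    AS database_name,
       d.recovery_model_desc,
       MAX(b.backup_finish_date) AS last_log_backup
FROM sys.databases AS d
LEFT JOIN msdb.dbo.backupset AS b
       ON b.database_name = d.name
      AND b.type = 'L'                 -- 'L' = transaction log backup
WHERE d.recovery_model_desc = N'FULL'
  AND d.database_id > 4                -- skip system databases
  AND d.state_desc = N'ONLINE'
GROUP BY d.name, d.recovery_model_desc
HAVING MAX(b.backup_finish_date) IS NULL
    OR MAX(b.backup_finish_date) < DATEADD(DAY, -1, GETDATE())
ORDER BY d.name;
```

For each database this returns, the choice is usually between scheduling log backups and switching to simple recovery, depending on how much data loss the business can tolerate.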

Between dropping databases that shouldn’t be around (shout out to the multiple folks that had AdventureWorks in production), plus suddenly clearing away unnecessarily giant log files, I can see how the database size tends to drop quickly on servers within the first few days of setting up SQL ConstantCare. (We’ll do an ROI study on that over time.)

We have a mixed record on corruption checking.

93% of all databases had a CHECKDB in the last 2 weeks! That’s awesome!

However, things were a lot worse when it came to enabling checksums for page verification. 5% of databases didn’t have checksums turned on, which sounds small, but it was spread across 54% of the users. Look at the person sitting next to you: either this issue affects you, or it affects them. One of you needs to buckle up.
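To see which side of that coin flip you are on, a quick check against `sys.databases` is enough. This is a minimal sketch, and `YourDatabase` in the commented-out fix is a placeholder name, not something from the data above.

```sql
-- Databases that are not using CHECKSUM page verification.
SELECT name,
       page_verify_option_desc         -- CHECKSUM, TORN_PAGE_DETECTION, or NONE
FROM sys.databases
WHERE page_verify_option_desc <> N'CHECKSUM'
ORDER BY name;

-- To fix one database (placeholder name, uncomment to run):
-- ALTER DATABASE [YourDatabase] SET PAGE_VERIFY CHECKSUM;
```

Changing the setting does not protect existing pages right away: a checksum is only written the next time each page is modified and written back to disk.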

We’re still not patching.

- 16% of customers are running a completely unsupported build of 2008 or newer (meaning they haven’t applied a service pack in a few years)

- 10% of customers are running builds with known corruption or security escalation bugs

- Hardly anyone is patched for Meltdown/Spectre
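If you are not sure which build a server is on, `SERVERPROPERTY` reports it. The sketch below only shows the current build; deciding whether that build is supported, or affected by a known bug, still means comparing it against Microsoft’s published build lists by hand.

```sql
-- Current version, service pack level, cumulative update level, and edition.
SELECT SERVERPROPERTY('ProductVersion')     AS product_version,       -- e.g. major.minor.build.revision
       SERVERPROPERTY('ProductLevel')       AS product_level,         -- RTM, SPn, ...
       SERVERPROPERTY('ProductUpdateLevel') AS product_update_level,  -- CUn; NULL on builds that do not report it
       SERVERPROPERTY('Edition')            AS edition;
```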

As a teacher and consultant, I gotta think hard about that. I don’t have easy answers. It’s not like I can just build a presentation and magically get the business to agree to outage windows.

Long term, I’m thinking of it as a data problem: can I tie people’s server problems to a specific CU that has a fix for their issues? That won’t be on the horizon for the product in 2018, but it’s an interesting long-term challenge.

37% of us are experiencing poison waits.

When RESOURCE_SEMAPHORE, RESOURCE_SEMAPHORE_QUERY_COMPILE, and THREADPOOL strike, it can feel like your SQL Server service is completely locked up – even though you can remote desktop into the base OS and it responds just fine.

When I talk to training classes about that, I’ve been saying that most of you will be able to go your entire career without having to troubleshoot those issues. Turns out I’m completely wrong, and I need to start talking about these more often, like blogging about how to recognize the symptoms even if you’re not monitoring wait stats.
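For anyone who does want to check wait stats directly, here is a minimal sketch that looks for the three waits named above. The numbers in `sys.dm_os_wait_stats` are cumulative since the last service restart (or since the stats were manually cleared), so a nonzero value says the wait has happened at some point, not that it is happening right now.

```sql
-- Cumulative poison waits since the last restart or wait stats clear.
SELECT wait_type,
       waiting_tasks_count,
       wait_time_ms,
       max_wait_time_ms
FROM sys.dm_os_wait_stats
WHERE wait_type IN (N'RESOURCE_SEMAPHORE',
                    N'RESOURCE_SEMAPHORE_QUERY_COMPILE',
                    N'THREADPOOL')
  AND wait_time_ms > 0
ORDER BY wait_time_ms DESC;
```

An empty result is the good outcome. While THREADPOOL waits are actively happening, a normal connection may not get in to run this at all, which is part of why the server feels locked up.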

On a related note, 16% of us have had memory dumps recently. My old advice was to install the SSMS memory dump upload utility, but Microsoft shut that down – likely due to security issues around GDPR, since memory dumps can include PII. Before I write new advice there, I’m going to dig deeper into the data – like if the dumps are correlated to specific builds/versions – to improve my advice.

We still have priority boost turned on.

It’s hard for me to believe that Microsoft still exposes this as an option in SSMS 17.6. Users should be protected from themselves and from really bad Internet advice – this should be deprecated, burned, and pushed to the bottom of the ocean.

Here’s the real kicker, though: ten percent of us have a server with Priority Boost on.

Another way to think of it: when you’re in a user group session with 40 other people, 4 of them have Priority Boost on. Or maybe you, and 3 other people.
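To find out whether you are one of those four, check `sys.configurations`. This is a minimal sketch; the commented-out statements show one way to turn the setting off, and the change only takes effect after the SQL Server service is restarted.

```sql
-- Is Priority Boost configured or in use on this instance?
SELECT name,
       value,          -- configured value (1 = on)
       value_in_use    -- value currently in effect
FROM sys.configurations
WHERE name = N'priority boost';

-- To turn it off (uncomment to run; needs a service restart to take effect):
-- EXEC sp_configure 'show advanced options', 1;
-- RECONFIGURE;
-- EXEC sp_configure 'priority boost', 0;
-- RECONFIGURE;
```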

The exciting thing is that we can track what happens to wait stats as people turn that feature off, and then prove if it made things better or worse. (Over 20% of us have either auto-close or auto-shrink enabled on databases, too, but thankfully it’s confined to about 11% of our servers.)
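Auto-close and auto-shrink are just as easy to spot, since both are exposed as flags in `sys.databases`. A minimal sketch:

```sql
-- Databases with auto-close or auto-shrink enabled.
SELECT name,
       is_auto_close_on,
       is_auto_shrink_on
FROM sys.databases
WHERE is_auto_close_on = 1
   OR is_auto_shrink_on = 1
ORDER BY name;
```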

I’m barely scratching the surface here of what we’re learning. As the data grows, I’m really looking forward to showing users comparison data of how they rank related to other shops, how their database health stacks up, and the easy stuff they can do to get better.


2 Comments

What??? That prio boost wasn’t the turbo button the interwebz was claiming? 😉

Dagnabbit !

This is so interesting, thanks for sharing those details! I’d be personally curious about whether people are using Hekaton tables, and if so how this affects their overall server health (or if there’s no correlation).

This isn’t really related to performance or reliability, but I think it would also be neat to know if folks are using SQL Server on Linux (or how quickly that’s being adopted compared to SQL Server 2017 on Windows).