Announcing the SQL Server Theme Song Winners

We asked you to pick theme songs to play when SQL Server has issues, and holy cow, you sent in a ton of submissions. We couldn’t possibly pick just one in each category, so each of us picked our own winners – all of whom get a free training video class of their choice. (Folks who won in multiple categories really cleaned up – they got a free Everything Bundle to recognize their spectacular achievement.)

Best Song to Play During a Server Outage

- Beatles – A Hard Day’s Night – submitted by Karen Downing, picked by Angie

- Billy Joel – Pressure – submitted by Lori H, picked by Doug

- Megadeth – Sweating Bullets – submitted by Rob, picked by Tara

- R.E.M. – It’s the End of the World – submitted by Brian Berry, picked by Brent

- Talking Heads – Burning Down the House – submitted by Tim Burnett, picked by Jessica

Best Song to Illustrate Your Predecessor’s Skills

- Bee Gees – Jive Talkin – submitted by Mike S, picked by Brent

- Billy Joel – We Didn’t Start the Fire – submitted by Brian Berry, picked by Angie

- Cake – I Will Survive – submitted by Brandon, picked by Doug

- Gorillaz – Kids with Guns – submitted by Maxime Gauthier, picked by Jessica

- Nirvana – The Man Who Sold the World – submitted by Jared, picked by Tara

Best Song to Accompany an Index Rebuild

- Beatles – Come Together – submitted by Rob, picked by Jessica

- Jackson 5 – ABC – submitted by Matthew Darwin, picked by Brent

- Radiohead – Jigsaw Falling Into Place – submitted by Mike Miller, picked by Tara

- Red Army Choir – Korobeiniki (AKA the Tetris Theme Song) – submitted by Drew Furgiuele, picked by Doug

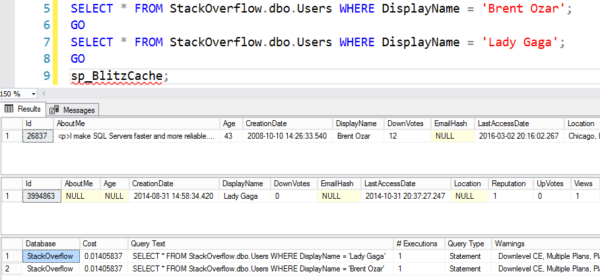

Best Song to Play When Examining a Slow Query

- Buster Puppet – Everybody Poops – submitted by Drew Furgiuele, picked by Jessica

- Daft Punk – Technologic – submitted by Christopher Stoll, picked by Brent

- The Ramones – I Wanna Be Sedated – submitted by Tony Fangman, picked by Tara

- U2 – I Still Haven’t Found What I’m Looking For – submitted by Andrey, picked by Angie and Doug

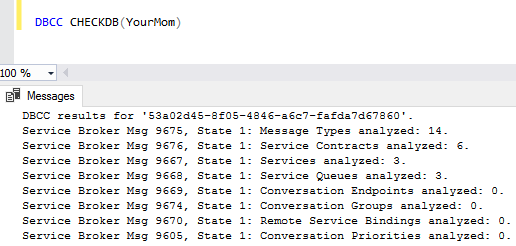

Best Song to Play When Corruption is Found

- AC/DC – Highway to Hell – submitted by Marcy Ashley-Selleck, picked by Jessica

- Disturbed – Down with the Sickness – submitted by Jeff Stebbens, picked by Tara

- Iggy Pop – Corruption – submitted by Matthew Darwin, picked by Erik

- Meat Loaf – Two Out of Three Ain’t Bad – submitted by James Chorlton, picked by Brent and Doug

- The Isley Brothers – It’s Too Late – submitted by Stefano Gioia, picked by Angie

Best Song for a SQL Server with 4GB RAM

- Guns N’ Roses – Patience – submitted by Andrey, picked by Brent

- Jungle Book – Bare Necessities – submitted by Ian, picked by Angie (Ian also deserves a round of applause for submitting only Disney song entries)

- Skee-Lo – I Wish – submitted by Jeff Stebbens, picked by Doug

- Skrillex – Kill Everybody – submitted by Christopher Stoll, picked by Jessica

- Twenty One Pilots – Stressed Out – submitted by Brad Mason, picked by Tara

Best Song to Play While a Cursor Runs

- Dead or Alive – You Spin Me Round – submitted by Brett C, picked by Brent

- Lamb Chop – The Song That Doesn’t End – submitted by Sebron, picked by Jessica and Tara

- Lionel Richie – All Night Long – submitted by Tim Burnett, picked by Doug

- OK Go – Here It Goes Again – submitted by Gareth, picked by Angie

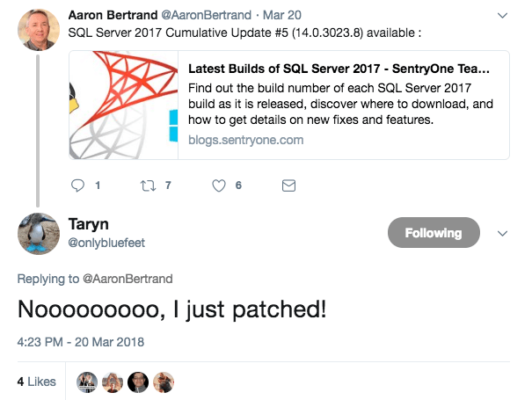

Best Song to Prepare for a SQL Server Service Pack

- Beatles – Don’t Let Me Down – submitted by Jeff York, picked by Tara

- Cage the Elephant – Ain’t No Rest for the Wicked – submitted by Brett C, picked by Angie

- Salt-N-Pepa – Push It – submitted by Sebron, picked by Brent

- Survivor – Eye of the Tiger (Rocky Theme Song) – submitted by Chris Kramer, picked by Jessica

- Willy Wonka Wondrous Boat Ride – submitted by Sean Killeen, picked by Doug

Best Overall Match Winner

The best song match has to be playing Meat Loaf’s Two Out of Three Ain’t Bad when corruption is found. For finding this incredible match, James Chorlton wins himself an Everything Bundle. Check out these lyrics:

Baby we can talk all night

But that ain’t getting us nowhere

I told you everything I possibly can

There’s nothing left inside of here

And maybe you can cry all night

But that’ll never change the way I feel

The snow is really piling up outside

I wish you wouldn’t make me leave here

I poured it on and I poured it out

I tried to show you just how much I care

I’m tired of words and I’m too hoarse to shout

But you’ve been cold to me so long

I’m crying icicles instead of tears

And all I can do is keep on telling you

I want you

I need you

But there ain’t no way

I’m ever gonna love you

Now don’t be sad

‘Cause two out of three ain’t bad

Now don’t be sad

‘Cause two out of three ain’t bad

You’ll never find your gold on a sandy beach

You’ll never drill for oil on a city street

I know you’re looking for a ruby

In a mountain of rocks

But there ain’t no Coupe de Ville hiding

At the bottom of a Cracker Jack box

I can’t lie

I can’t tell you that I’m something I’m not

No matter how I try

I’ll never be able to give you something

Something that I just haven’t got