Azure SQL Managed Instances Brain Dump on CPU, Memory, HA/DR, and Backups

Normally when we write blog posts, we try to explain something or tell a story. If you’re looking for a solid educational post, stop here, mark this one as read, and go on about your day.

This post is just a brain dump of unorganized notes from our experimenting with Azure SQL DB Managed Instances Preview. Buckle up.

Update 2018/05/05 – Microsoft’s Dimitri Furman shared how CPU and memory allocation works for Managed Instances.

CPU & memory configs

Let’s start with this: both 8 and 24-core MIs all have ~170GB RAM. (I haven’t deployed a 16-core VM.) When you provision a Managed Instance (MI), you choose between 8, 16, or 24-core VMs. Interestingly, they all appear to have 24 cores. I’m guessing Microsoft is keeping deployment simple for now.

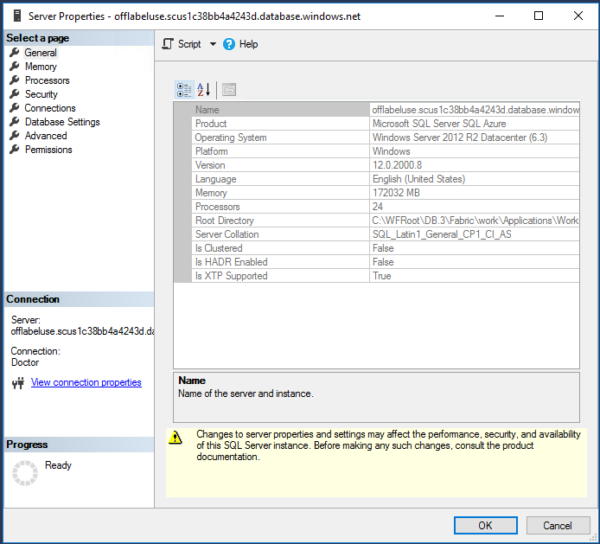

Screenshots for the 8 and 24 core MIs:

For the 8-core MI, here’s what sys.dm_os_schedulers looks like – it really does only have 8 online visible schedulers:

That’s an awful lot of hidden online schedulers – but note that they all still tie to the same parent_node_id and cpu_ids (0-7).

Yes, it still has a Dedicated Admin Connection (DAC), and yes, as of right now you can connect to it – and here’s where things get a little cool. Instead of just 1 query connected to the DAC, you can have at least 2. That’s new.

So how are they restricting us to 8, 16, or 24 cores? The conventional way would be affinity masking, but they did something different: if you go into Processors, only 8 cores show up as options under the 8-core MI. The core restriction is being enforced differently, and I’m guessing it has to do with these startup messages in the error log:

If you want to spelunk through error logs and see what’s new, here’s one Managed Instance error log, and here’s another.

In the opening server properties screenshots, the eagle-eyed amongst you will notice Win 2012R2 and SQL Server v12.0.2000.8. I wouldn’t put a whole lot of stock in these numbers right now – exact version numbers are starting to matter less here. More on that when I talk about feature switches.

You’ll also notice that Is Clustered = False, Is HADR Enabled = False. So with what kind of black magic are they replicating your data?

Enter the HADR Fabric.

Your Azure SQL Managed Instance consists of 5 servers:

This is why MIs have such tricky network requirements: this ain’t one server or service. Microsoft is creating, fixing, deleting, redeploying VMs to maintain the service. Together they make up a cluster, but this isn’t a Windows cluster. All kinds of new DMVs here:

Here’s the applications that show as running on these nodes:

You don’t see SQL Server here directly, and you might also remember that in the Managed Instance restore tutorial, you saw RestoreService performing restores for me.

Look a little closer at Worker.CL, and he’s hosting the databases:

In this screenshot, my Managed Instance has 4 user databases, and they’re represented by 4 GUIDs. Erik wrote about how you’ll need these for some of the DMVs.

If I scroll across sys.dm_hadr_fabric_partitions to the right, there are some interesting columns:

Remember, my user databases are near the bottom of this list. If target_replica_set_size = 1 and min_replica_set_size = 1, you might guess that only one node is hosting my databases. (Especially with partition_kind_desc = singleton.) That would be putting a lot of eggs in one basket, but it doesn’t appear to reflect how many nodes are actually hosting the database. Remember during the restore tutorial, I showed how something akin to Direct Seeding is running in the background.

Based on the MSDB tables, it looks like you’re getting log backups every 5 minutes:

Which takes me back to work. I’m working on getting sp_Blitz to be fully compatible with Managed Instances, and I gotta go fix the backups query. (sigh) The server_name reported in these DMVs doesn’t match things like SERVERPROPERTY(‘ServerName’). No rest for the wicked.

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

3 Comments. Leave new

Thanks for sharing Brent. I’m always dying to know how stuff works. With Azure however, it seems I’ll be dying without ever knowing 🙂

I know this is an old post but for anyone else finding it, managed instances no longer record their automated backups into msdb.dbo.backupset. I’ve checked with support who’ve confirmed with the product team.

Ha! Dang it, they’re trying to hide the truth from us. 😀