The 9 Letters That Get DBAs Fired

I’m proud to say that I’ve only lost one job in my life. I was a grocery store bagger when the store came under pressure to increase their percentage of minority workers. They laid off all of the Caucasian baggers, but I’m not bitter. I actually loved bagging groceries, and if I hadn’t been laid off, I’d still be the guy asking you “Paper or plastic?”

I probably didn’t deserve to lose that job, but I’ve deserved to lose others. I’ve just been lucky. Through my career, I’ve seen a lot of database administrators go through some dicey situations that cost them their jobs. Maybe not right away – but once these situations happen, it’s only a matter of time until management finds a legally defensible reason to swing the axe. These situations often involve just nine letters.

The First Three Letters That Get You Fired: RPO

Recovery Point Objective (RPO) measures how much data you’d lose if your server went down and you brought up the secondary server. It’s measured in time, not megabytes. The less time you have, the more frequently you have to get the data to a secondary location.

In theory, if you’re doing transaction log backups every 5 minutes to a network share, you’ve got a 5-minute RPO.

In theory, “in theory” and “in practice” are the same. In practice, they are different.

In practice, you’re unlucky, and your transaction log backups will fail before the engine itself does. I know this because it’s happened to me and my clients. In one case, the volume for backups filled up and the transaction log backups stopped working. Since the log backups didn’t run, the transaction logs didn’t clear, and they continued to grow. The Gremlin of Disaster arrived, sowing corruption in his wake. By the time the dust cleared, we’d lost over an hour of data – permanently.
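To put numbers on that story, here is a minimal Python sketch of the arithmetic. It assumes you can find the finish time of the last log backup that actually succeeded; the function names and the timeline are hypothetical, made up purely for illustration. The point is that your real exposure runs from the last good backup, not from the schedule.

```python
from datetime import datetime, timedelta

def current_exposure(last_good_log_backup: datetime, now: datetime) -> timedelta:
    """Data at risk: everything written since the last log backup that succeeded."""
    return now - last_good_log_backup

def rpo_breached(last_good_log_backup: datetime, now: datetime, rpo: timedelta) -> bool:
    """True when the data at risk exceeds the agreed RPO."""
    return current_exposure(last_good_log_backup, now) > rpo

# Hypothetical timeline: the backup volume fills up at 2:00, every later
# log backup fails, and the server dies at 3:10.
rpo = timedelta(minutes=5)
last_good = datetime(2024, 1, 1, 2, 0)
failure = datetime(2024, 1, 1, 3, 10)

exposure = current_exposure(last_good, failure)
print(exposure)                                # 1:10:00
print(rpo_breached(last_good, failure, rpo))   # True
```

A check like this is only useful if something runs it and yells at you between backups, which is exactly the monitoring that was missing in the story above.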

If you’re backing up to a local drive and the RAID controller fails, check out this helpful article. You’ll be needing it, because with a failed RAID controller, you can’t access any of the data on the local drives. Before you try pulling the hard drives out of the failed server and putting them into another server, check out this article. After all, you’re gambling – gambling that the RAID controller firmware matches between servers and that it’ll be able to read and rebuild the newly inserted drives. Guess wrong, and it will format the drives, thereby erasing your backups.

Savvy readers will detect a sense of acerbic humor in the post so far – even more biting than my usual pieces. Brace yourself, because it’s going to get worse.

The Next Three Letters: RTO

Recovery Time Objective (RTO) is how much time you’ve got to get your server’s act together. Some companies measure it from the time you learn about the problem; others measure it from the moment the service first becomes unavailable.

The clock’s starting point matters a lot. When things go wrong, SQL Server Agent might just email the failsafe operator – if you’ve gone to the trouble of setting one up and configuring alerting. Even then, you probably won’t notice the email because you’ll be drunk at the pub having a nutritious dinner with your lovely family. An hour later, when you realize that your cell phone is set on vibrate, you’ll panic, run to the car, fire up your laptop, and try connecting over your phone’s 1-bar internet connection. Should we start the clock when the server started failing, when the email was sent to you, when you saw the email, or when you finally connected to the VPN? If the business wants to start the clock when the server stops, you have to account for the meatbag reaction time, and with most of us, there’s a pretty long delay. (If you’re the kind of DBA who sets up rules to move automated alerts into a separate email folder, you’re off to a bad start.)
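Here is a small Python sketch of how much the starting point changes the number you report. The timeline and the event names are hypothetical; the only thing being illustrated is that the same outage yields very different "downtime" depending on which event starts the clock.

```python
from datetime import datetime

# Hypothetical incident timeline for one outage.
events = {
    "server_failed": datetime(2024, 1, 1, 19, 0),
    "alert_emailed": datetime(2024, 1, 1, 19, 2),
    "dba_saw_email": datetime(2024, 1, 1, 20, 5),
    "dba_on_vpn": datetime(2024, 1, 1, 20, 30),
}
service_restored = datetime(2024, 1, 1, 21, 30)

def downtime_minutes(clock_start: datetime, restored: datetime) -> int:
    """Whole minutes between the chosen clock start and service restoration."""
    return int((restored - clock_start).total_seconds() // 60)

# Measure the same outage from each possible starting point.
measured = {name: downtime_minutes(t, service_restored) for name, t in events.items()}
for name, minutes in measured.items():
    print(f"{name}: {minutes} min")
```

In this made-up example, the gap between the first and last starting point is 90 minutes of pure meatbag reaction time, and none of it involved touching the server.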

If our idea of disaster protection is restoring the databases onto another server, we might assume that the RTO just needs to cover the length of time to do a full restore. Not so fast, Speedy McPinto – here’s a few things you’ll need to do to get the server online:

- Provision enough space to do the restore – do you know how much space you need on each drive?

- Make sure it’s on the right version/build of SQL Server – sometimes we have to install service packs, cumulative updates, or hotfixes to get production to work right. There’s no guarantee our standby box has those same binaries.

- Get the sp_configure settings right – if production had a certain maxdop, for example, it might fail terribly on the new hardware.

- Get security working correctly – you did script out the logins and passwords, didn’t you?

- Configure TempDB correctly – I’ve seen people restore production databases onto dev environments where TempDB was just configured with a few gigabytes of storage, and it instantly started growing when handling production load. TempDB ran out of space, and the server went Pete Tong.

- Restore the database – and hope to hell it restores correctly the very first time.

The less time you have, the more tasks you have to perform ahead of time, and the more you have to document, test, and rehearse the steps you’re going to perform at the moment of disaster.
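The checklist above can be turned into a rough budget. This is a minimal Python sketch, and every duration in it is a hypothetical placeholder; the real numbers have to come from your own rehearsals. It adds up the steps, compares the total to the RTO, and then shows what happens when some steps are done ahead of time.

```python
from datetime import timedelta

# Hypothetical estimates in minutes; replace them with rehearsal timings.
RECOVERY_STEPS = [
    ("Provision disk space for the restore", 30),
    ("Match the SQL Server version/build", 45),
    ("Apply sp_configure settings", 10),
    ("Recreate logins and passwords", 15),
    ("Configure TempDB", 10),
    ("Restore the database", 90),
]

def total_recovery_time(steps) -> timedelta:
    """Sum the estimated minutes for every step still left to do."""
    return timedelta(minutes=sum(minutes for _, minutes in steps))

def fits_rto(steps, rto: timedelta) -> bool:
    """True when the remaining steps fit inside the RTO."""
    return total_recovery_time(steps) <= rto

rto = timedelta(hours=2)
print(total_recovery_time(RECOVERY_STEPS), fits_rto(RECOVERY_STEPS, rto))

# Steps finished before the disaster drop out of the clock entirely.
done_ahead_of_time = {
    "Provision disk space for the restore",
    "Match the SQL Server version/build",
    "Apply sp_configure settings",
    "Recreate logins and passwords",
    "Configure TempDB",
}
remaining = [step for step in RECOVERY_STEPS if step[0] not in done_ahead_of_time]
print(total_recovery_time(remaining), fits_rto(remaining, rto))
```

With these placeholder numbers, doing everything at the moment of disaster blows a two-hour RTO, while pre-staging everything except the restore fits inside it.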

The Most Important Three Letters: CYA

Right now, all of your users believe your RPO and RTO are zero. They believe you’ve got everything under control, and that when disaster strikes, you’re going to instantly bring up another server with no data loss whatsoever. We both know the truth, though – you spend your time at work checking blogs, not backups, so we’ve got some work to do.

You have to put it in writing – and that’s where our worksheet comes in:

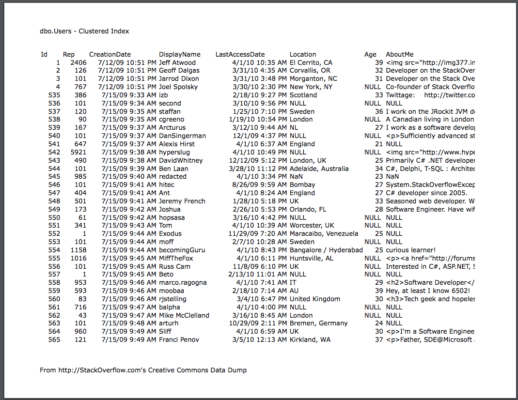

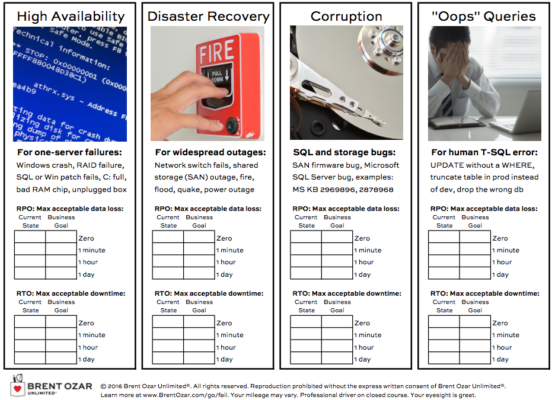

There are four columns: high availability, disaster recovery, corruption, and “oops” queries.

For each column, you have to define the maximum amount of data you’re willing to lose, and the amount of downtime you’re willing to have. Start by filling out the current state. (If you’re not familiar with calculating your current RPO/RTO, that’s where our DBA’s Guide to HA/DR class comes in.)

I know, you’re going to think the business says “ZERO!” but that’s where the next page of the worksheet comes in:

Down the left side, choose how much data loss you’re willing to tolerate. Across the top, choose your downtime. Where they meet, that’s the cost.
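The lookup itself is just a grid indexed by two choices. Here is a rough Python sketch of that idea; the row and column labels and the relative cost markers are placeholders I made up, not the values printed on the worksheet.

```python
# Placeholder options and costs; the real worksheet has its own values.
DATA_LOSS_OPTIONS = ["zero", "1 minute", "1 hour", "1 day"]   # down the left side
DOWNTIME_OPTIONS = ["zero", "1 minute", "1 hour", "1 day"]    # across the top

# COST_GRID[row][column]: more dollar signs means more expensive.
COST_GRID = [
    ["$$$$", "$$$$", "$$$", "$$$"],
    ["$$$$", "$$$", "$$$", "$$"],
    ["$$$", "$$$", "$$", "$"],
    ["$$$", "$$", "$", "$"],
]

def cost_for(data_loss: str, downtime: str) -> str:
    """Find the cell where the data loss row meets the downtime column."""
    row = DATA_LOSS_OPTIONS.index(data_loss)
    column = DOWNTIME_OPTIONS.index(downtime)
    return COST_GRID[row][column]

print(cost_for("zero", "zero"))      # $$$$
print(cost_for("1 hour", "1 day"))   # $
```

The shape is what matters: when the business picks "zero" and "zero", the grid answers with the most expensive cell on the page.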

When the business users understand the costs, they’re able to make better decisions about RPO and RTO. But it all starts with getting them in writing.

The HP DL580 G7 holds up to 2TB of memory, but even if you cheap out and only get 1TB of memory, you’ll pay under $50k for that memory. While that might sound expensive at first, keep in mind that SQL Server Enterprise Edition is roughly $30k per socket, so you’ll spend $120k just licensing the four CPU sockets in that DL580. The memory isn’t the biggest expense in that server – 1TB of memory is cheaper than the SQL Server licensing. If you need four CPU sockets worth of power, you’re probably going to be caching a great deal of your database in memory.

Database administrators think of SQL Server’s memory as the cache. That is completely and utterly wrong. The database is the persistence layer – the place things go where they need to be permanently saved somewhere.