New High Availability Planning Worksheet

7 Comments

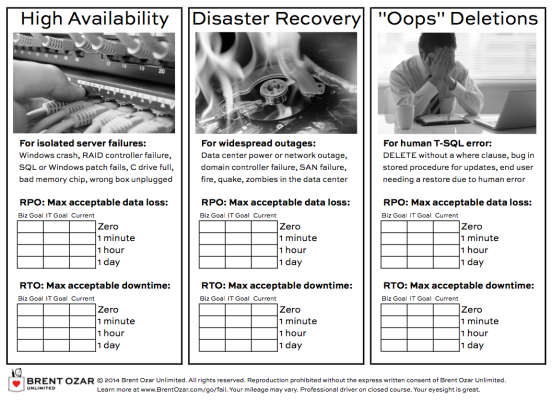

One of the questions we ask every new client is, “How much data is this application allowed to lose, and how long is it allowed to be down for?”

This leads to some really fun conversations.

The business users assume that the database will never lose data and cannot go down. The IT admins assume that the business understands all systems can lose data, and that the business will be okay with some data loss during a crash.

To get the business users and the IT team onto the same page, I had to build that page for them to get onto! And here it is:

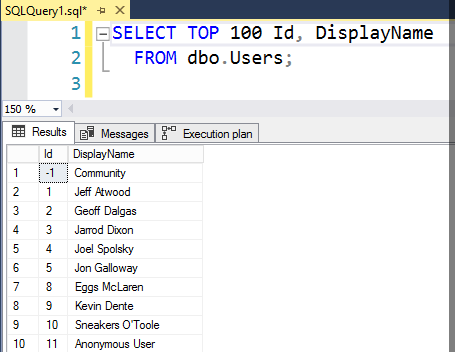

Step 1: the it team fills out the current state.

We start by filling out the “current” column for each of the three scenarios, documenting how much data the business could lose in the event of an outage, and how long the system could be down.

Say we’ve got a single physical standalone SQL Server, and we’re doing transaction log backups every hour to a network share. That means we could lose at least an hour’s worth of data, and be down for several hours (if not a day). To get the system back online, we would need to:

- Provision hardware and storage space

- Install the right version/edition of Windows, plus patches

- Install the right version/edition of SQL Server, plus patches

- Set up the same sp_configure options (maxdop, any trace flags, etc)

- Set up security (users, passwords)

- Restore the databases

Often I find that just getting everybody to agree on the “current” column risks can take an hour, and the business users are horrified by how much work is involved. It’s nobody’s fault – this is just the current state of the system.

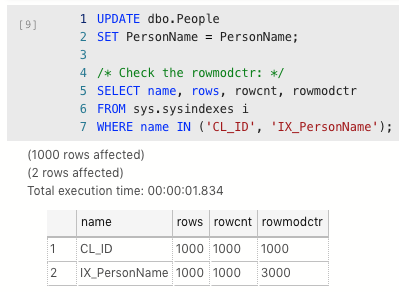

Step 2: The business users set a goal.

They’ll say, “We’re not allowed to lose any data and we’re never allowed to go down”, and that’s where page 2 of the worksheet comes in:

Down the left side, pick how much data we’re allowed to lose.

Across the top, pick how long we’re allowed to be down.

Where they meet, that’s a rough cost of the solution, and the list of options that can meet the business goals. These costs are wide ranges that give the business a rough idea of what it’ll take to achieve their objective – don’t get hung up on the exact dollar amounts. The business users just need a rough idea to help guide their choice.

I strongly, strongly encourage business users to pick one of those 4 categories, not something like 15 minutes or 4 hours. SQL Server’s high availability and disaster recovery methods line up really well with these 4 buckets, and if they choose 15 minutes, they’re not really doing me any favors – I’m going to need to design a 1-minute solution.

Step 3: The IT Team designs a solution to meet the business goals.

In our DBA training classes, we coach DBAs on:

- How to get real answers in writing from business users

- How to set the “IT Goal” column of this worksheet

- How to give the business users the right recommendation

- How to handle cost objections

- How to build a solution that meets the business users’ needs

When I was a DBA, that was the hardest part of my job. The business kept telling me, “You’re not allowed to lose data and you’re not allowed to go down, but you don’t have a budget.” Now, in our classes, we arm DBAs with worksheets and guides to go toe-to-toe with the business. You look like a pro because you get to leverage all these cool worksheets that we built for our own consulting projects.

You can grab this planning worksheet plus much more in our First Aid resources. Enjoy, and hope it makes your next HA/DR project easier!