Managing Oracle Performance with Statistics

4 Comments

Oracle maintains statistics about data stored on disk. The statistics and histograms help guide the optimizer during query plan compilation. These statistics are usually helpful. Sometimes the statistics are not helpful.

As data changes, the statistics collected by Oracle may become less accurate. If I’ve created a histogram on the column transaction_date, my histogram won’t know about data that’s been added since the histogram was last created or updated. Over time, that histogram becomes less helpful. DBAs can update statistics, but another problem can arise – the new statistics might be worse than the old statistics. DBAs need tools to manage stats and verify success before moving new statistics into production.

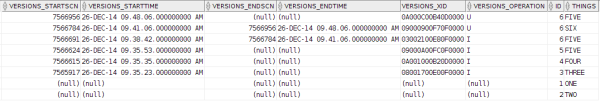

Historical Statistics

Oracle gives DBAs a powerful tool in managing statistics – historical statistics retention. By retaining historical copies of statistics, a DBA can ensure that there’s always a plan to back out changes that have caused a performance problem. You can view the current historical retention period by running:

|

1 |

SELECT dbms_stats.get_stats_history_retention() FROM dual; |

On my Oracle 12c install (12.1.0.2), the retention period is set to 31 days. Larger systems may want to set up smaller retention periods.

Changing the statistics retention is as easy as running:

|

1 |

EXEC dbms_stats.alter_stats_history_retention(5); |

Once you have identified the statistics that you want to restore, you can put them back in place using DBMS_STATS.RESTORE_TABLE_STATS:

|

1 2 3 4 5 6 |

EXEC dbms_stats.restore_table_stats ( ownname => 'SH', tabname => 'CUSTOMERS', as_of_timestamp => TO_DATE('2015-01-01 11:38', 'YYYY-MM-DD HH24:MI') ); |

Of course, you would need to know when statistics were last collected. Thankfully, you can review when statistics were collected with the DBA_TAB_STATS_HISTORY view.

Keep in mind that the historical statistics have to go somewhere. Monitor the size of the SYSAUX tablespace and adjust the retention period as needed. You may find that you don’t need all of those historical statistics.

Locking Statistics

Once you’ve found the good statistics, you probably don’t want to keep restoring them over and over again. The next step you can take is to lock the statistics in place. Statistics can be locked at the schema, table, or partition level. Once an object has been locked, anything depending on that object will be skipped during system wide statistics updates.

Locking the statistics on a table is as easy as:

|

1 |

EXEC dbms_stats.lock_table_stats('SH', 'CUSTOMERS'); |

If there were an index on the CUSTOMERS table that needed a statistics update, we can force Oracle to update statistics using the force flag:

|

1 2 3 4 5 |

EXEC dbms_stats.gather_index_stats( ownname => 'SH', indname => 'IX_CUST_FNAME', force => TRUE ); |

By locking some stats in place, we can make sure that important queries don’t slip off a good plan. Using dbms_stats.restore_table_stats makes it possible to restore known good stats. There has to be a way to know if your statistics are going to be a problem before you move them into production, right?

Pending Statistics

Some tables are more volatile than others. When data changes and statistics are re-analyzed it may be possible that data is sampled in a way that can cause problems. This is why we have the ability to lock statistics. In some cases, you may want to test changes to statistics before they go into production, rather than locking statistics in place or rolling back to your last known good statistics. In this case, you can create pending statistics.

To create pending statistics, the first step is to disable publishing statistics for a table:

|

1 |

EXEC dbms_stats.set_table_prefs('SH', 'SALES', 'PUBLISH', 'false'); |

Once publish is set to false, newly gathered statistics wiill be placed in a holding area rather than being immediately made available for use by Oracle. At this point, the statistics are present, but unusable. Pending statistics can be used by changing the optimizer_use_pending_statistics variable to TRUE.

|

1 2 |

ALTER SESSION SET optimizer_use_pending_statistics = TRUE; /* amazing query goes here */ |

Once it’s been determined that stats are good, they can be be published. DBAs have the option to publish all stats or just stats for a single table:

|

1 2 3 4 |

/* publish all stats */ EXEC dbms_stats.publish_pending_stats(NULL, NULL) ; /* publish single object stats */ EXEC dbms_stats.publish_pending_stats('SH', 'SALES') ; |

Or, if the statistics stink, you can delete them with the dbms_stats.delete_pending_stats procedure.

Exporting Statistics

There’s one other tool DBAs have for managing stats – exporting statistics. After creating a stats table using dbms_stats.create_stat_table, a DBA can export statistics using on of severalEXPORT_*_STATS stored procedures. Statistics can be exported for columns, indexes, schemas, and tables as well as several other database and system level statistics.

The EXPORT_*_STATS procedures will push data into the statistics table and that data can be exported to another Oracle system where an IMPORT_*_STATS procedure can be used to import stats to the other Oracle system. This can be important for development, testing, and staging environments where it may not be possible to restore a large volume of data, but developers need to assess how queries will run in the production environment.

Statistics Maintenance Doesn’t Have to be a Drag

DBAs have powerful tools in Oracle to make sure statistics don’t cause performance problems. By combining these tools, it’s possible to create a stable set of statistics that lead to predictable performance. To learn more about managing Oracle database statistics, head over to the Managing Optimizer Statistics: Basic Topics or refer to the DBMS_STATS documentation for reference material.

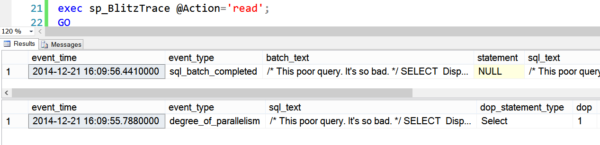

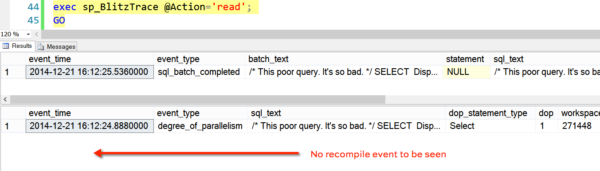

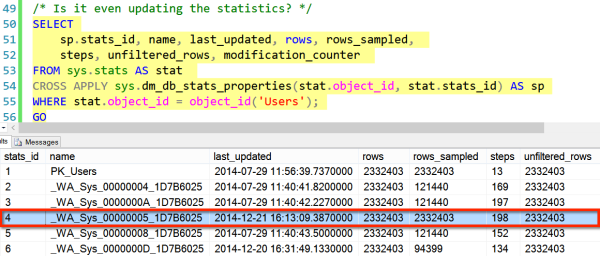

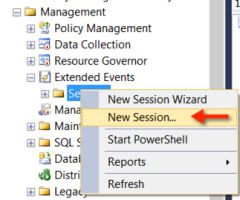

I used to click on “New Session Wizard”, simply because I had no idea what the difference was between the two options, and it was first in the list.

I used to click on “New Session Wizard”, simply because I had no idea what the difference was between the two options, and it was first in the list.