How to Do a Free SQL Server Health Check


Your boss or client came to you and said, “Give me a quick health check on this SQL Server.”

Step 1: Download & run sp_Blitz.

Go to our download page and get our First Responder Kit. There’s a bunch of scripts and white papers in there, but the one to start with is sp_Blitz.sql. Open that in SSMS, run the script, and it will install sp_Blitz in whatever database you’re in. (I usually put it in the master database, but you don’t have to – it works anywhere.)
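To confirm the install landed where you expected, a quick sanity check like the one below can help. It's a minimal sketch that assumes you installed into master, as described above; swap the database name if you picked a different one.

```sql
-- Assumes sp_Blitz was installed in master; change the USE line otherwise.
USE master;
GO

-- Returns one row if the procedure exists in the current database.
SELECT name, create_date, modify_date
FROM sys.procedures
WHERE name = N'sp_Blitz';
```

If the query comes back empty, you probably ran the install script while connected to a different database.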

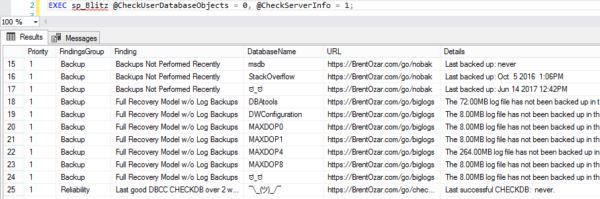

Then, run it with these options:

    EXEC sp_Blitz @CheckUserDatabaseObjects = 0, @CheckServerInfo = 1;

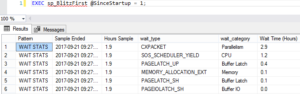

These two parameters give you a server-level check without looking inside databases (slowly) for things like heaps and triggers.

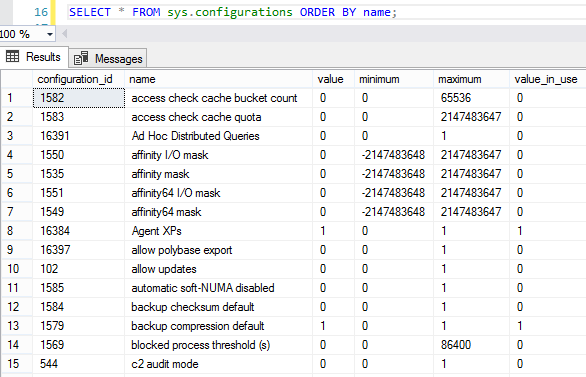

Here’s what the output looks like (click to zoom):

The results are prioritized:

- Priorities 1-50 – these are super important. Jump on ’em right away, lest you lose data or suffer huge performance issues. If you’re doing a health check for a client, you should probably include a quote for this work as part of your health check deliverables.

- Priorities 51-150 – solve these later as you get time.

- Priorities 151+ – mostly informational, FYI stuff. You may not even want to do anything about these, but knowing that the SQL Server is configured this way may prevent surprises later. You probably don’t want to include these in the health check.

Each check has a URL that you can copy/paste into a web browser for more details about what the warning means, and what work you’ll need to do in order to fix it.
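If you only want the first tier of priorities on screen, something like this may do it. It's a sketch, not the official recipe: it assumes your copy of sp_Blitz supports the @IgnorePrioritiesAbove parameter, and it leaves @CheckServerInfo off because the informational rows would be hidden by the filter anyway.

```sql
-- Server-level check that hides findings with a priority number above 50.
-- @IgnorePrioritiesAbove is assumed to be available in your version of sp_Blitz.
EXEC sp_Blitz
    @CheckUserDatabaseObjects = 0,
    @IgnorePrioritiesAbove = 50;
```

Bump the number to 150 if you also want the "solve these later" tier in the same result set.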

Step 2: Summarize the health risks.

sp_Blitz is written by DBAs, for DBAs. We try to make it as easy to consume as we can, but if you’re going to present the findings to end users or managers, you may need to spend more time explaining the results.

If you only have ten minutes, run sp_Blitz, copy/paste the results into Excel, and then start hiding or deleting the columns or rows that you don’t need management to see. For example, if you’re a consultant, you probably wanna delete the URL column so your clients don’t see our names all over it.

I know what you’re thinking: “But wait, Brent – you can’t be okay with that.” Sure I am! Your client has already hired you to do this, right? No sense in wasting time reinventing the wheel. That’s why we share this stuff as open source to begin with.
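If you'd rather trim the results before they ever hit Excel, one option is to have sp_Blitz write to a table and then query just the columns you want. This is a sketch under assumptions: the DBAtools database and the BlitzResults table name are hypothetical, the database needs to exist already, and the column names are what I'd expect from recent versions of sp_Blitz, so check your own table before relying on them.

```sql
-- Hypothetical destination: database DBAtools, table dbo.BlitzResults.
-- The database is assumed to exist already.
EXEC sp_Blitz
    @CheckUserDatabaseObjects = 0,
    @CheckServerInfo = 1,
    @OutputDatabaseName = N'DBAtools',
    @OutputSchemaName = N'dbo',
    @OutputTableName = N'BlitzResults';

-- Pull the findings without the URL column, skipping the FYI-only rows.
-- Column names are assumed; verify them against your output table.
SELECT Priority, FindingsGroup, Finding, DatabaseName, Details
FROM DBAtools.dbo.BlitzResults
WHERE Priority <= 150
ORDER BY Priority, FindingsGroup, Finding;
```

The output table keeps rows from every run, so if you run the check more than once, filter on the check date column as well to get only the latest pass.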

If you have an hour or two, add an executive summary in Word that explains:

- The risks that scare you the most (like what will happen if the SQL Server dies at 5PM on Friday)

- What actions you recommend in order to mitigate those risks (like how to configure better backups or corruption checking)

- How much time (or money) it’ll take for you to perform those actions

Your goal is to make it as easy as possible for the reader to say, “Wow, yeah, that scares me too, and we should definitely spend $X right now to get this taken care of.”

If you have a day, build a PowerPoint presentation, set up a meeting with the stakeholders, and then walk them through the findings. To see examples of that, check out the sample findings from our SQL Critical Care®.

And that’s it.

You don’t have to spend money or read a book or watch a video. Just go download sp_Blitz and get started.

