There’s a 6-Month Statute of Limitations on “The Last Person.”

“The last person must have set it up that way.”

“The last person wrote that code.”

“The last person just didn’t configure it right.”

You can use that excuse for 6 months.

For six months, you’re allowed to get up to speed on the company’s politics, the intricacies of the app, and the responsibilities of different departments. You’re allowed to prove yourself to your peers, building up social capital so that they’ll take your recommendations and run with ’em.

You can take your time figuring out whether the last person knew what they were doing or not. You should start by assuming that they did, and give them some benefit of the doubt because they were under time pressure just as you are now. If you’re generous and curious, you can even try to contact them through unofficial channels, offer to buy them lunch, and chat about their experiences at the company.

But after 6 months, the statute of limitations is up.

After that, you’re not allowed to blame “the last person” anymore.

The last person can no longer be prosecuted for their crimes against the database, and they are absolved of any guilt. It doesn’t matter who was originally responsible last year: the person responsible is now you.

So pull yourself up by the bootstraps, write up a health check with sp_Blitz, and start working through the problems. Put the correct backups and corruption checking in place. Schedule that outage. Apply the patches. Fix those linked servers using the SA login.

Because after 6 months, if you’re not fixing these problems, the clock is already ticking down to when you will be referred to as “the last person,” and your successors are going to roll their eyes when they talk about your inability to get the job done.

Just like you’ve been doing about “the last person” for over 6 months.

If your company is hiring, leave a comment. The rules:

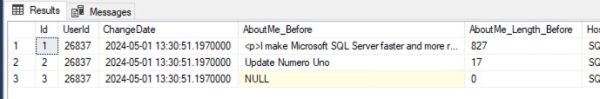

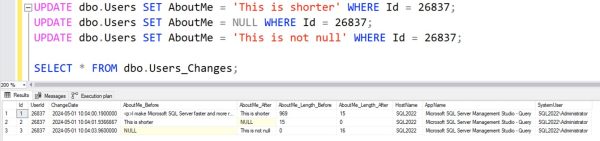

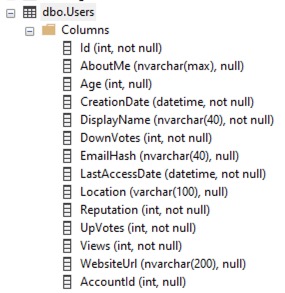

For example, in this week’s query challenge, the Users table has a lot of really hot columns that change constantly, like LastAccessDate, Reputation, DownVotes, UpVotes, and Views. I don’t want to log those changes at all, and I don’t want my logging to slow down the updates of those columns.
