R2 makes it easier for DBAs to move databases around from server to server in much the same way virtualization admins move guest OS’s around between physical hosts. In my last blog post about SQL Server 2008 R2, I explained that databases are becoming more like virtual servers, and today I’ll talk about what that means to DBAs.

R2: Bringing Sexy DAC

SQL Server 2008 R2 still has the same concept of databases, but it’s added a new level above databases called Data-Tier Applications, abbreviated DAC because the abbreviation DTA was already too widely-known. The DAC includes the database schema plus some server-level objects required in order to support the database, like logins.

The DAC does not include the data inside your database. For deployment best practices, you should have any necessary data (configuration tables, basic lookup tables) already scripted out as part of your deployment strategy. With the DAC approach, it makes sense to put these scripts inside the database as objects. For example, you might have a stored procedure called usp_deploy that populates all of the necessary configuration tables via insert statements.

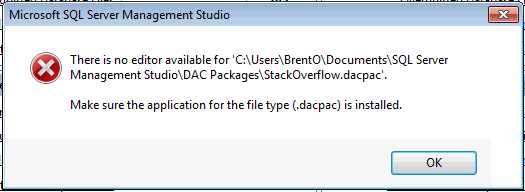

In R2’s SQL Server Management Studio, right-click on a database and click Tasks, Extract Data-Tier Application. This starts a wizard that will reverse-engineer your database schema, figure out what makes it tick, and package it in a way that you can redeploy it on another server. The information is saved in a file with a .dacpac extension, and if you try to open it with SQL Server Management Server, you’ll hit a stumbling block:

Microsoft’s taking an interesting approach here by drawing a line in the sand. The first hint pops up in Books Online:

“A DAC can be authored and built using a SQL Server Data-tier Application project in Microsoft Visual Studio. Current plans are to introduce the SQL Server Data-tier Application project type in a future beta release of Visual Studio 2010.”

Henceforth:

- SQL Server Management Studio is for production database administrators.

- Visual Studio is for database developers.

What DACs Mean for Database Administrators

If you never had a change control process and your developers just implemented changes willy-nilly in production, then the DAC approach won’t change anything. Your developers will do what they’ve always done.

If you’ve got change control processes in place, your developers probably hand you change scripts and tell you to implement them in production. If you’re ambitious, you audit their work as a sanity check to make sure their work will scale.

In the future, your developers may be creating and updating their database schema, stored procedures, functions, etc. inside Visual Studio, packaging them into DAC Packs, and handing them to you. In order for you to check their work, you’ll need to switch over into Visual Studio, or perhaps log onto their development SQL Servers to see the schema changes there. This is another nail in the coffin of the power of the DBA. From the nosql movement to the DBA-less cloud, DBAs need to be acutely aware of how things are changing.

This isn’t necessarily a bad thing; it’s worked great in the world of virtualization. As a VMware sysadmin, I didn’t need to understand what each virtual server was doing, whether it conformed to best practices, or even what was running on it. I managed them in large quantities with low overhead simply by moving things around based on the resources they needed. If a server’s needs grew, I could move them to a larger VMware host or a less-active host. I only purchased resources incrementally for the entire pool rather than micromanaging what each server needed. I didn’t do as good of a job as if I’d micromanaged each server’s configuration, but I was able to manage more servers with less manpower. Everything’s a tradeoff.

What if you, as a production DBA, could manage more instances and more databases with less time? What if, instead of looking at lines of T-SQL code, you were able to step back and see the bigger picture? What if you treated every application as a sealed, hands-off third-party app?

Perfect DAC User: Third-Party App Vendors

At Quest Software, we build multi-tier applications that store data in SQL Server databases. For example, Foglight Performance Analysis monitors the most offensive queries in your applications and gives you insight as to what developer you should shoot first.

We have basic requirements for our repository databases, but when we hand the product off to the customer, we’re relying on the honor system. We ask that you not deploy the repository with Auto-Close enabled, for example, but we have to trust that you know what that means and how to make sure it’s not enabled. If we deploy our repository as a DAC, however, we can build in policies that check for things at deployment time.

Another downside of storing our data in SQL Server is that you, the SQL Server DBA, tend to poke around. I’ve worked with DBAs who wanted to extend Quest’s database to store more stuff, or they wanted to store their utility queries in Spotlight’s work database. They say, “Hey, this QuestWorkDatabase is on all of my servers – I’ll just stash my queries in here and nobody will notice.” Next thing you know, they’re storing tables with data in there too, and then it’s only a matter of time until they break our schema.

I would love to deliver our repository as a sealed, hands-off appliance database that the DBA couldn’t break. Unfortunately, though, Books Online says:

“After deployment, the database is managed like any other database. Configuration of the database is done using common mechanisms such as the ALTER DATABASE Transact-SQL statement, the database management dialogs in Management Studio, or using the SQL Server Management Objects in the SQL Server PowerShell provider.”

The vendor in me says, “Damn! The DBAs can still hose up our schema.”

The DBA in me says, “Yay! I can still add indexes and fix bad code in vendor products!”

Next Up: DACs and The Cloud

What DACs don’t support gives us a clue about where database technology is going, and I’ll talk about that in my next SQL Server 2008 R2 blog post.

20 Comments. Leave new

Jeff Atwood would love that screenshot!

Stack Over Flow defeats SQL Server, like this post about google

Hahahaha, well, sorta. I use the StackOverflow Creative Commons database export for a lot of my testing because it’s such a fun data set to play with.

DAC? Seriously? Poor acronym choice. They already have a DAC in SQL Server; Dedicated Administrator Connection. So, now when talking about either DAC I will have to provide context, which kind of defeats the purpose of a TLA.

And as an audiophile, I automatically think Digital Audio Converter.

Have you ever used Polyserve?

Not personally, but I’ve talked to a few Quest Software customers who like it quite a bit. Polyserve gives you flexibility at the instance level, but not the database level, and requires shared storage across all of the cluster nodes. The new SQL Server Utility doesn’t have that constraint. They both have their pros and cons.

I heard encrypted procs are not included in DAC? Is it true? Have you tried to add encrypted proc to DAC?

Correct, encrypted stored procs are not supported right now in DACs.

I just found this, so thanks for the info Brent.

What is little bit confused, is the acronym DAC (DB Admin Conn) = DAC (Data-Tier Appl) – really it can be error when you discuss about DAC, and now we must be clear during discussions:

DAC – DB Admin Conn (SQL Server 2005 & 2008)

DAC – Data-Tier Appl (SQL Server 2008 R2).

…and I agree that new feature are sexy in R2, including the DAC (Data Tier Appl)!!! – LoL.

This appears to help with multiple instances of a database but i dont understand why it gets compared to Virtual Server management. For a while I have used DNS aliases for High profile databases so i can move them relatively quickly and without updating every application in the firm. How does the DAC make changing the host SQL server easier ?

JAC – good question. The DAC makes changing the host SQL Server easier because all of the objects required for the database live inside the DAC. With traditional SQL Server databases, we had objects outside the database – things like logins, linked servers, DTS packages, Agent jobs, and so forth. The DAC is a self-contained unit so it’s much easier to move them around from server to server.

“The DAC does not include the data inside your database. For deployment best practices, you should have any necessary data (configuration tables, basic lookup tables) already scripted out as part of your deployment strategy.”

Then how is one supposed to use this tool to re-deploy a database already in production? How is one supposed to use this tool to update a database already in production? Most of our development involves changing existing databases not deploying new ones. Are you supposed to create scripts to move 10 years worth of data? When you move a virtual machine you move everything on it, i.e. all the installed applications all the RDBMSs and all the data in the databases on those instances. With this dacpac, it seems that when you move your database, you can’t take the data with you so what good is it?

“The DAC does not include the data inside your database. For deployment best practices, you should have any necessary data (configuration tables, basic lookup tables) already scripted out as part of your deployment strategy.”

As Edward above quoted, How would data be deployed along with all the tables,prods,indexes using DAC?!

Is it possible to move data as well in the deployement?? or maybe there is an alternative to do that??

Ibrahim – how would you handle data conflicts – for example, if rows were present in production but not in the newly deployed copy?

I had the very same confussion the other week. Virtualization Architect spoke to me about SQL DAC. Now being a DBA I automatically thought of the Dedicated Administrator Connector. Then when he went on about deployments and builds thought hey looks like we are talking two different languages.

Do you have any recommendations on automated SQL Builds with Virtual Machine Manager. This was what the VA was trying to discuss with me. About template builds for SQL Servers.