Free SQL Server Training Next Week at GroupBy


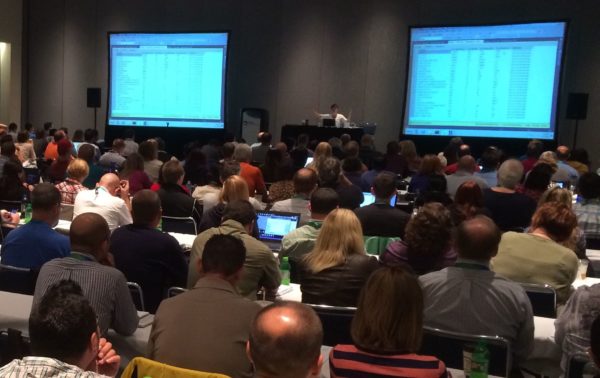

It’s time for another day of free training for the community, by the community. Here’s the lineup you voted in for next Friday’s free GroupBy.org conference:

- 8:00 AM Eastern – Not just polish – How good code also runs faster by Daniel Hutmacher

- 9:15 AM – The Perfect Index by Arthur Daniels

- 10:30 AM – Be My Azure DBA (DSA) by Paul Andrew

- 11:45 AM – T-SQL Tools: Simplicity for Synchronizing Changes by Martin Perez

- 1:00 PM – Make Your Own SQL Server Queries Go Faster by John Sterrett

- 2:15 PM – An introduction to partitioning by dbafromthecold

Register now to watch live for free. If you can't make it, no worries: the sessions will be recorded, and you can watch past sessions for free.
