What’s Different About SQL Server in Cloud VMs?

When you start running SQL Server in cloud VMs – whether it’s Amazon EC2, Google Compute Engine, or Microsoft Azure VMs – there are a few things you need to treat differently than on-premises virtual machines.

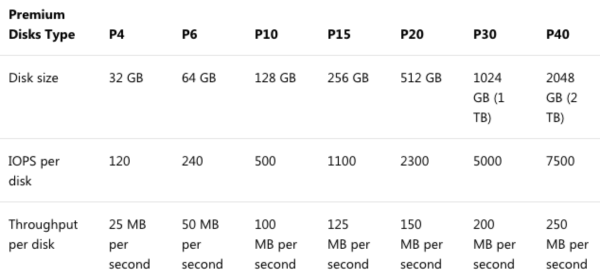

Fast shared storage is really expensive – and still slow. If you’re used to fancypants flash storage on-premises, you’re going to be bitterly disappointed by the “fast” storage in the cloud. Take Azure “Premium” Storage:

For about $260 per month, a P40 premium disk gets you 2TB of space with 7,500 IOPs and 250 MB per second – that’s about 1/10th of the IOPs and half the speed of a $300 2TB SSD. It’s not just Azure, either – everybody’s cloud storage is expensive and slow for the price.

So using local SSDs is unheard of in on-premises VMs, but common in the cloud. Ask your on-premises VMware or Hyper-V admin to give you 1TB of local SSD for TempDB in one of your guests, and they’ll look at you like you’re crazy. “If I do that, I can’t vMotion a guest from host to host! That makes my maintenance terrible!” In the cloud, it’s called ephemeral storage, and it’s so insanely fast (compared to the shared storage) that it’s hard to ignore. Not necessarily smart to use for local databases without a whole lot of planning and protection – but a slam-dunk no-brainer for TempDB.

Be ready to fix bad code with hardware. It’s so much easier in the cloud to just say, “Throw another 8 cores in there,” or “Gimme another 64GB RAM,” or “We’re hammering TempDB, and we really need something with faster latency for that volume.” On-premises, these things take planning and coordination between teams. In the cloud, it’s only a budget question: if the manager is willing to pay more, then you can have more in a matter of minutes. But in order to make that change, you really want to stand up a new VM with the power you need, and then fail over to it, which means…

Start with mirroring or Availability Groups. On-premises, you might be able to skate by with just a single SQL Server. You figure you hardly ever change CPU/memory/storage on an existing VM, so why bother planning for that? Up in the cloud, you’ll be doing it more often – and having your application’s connection strings already set up for the database mirroring failover partner or the Always On Availability Groups listener means you’ll be able to make these changes with less work required from your application teams.

Disaster recovery on demand is cheaper – but not faster. Instead of having a bunch of idle high-powered hardware, you can start with either no VMs, or a small VM in your DR data center. When disaster strikes, you can spin up VMs, restore your backups, and go live. However, you still need a checklist for everything that isn’t included in your backups: think trace flags, specialized settings, logins (since you’re probably not planning on restoring the master database), linked servers, etc. Thing is, people don’t do that. They think they’ll postpone the planning until the disaster strikes – at which point they’re fumbling around building servers from scratch and guessing about their configuration, things you would have already taken care of if you’d have budgeted the hardware (or used something like VMware SRM to sync VMs between data centers.)

You can save money by making long term commitments. I talked a lot about flexibility above, the ability to slide your VM sizing dials around at any time, but with Amazon and Azure, you can save a really good chunk of money by reserving your instance sizes for 1-3 years. I tell clients to use on-demand instances for the first few months to figure out how performance is going to settle out, and then after 3 months, have a discussion about maybe sticking with a set of instance sizes by reserving them for a year. The reservations aren’t tied to specific VMs, either – you can pass sizes around between departments. (This is one area where Google has everybody beat – their sustained use discounts just kick in automatically over time, no commitment required, but if you’d like to make a commitment, they have discounts for that too.)

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

7 Comments. Leave new

I’m a bit confused by “So using local SSDs is unheard of in on-premises VMs, but common in the cloud.” You say cloud storage is slow and then say SSD usage is common in the cloud. Can you please clarify what you mean by this? Do you mean: They use SSD but it’s slower for customers because it’s shared?

Pete – sorry, *shared* cloud storage is slow, and local (ephemeral) SSD is fast.

Or, you could get a bunch of disks added to your Azure VM, create a storage pool for said disks and then you get more IOPS / MBs, but this is then shared across all the disks and then you might hit the bottleneck of either network throughput or limitation of the VM on IOPS

Nice one Bren Tozar! Been following your work and I really appreciate the content, which is cover over here, Fantastic.

One thing which isn’t as well advertised in Azure is that you not only have to contend with the storage limits but also the storage throttling imposed by your VM size. You might be able to attach multiple SSDs to a VM but you will still be limited on the VM.

E.g if you have a DS4_v3 you can attach 2 P30 disks which would in theory give you 10,000 IOPS and 400 MB/s. But you will actually get 6,000 IOPS and 93 MB/s (If you have caching on or off) and 8,000 IOPS and 64 MB/s if caching is enabled.

Details on the VM throttling limits can be found here:

https://docs.microsoft.com/en-us/azure/virtual-machines/windows/sizes-general

I had issues with this about a year ago. I wish I had this info instead of trying to drag it out of Azure support.

With the exception of ephemeral drives, most cloud providers replicate your drives. A fairer comparison would be to assume you were using Raid 1 and double your on-premises storage costs. Even taking that into account, cloud storage is still astronomically more expensive than on-premises storage.