How to Remove Times from Dates in SQL Server

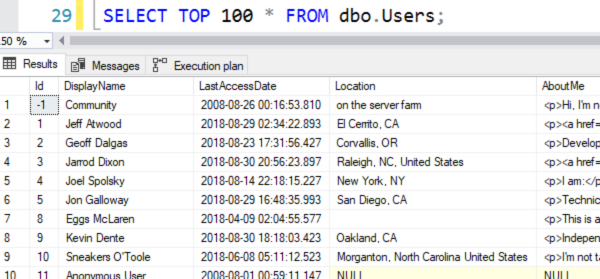

Say that you’ve got a table with a datetime column in it, like the LastAccessDate column in the Stack Overflow Users table:

And let’s say you wanna find the users who last accessed the site on September 9, 2018 – regardless of the time. Here are a few ways to do it:

Transact-SQL

|

1 2 3 4 5 6 7 8 9 10 11 12 |

SELECT * FROM dbo.Users WHERE LastAccessDate >= '2018-09-02' AND LastAccessDate < '2018-09-03'; SELECT * FROM dbo.Users WHERE LastAccessDate BETWEEN '2018-09-02' AND '2018-09-02 23:59:59'; SELECT * FROM dbo.Users WHERE CAST(LastAccessDate AS DATE) = '2018-09-02'; |

Here are their actual execution plans. I’m using SQL Server 2019, with 2019 compatibility level, and the 50GB StackOverflow2013 database.

The first two versions of the query are effectively the same, both doing a table scan.

When I CASTed the LastAccessDate as a DATE column, SQL Server did use an index on LastAccessDate. At first glance, that might seem like that’s a good thing because generally speaking, you want to use indexes to make your queries go faster.

However, there’s a dark side: when you check the output of SET STATISTICS IO ON, the third query is actually reading more pages than the first two. The combination of the index seek + key lookup is bad when we’re dealing with this many rows:

The problem is that when you CAST something as another data type, SQL Server’s estimates about the query’s output may not be as accurate. Look more closely at the estimated numbers of rows of output for each of the queries:

in the top two, SQL Server brought back 18,614 of an estimated 19,624 rows. That’s a pretty doggone good estimation!

In the last one with CAST, SQL Server only expected 6,364 rows. Because of that somewhat low estimate, it thought an index seek + key lookup would be more efficient for less rows, so it used the index, and ended up working too hard, doing too many logical reads.

You usually want accurate estimates,

and CASTing usually won’t get you there.

There are always edge cases where bad estimates deliver faster query results, but generally speaking, if you want to strip off times, you’re better off with one of these two approaches:

Transact-SQL

|

1 2 3 4 5 6 7 8 |

SELECT * FROM dbo.Users WHERE LastAccessDate >= '2018-09-02' AND LastAccessDate < '2018-09-03'; SELECT * FROM dbo.Users WHERE LastAccessDate BETWEEN '2018-09-02' AND '2018-09-02 23:59:59'; |

It’s more work on your part than simply CASTing the table’s contents as a date, but your work will be rewarded with more accurate estimates on SQL Server’s part, and that’ll lead to improvements throughout your query plan as you join to more and more tables.

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

34 Comments. Leave new

As ever, a great exegesis!

FWIW, we have found it useful to add persisted computed DATE-type columns for (nearly all) corresponding DATETIME/DATETIME2 columns (and, mostly, index them). We then choose on a query-by-query basis whether to use the ‘full’ DATETIME column or (more often) the DATE column as appropriate (although of course this only works when you can modify the schema!).

I think you mmean on September 2

Proposed Title: “How to Remove Times from Dates in SQL Server — DON’T! Why Explicit when you can Implicit”

Plus, sargability.

Great light weight and quick summary, I’ve shared this to our BI team as they deal with this a lot. Hopefully you’ll see some new subs coming in 🙂

The second example can fail on edge case where the date/time is in the second between 23:59:59 and midnight.

And for extra pedantic points, the very last time of day for a DATETIME2 column is 23:59:59.997 – if you end it with .999 it will be rounded up to the start of the next day, since the type is only accurate to 3 1/3 ms…

When you are getting a large number of values you can hit things like this (for my second observation, you can bring in a whole day’s data that you did NOT want, depending on how the system works…)

Thanks Parker, exactly what I came here to mention. Great article as always though Brent!

Parker,

I believe you’re referring to DATETIME, not DATETIME2. DATETIME is accurate down to 3ms, whereas DATETIME2 is accurate to 100ns. The very last time of day for a DATETIME2 is 23:59:59.9999999.

https://docs.microsoft.com/en-us/sql/t-sql/data-types/datetime-transact-sql?view=sql-server-ver15

https://docs.microsoft.com/en-us/sql/t-sql/data-types/datetime2-transact-sql?view=sql-server-ver15

You are correct – I had the type wrong! Many thanks for the catch!

Although, with DATETIME2, you can specify the accuracy if you want to – by default you get the effect of declaring DATETIME2(7), put you can use less precise values if needed.

Looks like the second example would miss the dates with fractional seconds between 2018-09-02 23:59:59 and 2018-09-03 00:00:00?

In approximately 8 years and hundreds of millions of rows, I’ve seen this happen exactly once. Yes, it’s rare but it happens. That’s why I always use Brent’s first query structure. And UTC for all DATETIME values – time zones are evil.

What about using something like:

SELECT *

FROM dbo.Users

WHERE DATEDIFF(day, LastAccessDate, ‘2018-09-02’) = 0

At least in my local database where I substituted with a different table and column, I ended up with the exact same plan and stats as your winning examples.

When I need to strip the time I do the following which seems to be performant, but a little hard to read:

SELECT DATEADD(day, DATEDIFF(day, 0, GETDATE()), 0)

Thoughts?

Conditions like that will all but guarantee you won’t be seeking values in an index. Avoid them if you can.

i always put 00:00:00.000 and 23:59:59.999 as the start and end times.

In your example , if the date in the row was 2018-09-02 23:59:59.807 for example, the row would get skipped and not appear in the result set.

Farrell –

If you are using DATETIME (not DATETIME2 as I originally said above) then the 23:59:59.999 will get rounded up to the start of the next day, since DATETIME is only accurate to 3 1/3 milliseconds.

You will only ever see DATETIME values ending with the digits 0, 3, and 7 for this reason – everything else will get rounded to one of those!

So, the last DATETIME value in a day will have a time component of 23:59:59.997 – and this has messed up comparisons beyond counting!

I’m confused. I mean, I get the explanation but I see that the first couple of queries both had query costs of 39% from the batch, and the last one only had 22% – so doesn’t that mean that the last query is better in terms of performance than the first two? What am I missing here?

Zohar – oh, I have sad news. That percentage is the estimated cost, not the actual – you can’t rely on that for performance tuning. To learn more about why, watch this class: https://www.brentozar.com/go/tunequeries

Thanks! that makes total sense now. Well, I guess you could say that today wasn’t a total loss – I’ve learned something new today!

Zohar – that’s a fantastic attitude! Love it.

I can second, third and fourth that! Those percentages almost seem designed to catch out the unwary developer. Perhaps they should say “ESTIMATED query cost”.

@Julian Yeah, I totally agree. it should absolutely say “Estimated query cost”. It’s even more confusing sinec you can choose to display either the estimated query plan or the actual query plan – which led me to assume that the query cost is either estimated or actual based on the plan you choose to display – and I’ll bet I’m not the only one…

@Brent Ozar

It would be interesting to hear your thoughts of

DATEADD(DAY, DATEDIFF(DAY, 0, dateTimeValue))and how that compares to the CAST approach.

Steve – the cool part about my blog posts is that I always use totally open databases and techniques. That way, when you’ve got ideas or questions, you can try the technique yourself and see how the results compare to what’s in the post. Give ‘er a shot – it’s the best way to learn!

I spend a good deal of time and effort trying to educate developers to match the data types in code with the data types in the database, and if there is a cast or convert, don’t put it on the database column.

SELECT *

FROM dbo.Users

WHERE LastAccessDate >= cast(‘2018-09-02’ as datetime)

AND LastAccessDate < cast('2018-09-03' as datetime);

war stories – once fixed a 2Tb table scan for a Bank that was threatening their risk reporting compliance deadline (with a potentially enormous fine) like this :-). It’s just a small fix – until it isn’t

Stupid question, but why does do the third query an index seek? I was always told, not to use a function (as the CAST) on the table side, because it kills the sargability of the query and indexes could not longer be seeked (only scanned).

Was this one of the changes in SQL 2019 (that I would have missed in this case)?

Hi Thomas, as I understand it, this is actually something that’s been around since the DATE data type was introduced back in SQL Server 2008.

SQL Server seems to be using a neat little short cut around the DATETIME and DATE data types.

I think (and someone please feel free to educate me if I’m wrong here!) DATETIME values are stored using a binary format where the upper half of the binary value is the number of day boundaries passed since the lowest date the data type supports. The DATE data type uses the same format, so SQL Server can very quickly riffle through an index of sorted DATETIME values matching them based on a DATE since the index will hold all the DATETIME values for a given DATE value in a single contiguous chunk where they all start with the same bytes for a single date value.

Query Optimizer will thus decide that its fastest option to do that is a seek + range scan on an index where the DATETIME values are first in the index key columns, then JOIN back to a covering nonclustered index, or the clustered index/heap to get any residual columns from the table to satisfy the query.

It looks like this is the case for DATE and DATETIME2, but not for the original DATETIME data type:

SELECT CAST(CAST(‘2019-01-01’ AS DATE) AS VARBINARY(MAX)) –result is 0x233F0B

SELECT CAST(CAST(‘2019-01-01 00:00’ AS DATETIME2) AS VARBINARY(MAX)) –result is 0x070000000000233F0B

SELECT CAST(CAST(‘2019-01-01 00:00’ AS DATETIME) AS VARBINARY(MAX)) –result is 0x0000A9C800000000

That being said, if the execution engine “knows” how to convert between DATE and DATETIME, it can still use indexes when joining the two data types. It will just convert the data on the fly as it’s doing the comparison.

What’s odd about this is that it’s almost like the thing that the optimiser does to turn the CAST/CONVERT into a seekable range on temporal types (which I believe didn’t happen in much earlier versions) gives it a plan with an artificially low cost estimate — all three queries could be executed via a seek and key lookup, but for some reason the third one doesn’t decide that a CI scan would be better (the reason being “Good Enough Plan Found” in my replication of this on SO2010).

The CAST/CONVERT gets “rewritten” as a > and = and = and < for semantic equivalence. I expect there's a very good (possibly very obvious) reason for that that I may be missing.

I’ve tried all these queries on my StackOverFlow database:

select * from dbo.Users where LastAccessDate >= cast(‘2018-09-02’ as datetime) and LastAccessDate = ‘2018-09-02’ and LastAccessDate < '2018-09-03';

select * from dbo.Users where LastAccessDate between '2018-09-02' and '2018-09-02 23:59:59';

select * from dbo.Users where cast(LastAccessDate as date) = '2018-09-02';

select * from dbo.Users where datediff(day, LastAccessDate, '2018-09-02') = 0;

select * from dbo.Users where dateadd(day, datediff(day, 0, LastAccessDate), 0) = '2018-09-02';

The two last ones fare a lot worse than the top 3. Number 4 is somewhere in between.

(Warnings: residual I/O: 9.737.247 rows read)

Interesting how SQL can estimate a seek on DATE part of the DATETIME index? To do any estimation it should create 2 different statistics but I doubt that it is doing it. So it some kind of a blind guess estimation?

To find out, run DBCC SHOW_STATISTICS and look at the stats on the index on the date/time field. For more details on that, check out my Fundamentals of Index Tuning class.

I think this way is better. I use for SSRS, as by default it passed date instead of datetime when you click on the calendar. don’t use between.

WHERE LastAccessDate >= ‘2018-09-02’

AND LastAccessDate = @StartDate

AND LastAccessDate < dateadd(dd,1,@EndDate);

hmm.it modified what I previously typed. let’s try again… I think this way is better. I use for SSRS, as by default it passed date instead of datetime when you click on the calendar. don’t use between.

WHERE LastAccessDate >= ‘2018-09-02’

AND LastAccessDate = @StartDate

AND LastAccessDate < dateadd(dd,1,@EndDate);

what the heck? it post differently from what I typed. some kind of auto correct?

Nah, just HTML encoding.