We Need to Talk About the Warnings In Your Query Plans.

SQL Server, thanks for coming today. Have a seat.

Yes, this might seem odd – there are a lot of us here.

And yes, we are locking the door behind you.

We all care about you – all of us care very deeply – but we need to talk about some warning signs. Specifically, the warning signs you’ve been putting in query plans.

You’ve been exaggerating things that aren’t a problem, and it needs to stop. We’ve already had an intervention about your false nested loop warnings, but your behavior has been getting worse.

Type conversion warnings are crying wolf.

In the Stack Overflow database, run this simple query:

Transact-SQL

|

1 2 3 |

SELECT CAST(Id AS VARCHAR(20)) FROM dbo.Users WHERE Id = 26837; |

We’re fetching just one row from the Users table, and Id is the clustered index. SQL Server dive-bombs directly to one row, pulls it out, and we’re done here. But look what the query plan shows:

Uh, no, SQL Server, the cast in a select – the data going out the door after the row has already been found – will not affect the cardinality estimate. I realize that you’re using the wishy-washy “may affect” language, but for that matter, you could say that Erik may turn down a free glass of Lagavulin. You’re welcome to keep trying, but I haven’t been able to get it to happen. (Disclaimer: I have not really been trying.)

“Excessive Grants” is a part-time Chicken Little, too.

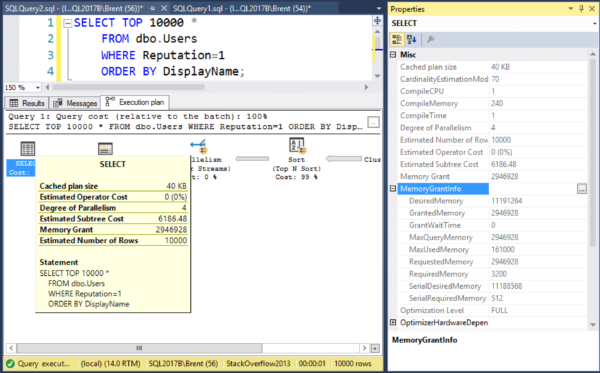

Let’s take this query plan. See the terrible yellow bang on the SELECT operator? Hover your mouse over it to see what it’s whining about:

It’s screaming that we have an “Excessive Grant, which may impact the reliability.”

The memory grant is 10MB.

On a server with 16GB of RAM.

There is no chance, barring the entire population of Earth trying to run this query at the same time on this server, that this query is going to impact the reliability of this server. Yes, I understand that only 296KB of the 10MB was used, and that from a percentage standpoint, that might seem like a big deal – but but we’re only talking 9.7MB of extra memory grant here.

Whereas this next query has no warning signs whatsoever, but…

But check out the metrics:

- Desired memory: 11GB

- Granted memory: 3GB

- Used memory: 161MB

- Wasted memory: 2.9GB every time this query runs

But there’s no warning! This is where memory grants can actually impact reliability, causing resource_semaphore waits, and this is the kind of query we need to be alerted about.

SQL Server, we know your heart is in the right place. We don’t have a problem with you – just some of your behaviors. We don’t want to get to the point where we start just ignoring your warning signs in query plans altogether because they’re so unreliable.

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

11 Comments. Leave new

SQL Server should just calculate ‘Wasted memory’ as a percentage of ‘Total Server memory’ and use that to throw warnings.

If I had a nickel for the time spent trying to fix “WARNING TYPE CONVERSION MAY AFFECT CARDINALITY ESTIMATE” on something like CONVERT(varchar(20), ID … well I’d have a handful of nickels. I’m not amused that I’ve wasted time on that warning and I will now treat that “may affect” warning with the attention is deserves (very little). Þakka þér fyrir Brent.

Thank you for that.

I was spending some time looking at these, wondering why is was getting the CardinalityEstimate warning.

Unfortunately, the reason I was looking at it is because I was told about warnings by sp_BlitzCache and used the query here: https://www.brentozar.com/blitzcache/query-plan-warnings/

It would probably be good if the query given there filtered out these useless warnings, or barring that at least if the page mentioned that some of these warnings may be misleading and gave us specific information on it like this page does. I hope these are the only two bad warnings. 🙂

I assume that for the CardinalityEstimate warning, if the type conversion is used to filter the results somehow, then that warning would be valid, but when simply displaying the end result, or possibly grouping, it would not.

Dang it. I hate when I make typos, and then can’t correct them. 🙁

I usually take care of the big warnings first (missing index, tempdb spills, horrible parallel joins etc). But I came here today because I saw this warning while prototyping something new and wondered whether I should dig deeper, or ignore it. I was scratching my head wondering how a SELECT CAST could affect cardinality.

Now I know. Thanks!

Hello,

when you say “Uh, no, SQL Server, the cast in a select – the data going out the door after the row has already been found – WILT NOT affect the cardinality estimate” –> do you mean “i believe it shouldn’t” or “it did not in my examples” or that “it really shouldn’t because you know the implementation”?

Because, as you may imagine, I searched for this topic because it happened to me… I got a query (longer than your example, several joins and left joins looking for some missing rows in one table related to the rest of the tables in the join)… When I just selected the columns as they were, it was something like 0.3 seconds.

When I wanted to select it as one string (whatever, I want to quickly put it in a monitoring notification, I know there are better solutions long term (i.e. format it on the higher layer), but this was supposed to be quick first ste), Anyway, when I selected the same colums, but cast the integer to string…

SELECT ” + Column1 + ‘ ‘ + Column2 + ‘ ‘ + CAST(Column3 as nvarchar(max)) FROM…

and the rest was the same,

it took ~2.5 seconds and showed that warning.

First I thought the same as you – I am converting just the values that were already selected, so it should not matter…. But I ran both queries several times and the times in both cases were pretty consistent, and the plans of those queries look different (the conversion itself does not take 2 seconds, it looks through different indexes)

using SQL server 2019

Can somebody please explain why it does that and how to prevent it (i.e. how to tell the SQL server that it really should convert/cast it AFTER the rows were already selected :))

interestingly, since the query gives me multiple results, so I wanted them to aggregate to a single output string ..

so I selected the columns raw, and performed the conversion in the aggregate

SELECT STRING_AGG(Column1 + ‘ ‘ + Column2 + ‘ ‘ + CAST(Column3 as nvarchar(max)) , ”) FROM

( Select Column1, Column2, Column3, FROM…

–the rest of the original select

) t

it gives a similar execution plan than before, which is again around 0.3 seconds

The same, but without the aggregate (just a SELECT from SELECT) is around 1.4s

it baffles me

I can’t really do free personal query troubleshooting, especially without plans. Feel free to post the query plans at DBA.stackexchange.com though and someone may be able to help.

Of course, I am not expecting a free personal troubleshooting, just a piece of general tip if you are aware of these situations (since you wrote that it should not happen, but it did). If you know what are the conditions when this is happening by design and how to elegantly circumvent them, please share.

If not, I’ll try my luck elsewhere 🙂

Please follow the instructions I’ve given you. Thanks!

And we’re still here half a decade later in SQL 2022 🙁

Very hard to run a clean sql shop when your server is throwing a thousand warnings PER SECOND on trivial statements.

Our example is:

APPLOCK_TEST(‘public’, UPPER(CONCAT(@AppLockKey, ‘,’, CAST(COALESCE(SJ_ProcessTaskParentID,SJ_ParentID) AS NVARCHAR(36)))), ‘exclusive’, ‘session’) = 1

It cannot possibly affect cardinality estimate.