Autoparameterized Trivial Queries May Not Get Partition Elimination

How’s that for a niche topic? You’re probably never going to know this, but since I had to figure it out the hard way, I’m writing this down so I don’t forget it: queries that get trivial optimization may not get partition elimination.

This post is going to be a little on the long side just because I have to set up a partitioned table. I also have to warn you that if you’re a partitioning pro, you’re not going to like the way I’m doing it in this post, but the thing is, I’m reproducing an exact client problem – setting up partitioning exactly the way their table had it. Let’s not waste time flaming the partition setup.

Setting up a partitioned table for the demo

I’m going to start with the Users table from the Stack Overflow database.

To reproduce the client issue that brought me here, let’s say we always filter users by Location and DisplayName, like this:

|

1 2 3 4 |

SELECT * FROM dbo.Users_partitioned WHERE Location = N'San Diego, CA, USA' AND DisplayName = N'Brent Ozar'; |

And say that we decided to partition the users by ranges of locations, alphabetically. I’ll create a partition scheme, function, and then copy the Users table into a Users_partitioned table.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 |

CREATE PARTITION FUNCTION pf_Alphabet (NVARCHAR(100)) AS RANGE LEFT FOR VALUES (N'a', N'b', N'c', N'd', N'e', N'f', N'g', N'h', N'i', N'j', N'k', N'l', N'm', N'n', N'o', N'p', N'q', N'r', N's', N't', N'u', N'v', N'w', N'x', N'y', N'z'); CREATE PARTITION SCHEME ps_Alphabet AS PARTITION pf_Alphabet ALL TO ([PRIMARY]); CREATE TABLE [dbo].[Users_partitioned]( [Location] [nvarchar](100) NULL, [DisplayName] [nvarchar](40) NOT NULL, [Id] [int] NOT NULL, [AboutMe] [nvarchar](max) NULL, [Age] [int] NULL, [CreationDate] [datetime] NOT NULL, [DownVotes] [int] NOT NULL, [EmailHash] [nvarchar](40) NULL, [LastAccessDate] [datetime] NOT NULL, [Reputation] [int] NOT NULL, [UpVotes] [int] NOT NULL, [Views] [int] NOT NULL, [WebsiteUrl] [nvarchar](200) NULL, [AccountId] [int] NULL, INDEX Location_DisplayName CLUSTERED (Location, DisplayName) ) ON ps_Alphabet(Location) INSERT INTO dbo.Users_partitioned ( [Location], [DisplayName], [Id], [AboutMe], [Age], [CreationDate], [DownVotes], [EmailHash], [LastAccessDate], [Reputation], [UpVotes], [Views], [WebsiteUrl], [AccountId]) SELECT [Location], [DisplayName], [Id], [AboutMe], [Age], [CreationDate], [DownVotes], [EmailHash], [LastAccessDate], [Reputation], [UpVotes], [Views], [WebsiteUrl], [AccountId] FROM [dbo].[Users]; |

Yes, you’re going to be angry that Location isn’t a great candidate for partitioning because data isn’t evenly distributed by Location, and you’re probably going to be angry about RANGE LEFT, and you’re going to have questions about different collations. Zip it. That’s not the point of this demo. This was the client’s existing partitioning strategy, and I have to do several demos for them to show different issues that we’re having with the setup. I love you a lot – no, a LOT – but I’m not going to craft a different demo for you. I’m just sharing this one demo with you because it’s easy to share publicly, whereas some of the rest I need to show can’t be shown publicly because it relates to their IP. I’m typing all this out because partitioning people are passionate about proper performance, and I just know they’re gonna flame me in the comments as if *I* designed this strategy. Anyhoo, moving on.

Running a trivial query

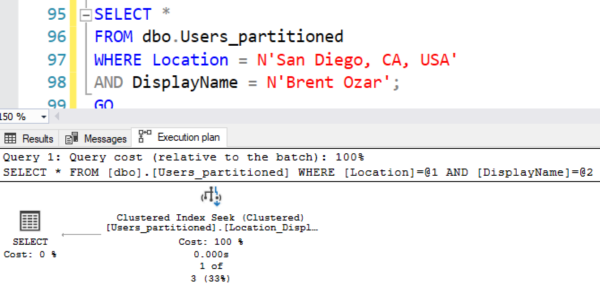

Let’s try our query to see if it divebombs into just one partition – the one containing San Diego. The actual query plan looks simple enough:

And it looks like we divebombed straight in, but right-click on the Clustered Index Seek and click Properties:

“Actual Partitions Accessed” shows that we accessed partitions 1 through 27. Another way to see it is SET STATISTICS IO ON, which shows that we read all 27 partitions of the alphabet.

|

1 |

Table 'Users_partitioned'. Scan count 27, logical reads 73 |

Why?

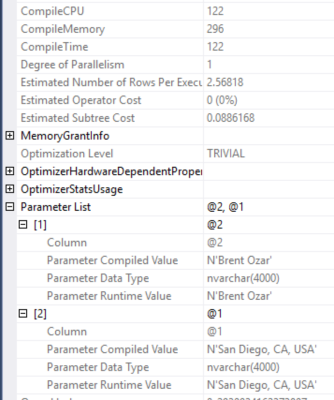

Right-click on the SELECT operator in the plan and look at the properties, and scroll down to Optimization Level. SQL Server believed this query was trivially simple, so it didn’t put much thought into building an execution plan.

Right-click on the SELECT operator in the plan and look at the properties, and scroll down to Optimization Level. SQL Server believed this query was trivially simple, so it didn’t put much thought into building an execution plan.

It’s not that SQL Server didn’t put any thought into it – note the 122ms of Compile CPU time. Partitioned tables generally see longer compilation times, even when they’re trivial. (And the more partitioned tables you have in a query, the worse this seems to get.)

Even though our query didn’t have parameters, SQL Server thought, “They’re probably going to run queries like this a few times, so I’m gonna automatically turn those literals (San Diego and Brent) into parameters.” Note the “Parameter List” in the screenshot – this is autoparameterization.

Now look, I gotta pick my battles here: I can’t teach you all of the intricacies of autoparameterization and trivial optimization inside one blog post.

Let’s set that aside, and keep going by building a stored procedure.

Put that same query in a stored procedure, and magic happens.

Instead of passing the literals in directly from the app, let’s make a stored procedure. Making a proc isn’t the only way of fixing this problem by any means, but it’s just a way of fixing it:

|

1 2 3 4 5 6 7 8 |

CREATE OR ALTER PROC dbo.usp_GetUser @Location NVARCHAR(100), @DisplayName NVARCHAR(40) AS SELECT * FROM dbo.Users_partitioned WHERE Location = @Location AND DisplayName = @DisplayName; GO EXEC usp_GetUser N'San Diego, CA, USA', N'Brent Ozar'; |

Now the logical reads tell a different story:

|

1 |

Table 'Users_partitioned'. Scan count 1, logical reads 4 |

And while the actual execution plan looks the same at first, showing a clustered index seek:

Look at the clustered index seek’s properties, and it shows that we only accessed 1 partition (partition count), partition #20:

And it isn’t because this query got full optimization, either!

It’s still trivial. That’s kinda wild.

It’s still trivial. That’s kinda wild.

The morals of the story are NOT:

- “You should put every query in a stored proc”

- “You should use variables”

- “You should add complexity to your queries to make them get full optimization”

The morals of the story ARE:

- Just because you see a clustered index seek on a partitioned table doesn’t mean it really seeked into one specific area.

- Partition elimination investigation requires looking at partitioned tables in a query plan and checking to see how many partitions were accessed.

- Just because you should get partition elimination doesn’t mean you will.

- Just because you do get parameterization in a stored procedure doesn’t mean you will get it everywhere that you run a similar query.

- Partitioning really isn’t a query performance feature: it’s a maintenance feature, making data loading & index maintenance easier, especially for columnstore tables. This whole article just plain ol’ wouldn’t have mattered on a non-partitioned index at all: we would have gotten a plain ol’ clustered index seek, less logical reads, and faster plan compilations.

- You can get faster query performance with table partitioning – but in most of the shops where I see it, it’s making query performance worse, and we have to put additional work in just to get equivalent query performance that a non-partitioned table would get.

I said you couldn’t flame me for the partition design on this table, but…you’re totally allowed to flame me for that last bullet point. That’s fair game.

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

5 Comments. Leave new

I’ve worked with partitions. You ALSO have to be careful about data types. Comparing SmallDatetime to DateTime for instance is NOT GOOD.

Actually, I agree completely with Brent. In all cases where my clients have used partitioning it was for data retention issues — not performance. Now, if we could just get Microsoft to have different fill factors per partition…..

One thing I’ve noticed: If you partition by date, you need not include the date field in the index. For other data types (integers specifically tested), it is required, and I would believe Brent’s varchar example. Any thoughts on why the date does not require it to be in the index? I see it hit one partition from the proc just as noted above, when the primary key (and only index) is the identity column.

Doug – for general questions, head to a Q&A site like https://dba.stackexchange.com. (Sorry, I just can’t do free Q&A here due to the volume of questions.) Thanks for understanding.

Another use for partitioning worth mentioning is to avoid deadlocks in a heavily-updated multi-tenant database. You can prevent table-level locks being taken using SET LOCK_ESCALATION = AUTO which limits escalation to the level of a tenant’s partition, as long as queries are properly constructed to include the partition key.