DBA Training Plan 8: What’s Inside Your Own Databases

In the last episode of the DBA Training Plan, we talked about what’s inside the MDF file: a series of pages that store a database’s tables. Now, it’s time to check out the contents of YOUR database to understand what’s using the most space, what’s getting used the most often, and what you might be able to clean out to make your job easier.

Let’s start by querying the DMVs.

SQL Server has tons of dynamic management objects, also known as dynamic management views (DMVs) or dynamic management functions (DMFs) depending on how they’re called. Generally, if you hear someone talking about DMVs, they’re talking about these system objects that LOOK like tables, but are really objects you can query to see what’s going on inside your database.

Try running this inside one of your databases – it doesn’t really matter which one, and it’ll go blazing fast without blocking anyone else:

Transact-SQL

|

1 |

SELECT TOP 100 * FROM sys.indexes; |

The results of sys.indexes is a bunch of metadata about your indexes: what kinds of indexes you have, what kinds of locks they allow, whether they’re filtered, and much more. But, uh, reading this stuff is painful – for example, you don’t even get the name of the tables involved.

Think of the DMVs as building blocks: you have to join lots of them together before you’ve got anything useful. In the dark ages of the 1990s and 2000s, database administrators spent a lot of time doing just that – building their own houses out of building blocks. Their queries had all kinds of bugs, didn’t account for edge case scenarios, and weren’t kept up to date with the latest additions in each new version of SQL Server.

Today, there’s an easier way: sp_BlitzIndex.

sp_BlitzIndex is a free, robust, open source script originally started by Kendra Little. Since it’s open source and lives in a public Github repo (along with a lot of other cool scripts), it’s gotten a ton of updates over the years. Today, it’s a powerful, easy way to understand what’s being stored in your database.

Download it in our free First Responder Kit, and when you open the zip file, you’ll see a list of SQL scripts. For now, we’ll just start by opening sp_BlitzIndex.sql in SSMS, and execute that in your Master database on a development server. The script only creates sp_BlitzIndex as a stored procedure – that’s all. It’ll execute instantly.

There are lots of parameters for it, but let’s start with:

Transact-SQL

|

1 |

EXEC sp_BlitzIndex @DatabaseName = 'StackOverflow2013', @Mode = 2; |

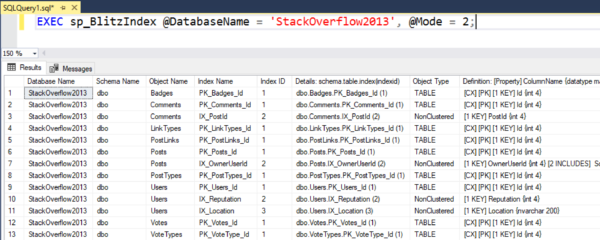

Change StackOverflow2013 to the name of a database you care about. What the Mode 2 switch does is give you an inventory of all the tables & indexes:

In the Definition column, CX means Clustered Index, and PK means Primary Key. In my screenshot, all my primary keys are clustered – but that may not be the case in your own database.

To get to know your database, copy/paste this spreadsheet into Excel and turn it into a table. Sort it by a few different columns and ask yourself a few questions:

- Sort by Rows descending, and make a note of which tables have the most rows.

- Sort by Reserved MB descending, and think about how much space is being used up by these objects. Note that some of your tables might have really wide indexes with lots of columns – you might be duplicating the size of the table many times over. (Now’s also a good time to take note of any backup tables you might have made long ago, and forgotten to delete along the way.)

- Sort by Create Date descending and look at recently created objects.

- Finally, scroll way over to the right and note the Drop & Create SQL columns. Since the DMVs have so much metadata, we can basically build the index creation scripts from scratch – making it way easier to build undo scripts as we make changes.

Since you’re running this in a development server, a lot of the columns will be empty or zeroes. Total Reads is a great example: most of your indexes probably aren’t getting any action on the development server because you’re not running the app’s full workload over there. Once you’re comfortable running sp_BlitzIndex on a development server, and you understand that it isn’t doing damage or blocking other queries, then you can take it up a level by running it on a production server. In production, you’ll get much more valuable diagnostic data.

You’re going to want to drop indexes. Hold up.

For now, just focus on getting to know what you’ve got inside these databases – after all, they’ve probably been the same way for years, and another few days isn’t going to kill you. Before you make changes to the indexes, I’ve got some more stuff to teach you about the risks and rewards.

Instead, your homework today is to run:

Transact-SQL

|

1 |

EXEC sp_BlitzIndex @GetAllDatabases = 1, @Mode = 2; |

That pulls the data for all databases at once. It’ll be slow, since it’s basically looping through each database one at a time. (We don’t have a way to quickly gather data across all databases at once.) If you have over 50 databases, we also make you pass in @BringThePain = 1 just so you understand that it’s going to take a while, hahaha.

Copy/paste the results into Excel, and don’t make changes to indexes – but DO make changes to TABLES. Before we start doing index tuning, I want you to clear out the garbage. Look at your largest tables – say, the biggest 25% by rows and by size – and look at their Reads columns. Are these tables backups that were made a long time ago in a galaxy far far away? If so:

- Ask the team if they’re still in use

- If not, rename the tables – add _ToBeDeleted_20190830 at the end of the table name (or whatever date you want) – that way, if somebody comes running in screaming that their table is gone or their query stopped working, you can just rename it back to bring it back to life

- Set yourself a reminder for that YYYY/MM/DD date to go back in and drop those tables (making sure you’ve got a good database backup first, and keep that backup around)

Why clean out the garbage first? Because these tables are slowing down your backups, restores, corruption checks, statistics updates, and much more. I’ve seen cases where just taking out the garbage cut our nightly maintenance job times in half!

In our next episode, we’ll start digging into the health & design of those indexes.

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

2 Comments. Leave new

I have a question regarding indexes with INCLUDE(). I see several recommended indexes that index on the same column(s), but include different columns.

E.g.

INDEX ON COL1 INCLUDE (COL2, COL3, COL4)

INDEX ON COL1 INCLUDE (COL5, COL6, COL7)

In general, would those be better suited as one index that includes columns 2-7, or as two separate indexes?

My thought process is, one bigger index saves resources involved in maintaining multiple somewhat redundant indexes, while two separate indexes would reduce what needs to be loaded into memory when a query uses the index.

On the latter point, does SQL only load into memory the columns that were needed for the query? If that’s the case it seems the single index would always be better. If not, it seems like an “it depends” situation.

Great question! It’s bigger than I can do justice to in a blog comment, but we dig into it in my Fundamentals of Index Tuning course.