Adaptive Joins, Memory Grant Feedback, and Stored Procedures

Not Exactly The Catchiest Name

There’s a TL;DR here, in case you don’t feel like reading the whole darn thing.

- Batch mode memory grant feedback works with stored procedures

- It takes several runs to adjust upwards to a final number

- It seems to only adjust downwards once (in this case by about 40%)

This isn’t perfectly scientific. It’s just one example that I came up with, and may behave differently both in the future, on other systems, and for other code.

But you gotta start somewhere, eh?

The Rest Of The Thing

I have a pretty simple stored procedure here, that qualifies both for Batch Mode Memory Grant Feedback and Batch Mode Adaptive Joins.

And I thought I had a hard time naming things.

Transact-SQL

|

1 2 3 4 5 6 7 8 9 10 11 12 |

CREATE OR ALTER PROCEDURE dbo.UsersByLastAccessDate ( @LastAccessDate DATETIME ) AS BEGIN SELECT uc.Id, uc.Reputation, p.Score FROM dbo.Users_cx AS uc JOIN dbo.Posts AS p ON p.OwnerUserId = uc.Id WHERE uc.LastAccessDate >= @LastAccessDate; END; |

For different values passed to Last Access Date, the Adaptive join changes.

This returns 622,961 rows:

Transact-SQL

|

1 |

EXEC dbo.UsersByLastAccessDate @LastAccessDate = '20160101' |

This returns 24,803 rows:

Transact-SQL

|

1 |

EXEC dbo.UsersByLastAccessDate @LastAccessDate = '20161211 01:00:00.003' |

Clearly processing these different amounts of data requires different amounts of memory.

It’s not clear from the plan which operator consumes the memory (there are no Memory Fraction indicators), but we can assume that it’s the Adaptive Join operator. In Adaptive Join plans, both the join types have a startup memory cost. This is a safety net to support the runtime decision.

Little Plan First

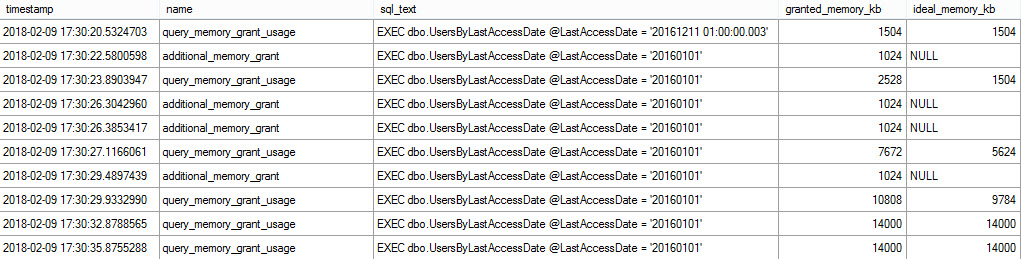

When we execute the proc with the small value first, we start off with a small memory grant, that over five iterations adjusts upwards to 14,000KB, and stops there.

In between each run, it fires off a request for more memory next time. Feedback, and all that.

I cast a pretty wide net with the stuff I was collecting while running this. Some of it caught stuff, some of it didn’t. I’m just showing you the interesting bits.

For instance, there were a bunch of rows for the spill event, but everything was NULL for them, and the spill details aren’t really pertinent here.

Whatever.

Make Profiler Great Again.

Big Plan First

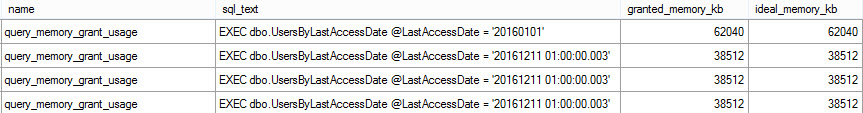

When the large plan fires first, something a bit odd happens.

It asks for a much larger grant up front than it it adjusted to last time — 14MB vs 62MB:

When the smaller plans run, the memory grant cuts down by 23.5MB, and stops adjusting from there.

On each subsequent execution, there’s a plan warning about excessive memory.

Further executions of the large plan don’t increase the memory grant. Ticking the Last Access Date back to 2010 also doesn’t cause the grant to increase upwards.

Which Is Better?

Well, if the memory needs of the query truly are 14MB of memory overall, it would seem like starting low and ticking up is ideal. I mean, unless something awful happens and your next few runs just spill and take forever and everyone hates you.

If the grant of 38MB isn’t harming concurrency, and sets a safe bound for larger values, it might not be bad if you end up there after just one run.

In either situation, you’re much better off than you are on earlier versions of SQL that can’t swap joins at run time, or adjust memory grants between runs.

Those are two of the many things that make dealing with parameter sniffing difficult.

I really hope that (JOE SACK RULES) the QO team at Microsoft keep at this stuff. Right now, it only helps for queries running in Batch Mode, which requires the Scent Of A Column Store.

Making features like this available in Row Mode, or making aspects of Batch Mode accessible to Row Mode queries…

One can dream.

Thanks for reading!

Brent says: I feel for people out there who don’t have the luxury of spare time to read blogs. You, dear reader, are going to be able to recognize these symptoms when they show up in your server, but other folks are going to be even more mystified about why their query performance is all over the place when they swear haven’t changed the queries. Don’t get me wrong, I love this feature – but in these early iterations of it, it’ll catch people by surprise.

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields