Iomega StorCenter PX4-300d NAS Review: iSCSI Monster

I wanted to love this monster from the moment I read the spec sheets:

- Network attached storage device with 4 or 6 hot-swappable drive bays

- Available empty (so you can bring your own drives, including SSDs)

- iSCSI server with 2 network ports, jumbo frame support, VLANs

- Official VMware ESX/ESXi, Hyper-V, Windows DFS compatibility

- RAID 0/1/5/10

- Cloud support – automatically copy files to Amazon S3, Mozy, or other Iomegas over the Internet

- External USB3 drive support (for leftover USB hard drives)

- Insane number of home media support options including BitTorrent downloading, Time Machine backups for Apples, Bluetooth, automatic uploads to Facebook/YouTube/Flickr, recording server for Axis/Panasonic/D-Link webcams

- Quiet (31dBA max) and low power (based on an Intel Atom CPU and 2GB of RAM)

The full feature list is ridiculous – it’s absolutely everything I needed for my VMware lab and my backups. At around $700 from Amazon for the diskless version, I couldn’t resist. I wouldn’t recommend buying the versions that come populated with hard drives – if you ever need to replace the drives, you’re in for a rough time, and oddly, the bring-your-own-disk version doesn’t suffer from that issue.

Choosing Drives and RAIDs for the PX4 and PX6

As of this writing, the list of officially supported hard drives is pretty short:

- Hitachi Deskstar 2TB hard drive – $115

- Hitachi Deskstar 3TB hard drive – $175

- Micron RealSSD C400 128GB solid state drive – $240

All of the drives in a PX4/PX6 storage pool have to be the same size and speed, and I didn’t really need performance, so I chose to go with four of the Hitachi 3TB drives. With those drives, RAID 5 would give me 9TB of usable storage, and the possibly-faster RAID 10 would give me 6TB. I wouldn’t recommend the 2TB drives for a reason that’ll be clear shortly.
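If you want to check the capacity math for other drive counts, here is a rough sketch in Python. The function name is my own invention, not anything from Iomega, and it ignores filesystem and formatting overhead, so real-world numbers will come in a bit lower.

```python
def usable_tb(level: str, drives: int, size_tb: float) -> float:
    """Rough usable capacity for identical drives, ignoring all overhead."""
    if level == "RAID 0":
        return drives * size_tb        # striping only, no redundancy
    if level == "RAID 1":
        return size_tb                 # every drive holds the same copy
    if level == "RAID 5":
        return (drives - 1) * size_tb  # one drive's worth of parity
    if level == "RAID 10":
        return drives / 2 * size_tb    # half the drives are mirrors
    raise ValueError(f"unknown RAID level: {level}")


# Four 3TB drives, as in my PX4
for level in ("RAID 5", "RAID 10"):
    print(level, usable_tb(level, 4, 3), "TB")
```

Swap in 2 for the drive size and the same four bays give 6TB in RAID 5 or 4TB in RAID 10.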

PX6 users have 6 drives to choose from, so in that environment, it might make sense to go with four magnetic hard drives and two solid state drives. This would allow two tiers of storage: a blazin’ fast SSD mirror, and a bigger/slower RAID 5 pool. With VMware ESX/ESXi’s Storage vMotion, we can move virtual machines back and forth between the two RAID pools without taking the VM down for a reboot. It’s a fairly inexpensive way to get tiered storage, but with just 128GB in the fast pool, I’m not sure how useful this would be in practice.

Pluggin’ in the N: Network Attached Storage

The PX4-300d comes with the basics: power brick, one Ethernet cable, and the management tools CD. After adding drives and plugging in power and Ethernet, the control panel displays the IP address it fetched from DHCP. Fire up a web browser, go to that address, and you’re going to be impressed with the user interface. You can even test drive the Iomega StorCenter control panel online. (No, that’s not my Iomega, and no, you can’t delete data. I tried.)

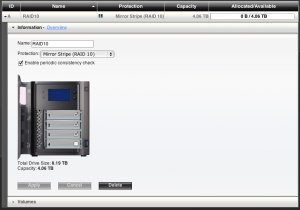

For the first minute or two, Iomega impressed the heck out of me. The device could fetch its own firmware upgrades over the Internet, and yes, it was up-to-date. I went into Drive Management, and sure enough, it offered a really easy GUI to configure RAID levels. I picked RAID 10 and clicked Apply, but Iomega warned me that I hadn’t chosen any drives yet. I’d missed the subtle little visual checkboxes inside each hard drive in the GUI, so…

I need to stop here and tell you a little about myself: I like to break things.

I didn’t say I like to take things apart and put them back together – oh no. I just like to find new ways to break ’em. I take an evil satisfaction out of knowing somebody, somewhere didn’t test their code.

So I did what I do – I checked three boxes, chose RAID 10, and clicked Apply.

As far as I know, there’s not a way to perform RAID 10 with 3 hard drives (it requires an even number of drives) but the PX4 happily started initializing the array. I sat there absolutely dumbfounded – this wasn’t a small bug, but a giant, ugly, monstrous bug. I had no idea what the PX4 might be doing to the disks behind the scenes or whether I’d have any redundancy whatsoever.
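For what it's worth, the sanity check I expected the GUI to run is tiny. This is only a sketch of that kind of check, assuming classic nested RAID 1+0 rules; the names are hypothetical and I have no idea what the PX4's firmware actually does.

```python
# Minimum drive counts for the classic RAID levels the PX4 offers (assumed rules)
MIN_DRIVES = {"RAID 0": 2, "RAID 1": 2, "RAID 5": 3, "RAID 10": 4}


def validate_raid(level: str, selected_drives: int) -> list[str]:
    """Return a list of problems with the selection; an empty list means OK."""
    if level not in MIN_DRIVES:
        return [f"unknown RAID level: {level}"]
    problems = []
    if selected_drives < MIN_DRIVES[level]:
        problems.append(f"{level} needs at least {MIN_DRIVES[level]} drives")
    if level == "RAID 10" and selected_drives % 2 != 0:
        problems.append("RAID 10 needs an even number of drives")
    return problems


print(validate_raid("RAID 10", 3))  # my three checked boxes
print(validate_raid("RAID 10", 4))  # what I should have picked
```

A check like this would have stopped my three-drive experiment before the array ever started initializing.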

I wouldn’t be so freaked out about this if Iomega wasn’t owned by EMC, one of the biggest, most reliable companies in the storage industry. I had instant flashbacks to the click of death, the nasty sound Zip drives made before they crashed, resulting in a class action lawsuit against Iomega. Was Iomega back to its old tricks of cutting costs at our peril, or were they up to EMC’s quality standards? I took a deep breath, pretended I never saw it, and deleted the array. I set up a four-drive RAID 10 array, decided to let one bug slide, and pressed on.

While the array is initializing, the Iomega’s LCD display and banners across the top of the web UI both warn the user that performance is degraded – very nice touch. As the array initializes, you can continue to use the storage array, and even write data to it – again, nice touch. However, the initialization process takes hours – I left mine running overnight – which means I wouldn’t recommend the 2TB drives. A PX4 with 2TB drives is $1,165, and with the 3TB drives it’s $1,405 – just $240 more for 50% more storage. Yes, you could upgrade the drives later, but it’s going to take a loooong time to swap out each drive one by one and let the array rebuild.

Some Server Changes Require Reboots

As the array initialized, I started wandering through the web control panel setting things up. I changed the name to LittleBlackBox, and I was taken aback when the PX4 asked to reboot itself. Really? Just for a name change? Okay, well, alright, nobody was on the array yet anyway.

Next up – configure remote access. The PX4 will connect to a dynamic domain name service so you can use a simple DNS name to access your data from anywhere. Sounds good, so I set it up and – you guessed it – reboot required.

Next up – configure iSCSI and jumbo frames. This one at least didn’t require a complete NAS reboot, but it did warn that “This may take a few minutes to complete. Anyone currently accessing this device will be disconnected. Do you want to continue?”

The Problem with Rebooting an iSCSI NAS

NAS reboots aren’t a problem for some home users. If iTunes stops working for a few moments, or my pictures stop uploading to the cloud, life goes on.

In a business or virtualization lab environment, it’s a massive problem. VMware will be storing the virtual hard drives for several running machines on it. In order to reconfigure many settings on the PX4/PX6, I have to:

- Shut down all of my virtual servers

- Shut down VMware

- Restart the Iomega and wait for it to come up

- Start up VMware

- Start up my virtual servers

This is a total showstopper in a corporate lab environment where multiple people might be playing with the device. I simply wouldn’t be able to count on junior sysadmins not changing settings on the Iomega, triggering a reboot, and losing all of my virtual servers. I would have to tightly control security settings on the NAS, but the only security option is a checkbox for administrative privileges: either you’re in, or you’re not.

That NAS is a Monster, M-M-M-Monster

Philosopher L. Gaga once said in a poem entitled “Monster”:

I’ve never seen one like that before

Don’t look at me like that

You amaze me

While she was referring to crazy yet well-endowed gentlemen, I’m sure she would agree that the PX4-300d is just such a monster. Its feature list is the size of a baby’s arm, but sometimes it’s got the business logic of a wiener.

The good news is that it’s got killer qualities for a virtualization lab – or perhaps even a small business’ virtualization needs. The bad news is that it doesn’t have the stability or testing to back it up.

I was heartbroken. I’d really wanted to recommend this storage device to small businesses for their staff to learn virtualization, Storage vMotion, and perhaps even as a backup target. With the current version of firmware, though, I just couldn’t do it. With that in mind, I gave up on testing the network card failovers (which do work at first glance), multipathing (which doesn’t – more on that later), the speed differences with jumbo frames, and anything reliability-related. For now, it’s just a good device for geeks to use in their labs and homes, so I’ll focus the rest of the review on that.

Device Features: Shares, Apps, Time Machine, BitTorrent, More

Onboard storage is set up in a hierarchy:

- Drives are grouped together in pools (like 2 drives in a RAID 1 pair or 4 drives in a RAID 5). Pools are a fixed size.

- Pools are carved up into multiple volumes. Volumes are a fixed size.

- Volumes are carved up into multiple shares. Shares are thin provisioned.

- Applications are configured at the share level.

So in my PX4-300d, I have:

- Storage pool – 4 drives in a RAID 10 for 6TB of usable space

- “Media” volume – a 1TB volume that lives in my storage pool

- “Torrents” share – a thin provisioned share in the Media volume. It starts at zero size, and can grow to whatever size of files I dump in there – up to 1TB. I can access the Torrents share as a folder in Windows or on the Mac.

- The Torrents onboard application added a Download folder in the Torrents share. I can copy any torrent file into this folder, and the Iomega begins downloading it.
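The hierarchy is easier to see as a data structure. Here is a minimal sketch of my setup, with class names, field names, and the pool name "Pool0" made up for illustration; it models the idea of fixed-size pools and volumes with thin provisioned shares, not Iomega's actual internals.

```python
from dataclasses import dataclass, field


@dataclass
class Share:
    name: str
    used_gb: float = 0.0  # thin provisioned: starts at zero and grows


@dataclass
class Volume:
    name: str
    size_gb: float  # fixed size, carved out of a pool
    shares: list[Share] = field(default_factory=list)

    def free_gb(self) -> float:
        # Shares can grow until the volume they live in is full
        return self.size_gb - sum(s.used_gb for s in self.shares)


@dataclass
class Pool:
    name: str
    raid_level: str
    usable_gb: float  # fixed by the drives and RAID level
    volumes: list[Volume] = field(default_factory=list)

    def unallocated_gb(self) -> float:
        return self.usable_gb - sum(v.size_gb for v in self.volumes)


torrents = Share("Torrents")
media = Volume("Media", size_gb=1000, shares=[torrents])
pool = Pool("Pool0", "RAID 10", usable_gb=6000, volumes=[media])

torrents.used_gb += 4.7  # drop a DVD-sized download into the share
print(media.free_gb(), pool.unallocated_gb())
```

Filling the share eats into the volume, but the volume's 1TB stays reserved in the pool whether the share is empty or full.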

The application user interfaces are designed by geeks, for geeks: they’re just good enough to be point-and-click usable, but not good enough that I’d want to walk family members through configuring or troubleshooting them. For example, in the Torrent configurations screen, there’s a Port box. You can manually configure a port or use the automatically supplied one, but you can’t tell if it’s actually working. There’s no “test the router” functionality built in, so I have no idea if uploads are working or not until I start seeding a torrent. There are edit boxes for Maximum Download Speed and Maximum Upload Speed, but there’s no unit shown – is it KB/sec or MB/sec?

The apps have just enough capabilities to say they work, but not much more than that. Modern BitTorrent clients all have scheduling setups so that you can transfer more at night and throttle back the sharing during the day – not here.

The Amazon S3 sync app will upload your files to S3, but it won’t delete the ones that get deleted on the NAS, thereby making your storage bill steadily increase. If you use it for, say, SQL Server offsite backups, you would want to reuse your backup file names in order to get a 7-day rotation in the cloud. You’d run into a separate issue anyway – S3’s max file upload size is 5GB. Any larger files just don’t get uploaded, and you don’t get an alert. No upload/download throttling or time-of-day scheduling here either – your bandwidth will just slow to a crawl as new files are added to S3.
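To make the file name trick concrete, here is a small sketch. Both helper names are hypothetical, the `.bak` naming is just an example, and I'm assuming "5GB" means 5 × 1024³ bytes. The idea is that a name built from the day of the week repeats every seven days, so next Monday's upload replaces this Monday's instead of piling up.

```python
from datetime import date

DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]
SYNC_SIZE_LIMIT = 5 * 1024**3  # the 5GB ceiling, assumed to be in binary units


def rotating_backup_name(database: str, day: date) -> str:
    """Build a name that repeats weekly, giving a 7-day rotation in the cloud."""
    return f"{database}_{DAYS[day.weekday()]}.bak"


def too_big_for_sync(size_bytes: int) -> bool:
    """Flag files the sync app would silently skip."""
    return size_bytes > SYNC_SIZE_LIMIT


print(rotating_backup_name("Sales", date(2011, 8, 1)))
print(too_big_for_sync(6 * 1024**3))
```

Since the Iomega won't warn you about skipped files, a size check like this belongs in whatever job writes the backups.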

Time Machine works wonderfully as a backup target, but the user is presented with two cryptic fields: Apple Network Hostname and Ethernet ID. The network hostname comes from your Mac’s System Preferences, Sharing, click Edit, and it’s the part you can edit. The Ethernet ID comes from going into Terminal, type ifconfig, hit enter, and look for en0 – the next line says ether, and copy/paste the characters to the right of that. The Iomega doesn’t tell you any of this. The only thing it does tell you is the Time Machine backup folder’s size, a constant 173MB, and even that is wrong. After several days of backups, the folder sizes still showed incorrectly on the Time Machine panel of the Iomega.

But it works.

At least, I think it works.

And that’s what makes this review so hard. When the control panel tells me it’s configuring a RAID 10 array with 3 drives, I know it’s wrong. A basic error like that shakes my confidence in the entire unit. Same thing with the incorrect Time Machine folder sizes, and the wacko configuration screens. If I can’t trust what it’s showing me, how can I trust what’s happening behind the scenes? I’m not sure. This doesn’t bother me too much for home media backups, but it would bother me a lot for running my virtual machines.

Is the Iomega PX4-300d the Best VMware Storage Server?

Most business iSCSI network implementations involve a completely separate logical network for iSCSI – separate IP addresses on a separate subnet. For example, my home network is on 192.168.37.x, so I might use 192.168.47.x for my iSCSI network. The iSCSI traffic is kept away from the regular network.
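Here is a quick way to convince yourself that two subnets really are separate, using Python's standard `ipaddress` module. I'm assuming /24 masks for both networks, and the host addresses are made up.

```python
import ipaddress

# Assumed /24 masks for the two networks described above
home = ipaddress.ip_network("192.168.37.0/24")
iscsi = ipaddress.ip_network("192.168.47.0/24")


def network_for(address: str) -> str:
    """Say which of the two networks an address belongs to."""
    ip = ipaddress.ip_address(address)
    if ip in iscsi:
        return "iscsi"
    if ip in home:
        return "home"
    return "unknown"


print(home.overlaps(iscsi))          # False: the two ranges never collide
print(network_for("192.168.47.10"))  # iscsi
print(network_for("192.168.37.25"))  # home
```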

Most business iSCSI setups also involve redundancy: the storage device is plugged into the network with at least two network patch cables plugged into two different network cards. If either one fails, you’re still able to talk to the storage.

At first glance, the PX4 can handle either one of those requirements, but not both. If you want two separate logical networks, then you probably want to isolate the iSCSI traffic completely. You can configure one of the PX4’s network ports into your management network (like 192.168.37.x) and plug the other into an iSCSI network. When you do that, you just lost redundancy. If you choose instead to have both of the PX4-300d’s network jacks plugged into the same network, that network is going to need to see the outside world if you want to use any of the PX4’s home-media-savvy features like BitTorrent.

This is where the good monster comes in again.

This Good Monster Has VLAN Support

This NAS supports VLANs – multiple virtual network subnets attached to the same network card. Imagine an incoming network packet that finds its way to your server, and it’s got a tiny part at the beginning saying, “I’m coming from Virtual LAN #1.” Your operating system would need to understand that it’s attached to a certain subnet and route it to Virtual Network Card #1. The next packet might come in saying, “I’m coming from Virtual LAN #2,” and your computer would understand that it’s part of your Virtual Network Card #2 – the one for iSCSI.

Granted, your one network cable is only so fast, and we can’t stuff 2Gb of traffic into a 1Gb ~~bag~~ patch cable. However, this does let us segregate network traffic at the switch level so that your chatty iSCSI traffic doesn’t overwhelm your regular network, and your chatty BitTorrent client traffic doesn’t overwhelm your iSCSI storage network. While it is indeed delicious to get your chocolate in my peanut butter, it’s not nearly as delicious to get your BitTorrent in my iSCSI.
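That "I'm coming from Virtual LAN #2" label is an 802.1Q tag: four extra bytes in the Ethernet header, right after the two MAC addresses. Here is a sketch that builds and reads one. The helper names and MAC addresses are made up, and real network stacks handle this for you, so treat it as an illustration only.

```python
import struct

TPID_8021Q = 0x8100  # marks an Ethernet frame as carrying a VLAN tag


def tag_frame(dst: bytes, src: bytes, vid: int, ethertype: int, payload: bytes) -> bytes:
    """Build a VLAN-tagged Ethernet frame (priority bits left at zero)."""
    return dst + src + struct.pack("!HHH", TPID_8021Q, vid & 0x0FFF, ethertype) + payload


def vlan_id(frame: bytes):
    """Return the VLAN ID of a tagged frame, or None if the frame is untagged."""
    if len(frame) < 16:
        return None
    tpid, tci = struct.unpack("!HH", frame[12:16])
    if tpid != TPID_8021Q:
        return None
    return tci & 0x0FFF  # the low 12 bits carry the VLAN ID


frame = tag_frame(b"\xff" * 6, b"\x02" + b"\x00" * 5, 2, 0x0800, b"iSCSI goes here")
print(vlan_id(frame))
```

The switch and the host both read those 12 bits to decide which virtual network a frame belongs to.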

VLANs require a savvy operating system like VMware ESX/ESXi and a VLAN-capable switch. I chose the $125 Cisco SLM2008-T because it supports VLANs, jumbo frames, has 8 ports, and it’s cheap. Cisco switches have a reputation for being difficult to administer, but this one comes with a pretty good web control panel. The setup process of putting in VLANs, jumbo frames, and ESXi configurations is a good idea for a future blog post, but suffice it to say that between Iomega’s web UI, Cisco’s web UI, and the vSphere Client user interface, you can be up and running with a very real-world-ish lab setup in under an hour – for some values of “running.”

Here comes the bad monster again – users can’t exclude network interfaces from iSCSI use. Every time VMware rescanned the iSCSI adapters, the Iomega happily reported back that iSCSI services were available from every single IP address it owned. I wanted to just do iSCSI over one network subnet, not both – no can do. After pulling my hair out for a few hours, I threw in the towel and switched to NFS. It worked the very first time I tried it, and it came with a nice perk: the StorCenter’s LCD display panel actually reflects the right amount of used space on NFS volumes. iSCSI volumes show up as completely full even if there’s not a single file on ’em. (That’s not the device’s fault, but users won’t know that.)

That’s probably a good lesson for StorCenter users: the features that require zero parameters are easy, and they work like a champ.

How Fast is This Cheap NAS?

Geeks love to tweak parameters. I want to set the stripe size, caching, NIC load balancing, and flux capacitor voltage for every storage device. That’s not what the StorCenter PX4/PX6 is about: it’s just, uh, storage. Just as the other LifeLine apps don’t have much in the way of parameters, the iSCSI app checks just enough boxes to work – but that’s where the fun ends.

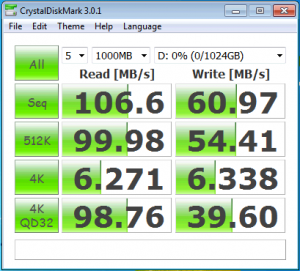

Most of my Windows-based iSCSI tests were able to saturate a 1Gbps Ethernet link with reads or with big sequential writes, and small or random writes ran around 10-60 MB/sec. I was surprised, though, that no matter what tricks I played with multipathing or multiple iSCSI volumes striped together, I just couldn’t get this thing to blow past a single NIC’s throughput. The lack of network card performance metrics on the Iomega made it a little tricky to troubleshoot, but after I checked the Cisco switch metrics, I got proof: the iSCSI traffic was only sent back through one network card on the Iomega – specifically, NIC #1.

I can write iSCSI data to both of the PX4’s network cards simultaneously. They just won’t send data back from both simultaneously – at least, not when they’re both on the same subnet. Maybe by getting even fancier with the multipathing setup and putting the two network cards on two different VLANs, in two subnets, I might be able to break past the 100 MB/sec read speed limit. (For more about multipathing, check out my SAN Multipathing series.)
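For context on that ceiling, here is a back-of-the-envelope sketch of how much payload a single gigabit link can carry. It counts only standard Ethernet, IPv4, and TCP overhead; it assumes no TCP options and ignores iSCSI's own headers, so real numbers land a little lower. The function name is mine.

```python
def max_tcp_payload_mb_per_sec(link_gbps: float, mtu: int) -> float:
    """Best-case TCP payload rate in MB/sec (decimal megabytes)."""
    wire_overhead = 14 + 4 + 8 + 12  # Ethernet header, FCS, preamble, inter-frame gap
    payload = mtu - 20 - 20          # MTU minus IPv4 and TCP headers
    frame_on_wire = mtu + wire_overhead
    raw_mb_per_sec = link_gbps * 1000 / 8  # 1 Gbps = 125 MB/sec before overhead
    return raw_mb_per_sec * payload / frame_on_wire


print(round(max_tcp_payload_mb_per_sec(1, 1500), 1))  # standard frames
print(round(max_tcp_payload_mb_per_sec(1, 9000), 1))  # jumbo frames
```

By this estimate one gigabit NIC tops out a bit under 120 MB/sec with standard frames and a few MB/sec higher with jumbo frames, so 100 MB/sec is already close to what a single link can do.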

But you know what? 100MB/sec is fine for me. See, if I really needed speed, I would have filled this thing with SSDs to begin with. Solid state drives excel at random access, and that’s what every Iomega StorCenter NAS is going to end up doing – lots of random access. Multiple VMs accessing the same LUN, BitTorrents being transferred, MP3s being played, these little devices are a party in a box. The drives are going to be worked hard, and I bet for most people, the real speed limit will be the random access speed of the drives anyway.

But while troubleshooting multipathing performance, I ran into the bad side of the monster again. I unplugged one of the Iomega’s two network cables, and I didn’t get any warnings whatsoever. The PX4 didn’t show any errors on the LCD display or the web control panel, and it didn’t even notify me via the built-in email alerts. I had to dig into the network screen to even figure out that one of the cables was unplugged. Upon reconnecting the cable, the Iomega autonegotiated to 10Mbps (not 100, not gig, but 10) half duplex. No warnings were shown on the control panel for that, either, and to make matters worse, you can’t set the Ethernet speeds. It’s autonegotiate or nothin’.

Bottom Line: It’s a Little Monster Alright.

The Iomega PX4-300d NAS seduced me with its long, strong…feature list, and I’m willing to overlook the wild infestations of ~~crabs~~ bugs for my lab at home. It’s the first NAS I haven’t returned to the shop in less than 72 hours. Like the philosopher said:

Look at him, look at me

That boy is bad, and honestly

He’s a wolf in disguise

But I can’t stop staring in those evil eyes

Who should buy the StorCenter PX4/PX6? Home users that want one quiet, capable box to sit in a closet handling backups, media, BitTorrents, uploading photos to the cloud, and other menial chores. Yes, you could build your own more-capable solution for less money, but it won’t be as point-and-click easy as the Iomega. IT, DevOps, and developer managers should also pick up one of these as a reward for a well-performing team. Throw one of these under a cubicle and for less than the price of a good workstation, the entire team now has an iSCSI sandbox and shared MP3 storage off the domain and away from the corporate IT overlords.

Who shouldn’t buy it? I wouldn’t recommend the PX4/PX6 in a business environment for anything other than a lab – at least, not until versions of the firmware come out that fix the glaring UI bugs and stop users from rebooting the NAS. I would be very uncomfortable going into a small business as a consultant, selling them a shared storage setup using a StorCenter PX4/PX6, and walking away.

Can they fix these issues with firmware updates? Yes, but only if they test the new firmware updates better than they’ve tested so far, and as of August 2011, it’s not looking good. After applying a firmware update to fix the VMware multipathing issue, I received this disturbing email from Iomega:

Thank you for downloading the recent firmware update for your Iomega StorCenter px. After release, we identified an issue with growing or expanding storage pools with the v3.1.10.45882 update. If you have not yet applied the update to your system, please wait. We will be releasing a new version of the firmware that resolves the issue soon. If you applied the update, you should not experience any issues unless you expand or modify the size of a storage pool. If you do experience any issues with a storage pool, please reply to Iomega Technical Support using this incident number….

M-m-m-monster…

If you decide to buy it, you can throw me a few coins by buying the Iomega PX4-300d via my Amazon link or the 6-drive version, the PX6. And hey, it’s in stock for Prime members, so you could have it tomorrow – plenty of time to play with it over the holiday weekend. I’m not sayin’, I’m just sayin’.

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

73 Comments

Fine job reviewing this NAS box. However, I believe that you cheapen your work and your reputation by inserting unrelated sexual references. Do you do this to be funny, to attract web traffic or just because you’re bored?

Eddie – thanks, glad you liked the review! I use all kinds of oddball references here like my Lady Gaga references in the SQL Server 2008 R2 review and my poop opening line in my Ozar’s Hierarchy of Database Needs post. I find that by adding my personality, it does the exact opposite of cheapening my work. There’s plenty of places you can go to find boring, dry reviews with no personality. Those are a dime a dozen – cheap, in fact. Hope you can get past the humor, but if not, I understand.

I learned something from your answer, Brent.

Tobi – uh oh, dare I ask what it was? 😀

It helped me make myself be less rude when I get enraged.

Thanks for the response, and I’m glad you didn’t take my comments personally (I’ll try to do the same about yours). :^)

I honestly did not see any problems with the very veiled, non-technical content in Brent’s post. Adding that kind of stuff makes for a much more memorable read. Dull and humorless is no way to go through life…

Glenn – all of the problems you had were with the technical content, right? (rimshot)

I’m no prude, believe me. But while reading a NAS review, Brent’s reference to a giant schlong took me a little off guard.

Nice review, Brent.

I keep going back and forth w.r.t. which SAN to buy for home use. I have an old SuperMicro giant honking server, but it’s way too loud and uses too much power. I want something quieter and greener.

I’m almost ready to pull the trigger on a Microserver, run S2008R2 (or maybe even WHS 2011) on it, load it with drives, and be done with it. (loved my old HP ex475) This gives me the ability to run whatever other apps (SqueezeServer, etc.) I want, which I can’t do with most SANs. Or, if I can, it’s weirdly proprietary apps.

Looking at actual SANs, I hadn’t even considered the Iomega. I’ve been considering QNAP and Synology. (oh, I did consider Drobo, but that was quickly eliminated… yuck…)

I tend to use Synology, but I did appreciate seeing some of the pros and cons with this Iomega… It wasn’t even on my radar before this. I also was pleased to see someone else using the HP Micros as a simple vmware lab… sure wish I could get a CPU bump in them though.

Slightly off topic but related to testing VMWare installations, this session from vmWorld has a demo at the end (starting @ 45:50) about running nested vm’s. He’s got an 8 node ESXi cluster all running a bunch of vm’s inside a vm. Even has virtual san and router. All this on budget hardware with 8gb mem.

http://www.vmworld.com/docs/DOC-5146

(site requires a login, but registration is free)

Andry – yeah, I’ve seen setups like that. Allan Hirt has a pretty slick setup with multiple hosts and a SAN appliance on a small laptop, too. Pretty fun.

Hi Brent,

Nice posting 🙂

Do HP Microservers provide enough performance (CPU, memory) to run VMs on them?

I’m also thinking about buying a server for my Hyper-V VMs, but don’t know which one…

Thanks

-Klaus

Klaus – thanks! The HP Microservers are quiet, low-power, and work great with VMware ESXi 4.1. They don’t have a lot of power, but I’d rather have the quiet & low-electric-power attributes for my home setup. I’m running 2-3 Windows 2008 R2 VMs on ’em at any given time.

If you want to spend more, the HP ML110 G7 just came out. It’s not as quiet, uses more power, takes up more space, etc, but it’s on the VMware compatibility list too, and takes faster processors & more memory:

http://h10010.www1.hp.com/wwpc/us/en/sm/WF05a/15351-15351-241434-241646-3328424-5075942.html

Brent,

Thanks for the link.

I’m currently looking more into a 19″ rack mount – do you have experiences on this?

Thanks

-Klaus

Klaus – of course, that’s what I do for a living! 😀 But I’m hesitant to endorse any one server vendor publicly. Things change fast in the server world.

Brent,

I enjoyed the review, but now I am a little mad at you… I would love to have this sitting on my shelf at home. Oh well, my birthday is in October, so I will have to start dropping hints to the wife. Thanks for a wonderful review.

-Jeff

Jeff – ha! Well, don’t be too mad. This is what happens when you become a consultant and start approving your own expense reports. You should be mad at my manager for approving it. 😉

I don’t get the dig about having to reboot the server for config changes. These are not settings you should change often, if ever. Granted, on a Linux-based system like the StorCenters are (or seem to be), you ought not need to reboot for many changes at all, but it’s hardly worth a mention. If you have an admin who makes changes that require a reboot, and does so outside of planned downtime, you need a new admin.

The other things you brought up are notable, however.

Mike

Mike – I appreciate your enthusiasm for not making changes outside of planned downtime, but on most storage devices, these kinds of changes don’t require rebooting the entire system. EMC is pushing this as a higher-end system (as they demoed it at EMC World driving desktop virtualization environments) and this would be completely unacceptable in any desktop virtualization environment.

“The application user interfaces are designed by geeks, for geeks: they’re just good enough to be point-and-click usable, but not good enough that I’d want to walk family members through configuring or troubleshooting them.”

I do not imagine you will ever come across anything remotely related to computers that you will deem “good enough” that would motivate you to want to walk our family members through troubleshooting it. BTW, Dad is still having problems figuring out how to send a text message from his phone and will be calling you this evening.

HAHAHA, this really is my little sister, ladies and gentlemen. And she has a very valid point.

Em – yeah, I figured Dad must have borked something up with his phone. I texted him yesterday and he didn’t respond. I think I’m just gonna give them iPhones when I’m up there this weekend and be done with it.

Hi Brent,

“So I did what I do – I checked three boxes, chose RAID 10, and clicked Apply.”

It seems like EMC is using RAID 10E rather than pure RAID 10 in this box. Simply put 10 is for an even number of disks, and 10e is an implementation of 10 for an odd number of disks. So yes, you can do RAID 10(e) with 3 drives, but also 5,7,9… and it works pretty well ;o)

Sometimes the odds are good, some other the goods are odd…

Cyril

Cyril – RAID 10e requires an even number of drives. I think you’re thinking of RAID 1e, which uses an odd number:

http://en.wikipedia.org/wiki/Non-standard_RAID_levels#RAID_1E

Either way, it’s not RAID 10, and since the GUI said RAID 10, there’s a problem. I don’t even wanna guess at what it’s doing under the hood. (sigh)

Linux MD can do RAID 10 over an odd number of disks. It can do this because it’s not a real stacked implementation:

http://en.wikipedia.org/wiki/Non-standard_RAID_levels#Linux_MD_RAID_10

You still get a read/write boost and you do get redundancy (any drive can fail and you won’t lose data). Calling it anything *but* RAID10 would cause even more confusion.

Hubert – thanks for the note, but as you’ve noticed above, calling it RAID 10 causes confusion too. I *hope* this is what the PX4 is doing, but based on the rest of the stuff I’ve seen in the UI, I don’t have high hopes for that. At the very least, the Help button on the RAID levels should specify this.

Right, I meant 1e. The firmware might have forgotten the (e). Also I was thinking, what is a RAID 10 with 3 drives? Basically it is a RAID 10 with a dead drive. LOL

Nonetheless, good review ;o)

I just wish that it was RAID 10 with a hot spare

You can totally do that – just buy the Iomega PX6, which has 6 drive bays, and you can do a four-drive RAID 10 with a hotspare, plus a backup spare drive or a file share drive with no protection.

Brent, nice review, very thorough!

I wonder if it was actually creating a RAID 1E array from the 3 drives. It’s like RAID 10, but allows for an odd number of drives:

http://www.techrepublic.com/article/non-standard-raid-levels-primer-raid-1e/6181460

They shouldn’t be calling it RAID 10, but I’ve heard the two used interchangeably. We run a few RAID 1E arrays with 5 drives on Promise VTraks, but their UI refers to them appropriately.

Sorry for the redundant comment… somehow I missed the last exchange on RAID 1E. I must have had it up for a while before reading and forgot to refresh… rookie mistake!

Ha! No problem, done that myself recently. Have a good weekend!

Brent,

I have been looking heavily at this PX series but we haven’t purchased one for testing yet. Trying to find a “good enough” solution for small businesses that want to have a shared storage solution for VMware with VMotion. Have you tested and reviewed any others recently that are in this general price range that are doing a better job than the PX4/PX6?

I’m having a hard time finding anything in the $1000-$3000 price range.

If I step up to the $10K range, no problem. 🙂

However, that’s generally out of the price range that a small business wants to invest.

Marshall – no, unfortunately, I haven’t seen anything in that price range worth pursuing. If you want reliability – especially in a small business scenario where there’s usually no hands-on sysadmins – I would step up to the $10k range.

Hi Brent,

Great review on the StorCenter PX4-300d. I am new to NAS devices and don’t have a lot of experience with networking. I just want some type of media storage to serve a big house with lots of people and media streaming devices, and I have at least 3 to 4 TB of media to load starting out. I have been studying them for the last 3 months, and I went out and purchased a PX4-300d with two 2TB Hitachi drives a week ago. I was about to open it and install it until I read your article. I would like to ask you a few questions, please, Sir.

1. Do you think the shortcomings you mentioned in the PX4-300d can all be corrected with firmware later?

2. Do you think the PX4-300d’s performance is good enough to stream a blueray movie using a wired gigabit connection to a TV with no performance problems with multiple people using it at the same time?

3. I am more concerned about performance and less concerned with mirrored backups. My data can be backed up daily, or in any way that yields more speed. What type of setup would you suggest to accomplish this, or does this not make sense?

Thanks for your time.

Robert –

1. *Can* they be corrected with firmware later? Yep. *Will* they be? I have no idea.

2. When you say “stream a blueray movie,” the trick there is how you encode it. If you didn’t compress it at all, then first look at the bandwidth required to stream a 1080p movie. It’s going to depend on the movie and the scene, but at first glance, I think your bigger problem is going to be the hard drives. Two drives aren’t necessarily going to give you enough bandwidth to pull that off – however, buffering saves the day there. As long as your client app buffers at least a few seconds of video, you should be okay. However, I’d go with 4 drives right from the start. After all, you already spent $750-$1000 on this – what’s another $150 to guarantee the performance you want?

3. If you don’t care about the data, RAID 0 (striping) is the fastest. However, if you lose a single drive, your array goes completely down until you restore from backup. Do you really wanna hassle with that? I sure don’t.
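
For a rough sense of the numbers behind the streaming answer above, here is a back-of-the-envelope sketch in Python. Every figure in it is an assumption for illustration rather than a measurement of the PX4-300d: 40 Mbit/s per stream (roughly the top video bitrate of a Blu-ray disc), a nominal 1000 Mbit/s wired link, and a guessed 90% of that link being usable after protocol overhead. The function names are made up for this example.

```python
# Back-of-the-envelope streaming math. All constants are assumptions,
# not measurements of the PX4-300d or of any particular movie.
STREAM_MBPS = 40          # assumed bitrate of one stream, in Mbit/s
GIGABIT_LINK_MBPS = 1000  # nominal wired gigabit link, in Mbit/s
LINK_EFFICIENCY = 0.9     # assumed usable fraction after protocol overhead


def stream_mb_per_sec(stream_mbps):
    """Convert a stream bitrate in Mbit/s to megabytes per second."""
    return stream_mbps / 8


def max_streams(stream_mbps, link_mbps=GIGABIT_LINK_MBPS,
                efficiency=LINK_EFFICIENCY):
    """Count how many whole streams fit in the usable link bandwidth."""
    usable_mbps = link_mbps * efficiency
    return int(usable_mbps // stream_mbps)


def buffer_megabytes(seconds, stream_mbps):
    """Size of a client-side buffer holding `seconds` of video, in MB."""
    return seconds * stream_mbps / 8


if __name__ == "__main__":
    print("One stream needs", stream_mb_per_sec(STREAM_MBPS), "MB/s")
    print("Streams that fit on the link:", max_streams(STREAM_MBPS))
    print("A 5-second buffer is", buffer_megabytes(5, STREAM_MBPS), "MB")
```

Under these assumptions a single stream needs only about 5 MB/s, so the network link is unlikely to be the limit. The open question is how well the drives cope when several people read different files at once, which is where buffering and extra drives help.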

Great article. Even with the issues your thoughtful review made me purchase the px4-300d. So far it has been great. With the recent 9/16/11 software update the Time Machine and Lion compatibility issues have been resolved (apparently). Overall a great little machine, and a great review.

Yeah, only one small problem with the 9/16 firmware update.

It BRICKED my PX4-300d. Customer service was no help – “troubleshooting” was a matter of, “Uhh.. is the screen still blank? Okay, we’ll send you a warranty replacement.”

Not Happy. I agree with you 100% – I will absolutely NOT suggest this for any business purpose.. Heck, I have a hard time even considering this for home lab use at this point.

Hi Brent, can you tell me the size of the OS that Iomega uses in this Px?

Sorry for any misspellings, my native language is not English

David – no, sorry, I wouldn’t have the slightest idea. Why would it matter?

Looking into the StorCenter px4-300d to replace a file server that is on its last legs. I like the fact that you can replicate it and access it remotely. However, would this really be a server replacement? Their needs are simple:

Share 1 folder with a bunch of subfolders between 25 users.

Have outside workers be able to map a drive letter and access the contents of the share

I get the feeling that this might not be ready for primetime. I keep seeing words like “Bricked” and “Blue light” and “Firmware killed it”. So I am a little reluctant to purchase one.

If it’s going to cost more than $1500, I’m just going to go the server route. Any help is appreciated.

Al – when you say “have outside workers be able to map a drive letter,” that’s really, really dangerous over the web. There are very few places that allow SMB access over the web. Instead, I’d look into something like http://www.Dropbox.com, a file sharing service.

I am in the same boat as Al. There is a server that must be replaced. There are only eight users, and they will need it for shared files and an old VFP application. Looking to replace it with the px4-300d with two 128GB SSDs mirrored.

Do you know if the backup will handle copying an active .PST or .DBF file left open by a user? Before the Iomega device came up, the plan was to use a Windows 2008 server, which can use the shadow copy feature to back up open files.

Eight users with three or four active at a time should be fine, but do you think the device can handle 10 to 15 active users?

Tfar – I wouldn’t trust it to back up an open file. Generally that requires an agent on the end user computers. It’d be fine as a file server for 8-15 people though.

Thanks for the review. I was considering upgrading from the Iomega StorCenter ix4-200d but will hold off now.

Brent,

Thanks for the review. Noticed the link to issues with replacing factory installed hard disks near the top of the article is broken. Can you provide more details on the issues?

Thanks.

Chuck – yeah, you can’t easily replace the hard drives. You’re best off searching their support KB for info.

BTW, found out their “backup” software isn’t something that comes with the box. It’s free Iomega software that will actually work with any NAS. Was kind of disappointed with that. There is no reporting, so if you are backing up workstations, you will not know if a backup fails.

Anyone have any ideas on backing up MyDocs and Desktops on 40 Workstations?

Hello Brent,

I have been debating what kind of NAS to get for quite some time. Your findings are very helpful. Thank you. Two questions remain:

1) Am I able to check the health of disks, components, fan, and temperature with this device? (similar to RAIDar with Netgear)

2) Am I able to mix and match different kinds and sizes of hard drives? (as long as they’re on the OK list)

Also, it’s been a while since you last reviewed this product. Is there anything else out that you like better?

Thank you very much for your help!

Pat

Hi, Patrick. There’s some basic health checking built in, and you can mix drives. Unfortunately I don’t have anything to add to the review because I ended up Ebaying it. It just didn’t meet my needs – I kept getting frustrated with the problems I mention in the review.

Hi, I recently bought the StorCenter mainly thanks to your review. I configured it with three 4TB hard drives, and now I am trying to figure out how best to set up the drives for my needs. I have a RAID 5 configuration, so I can use up to 8TB of storage.

My aims are:

– to store all my docs and stuff (retrogaming, music, photos, documents, CD ISOs)

– to have a backup unit

– to be able to access any file I stored from anywhere in the world

– to share media at home (movies for the kids’ iPads, music …)

– to make some files available to friends

How do you suggest I configure it? The default setting has created some Shares volumes (documents, music, movies and so on …), but I don’t like it so much.

Any suggestion?

Many thanks

Subbu – I would follow the directions. Iomega’s got really good documentation for this.

OK, I will dig into the docs :). Thanks for the quick answer. Have you connected it to a UPS? Does the NAS not allow enabling the write cache without a UPS? Do you need a UPS with a data cable? How do you connect it to the NAS?

No, I didn’t connect a UPS to the NAS.
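
The capacity figures quoted in this thread and in the review (three 4TB drives in RAID 5 giving 8TB, four 3TB drives giving 9TB in RAID 5 or 6TB in RAID 10) follow from simple arithmetic, sketched below in Python. The function name `usable_tb` is hypothetical, and the sketch assumes identical drives and ignores filesystem overhead and the gap between marketing terabytes and what the NAS actually reports.

```python
def usable_tb(raid_level, drives, size_tb):
    """Rough usable capacity, in TB, of a pool of identical drives.

    Illustrative only: ignores filesystem overhead and any space the
    NAS reserves for itself.
    """
    if raid_level == 0:
        # Striping: every drive holds data, no redundancy.
        if drives < 2:
            raise ValueError("RAID 0 needs at least 2 drives")
        return drives * size_tb
    if raid_level == 1:
        # Mirroring: every drive holds the same data.
        if drives < 2:
            raise ValueError("RAID 1 needs at least 2 drives")
        return size_tb
    if raid_level == 5:
        # One drive's worth of space goes to parity.
        if drives < 3:
            raise ValueError("RAID 5 needs at least 3 drives")
        return (drives - 1) * size_tb
    if raid_level == 10:
        # Mirrored pairs, striped: half the drives hold copies.
        if drives < 4 or drives % 2 != 0:
            raise ValueError("RAID 10 needs an even number of drives, 4 or more")
        return (drives // 2) * size_tb
    raise ValueError("unsupported RAID level: %r" % raid_level)


if __name__ == "__main__":
    print("3 x 4TB, RAID 5: ", usable_tb(5, 3, 4), "TB")
    print("4 x 3TB, RAID 5: ", usable_tb(5, 4, 3), "TB")
    print("4 x 3TB, RAID 10:", usable_tb(10, 4, 3), "TB")
```

The trade-off is visible in the numbers: RAID 0 gives the most space and no protection, while RAID 5 and RAID 10 give up one drive or half the drives, respectively, in exchange for surviving a drive failure.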

Have you gotten to play with the hardware failure side of the system? I haven’t messed with it, but recently started to work through some what-ifs as I get more important data on my storage device.

I have the PX4 running RAID 5. Two drives crashing simultaneously is not very common, so I feel safe there, but I’m now thinking about an aspect that’s typically not thought about. What happens if the Iomega controller board crashes? Can I pull my 4 drives out of one unit and plug them into another PX4 and have them pick up where they left off, or is that a catastrophic failure? If it’s a catastrophic failure, it sounds to me as though a RAID is merely a false hope, as it doesn’t truly protect you, being that it only takes the controller card failing to kill you in any configuration outside a full mirror setup. Should I be doing some form of backup of my RAID configuration file from the PX4 so if the PX4 motherboard fails, I can restore the config file onto a new PX4, then add my drives and be back up and running?

Brad – I sold the Iomega after the problems I explained in the article. I’ve moved on to a Netgear unit.

What unit did you get as PX4 replacement? Do you still use it?

I switched to a Netgear ReadyNAS, and then moved on to a Drobo.

Brad, I own an older ix4-200d unit, and experienced exactly the problem you describe – system board failure. Thankfully it is still in warranty and I was able to get a replacement, but getting my data off the old drives was not as simple as swapping them into the new unit – that won’t work, at least not with the IX series, and I’m almost certain not with the PX series either.

Instead I had to plug the drives into a Windows box with SATA connections, then use the “iRecover” software from http://www.diydatarecovery.nl to recover my information (free to make sure it’ll work and see your information, but you must purchase in order to actually pull information off the drives). See here for use with Iomega StorCenters: http://www.diydatarecovery.nl/forum/index.php?topic=1309.0

I purchased it nearly 3 years ago now because it seemed like a good deal, cheap yet feature rich – but as Brent points out, even now the PX and IX series have their problems and ultimately are not a good solution for businesses. Brent went with Netgears, which we use at my own work, and while they too have their problems they are more business friendly and easier to recover from hardware failures.

I have an Iomega StorCenter px4-300d. I restarted it and it got stuck at 95%, and the web interface says the device is starting up. It’s stuck! Any advice?

Rod – you should contact Iomega support.

It could be an issue with one of your drives. When you take out the drive that is stopping the unit from loading, it will start. That worked for a PX6. Try removing only one drive at a time.

I bought one of the smaller (ARM-based) ix4-200d units a couple of years ago for virtualization and other techy things.

I was not (and still am not) happy with it. Based on the spec sheet it looks great, but it fails to deliver and performs really badly (worse than this one).

Despite all the complaints I could come up with, it was still at a price point I was willing to pay at the time, and I will continue to get use out of it for a couple more years. I would say it was the right choice, but I will just never get one again.

Can I deploy an Access database on Iomega storage as a shared file, and is this recommended?

Samir – we don’t do Access work here, so I’m not the best guy to ask for that.

I would like to use the Iomega StorCenter PX4-300d to provide shared iSCSI LUNs to a Veritas Cluster Server environment. I understand SCSI reservation is necessary, and the data sheets suggest that the device provides it. Have you any pointers/tips for a clustered environment with the device as shared storage?

I’d also like to do vMotion on it, again, any tips?

BTW – excellent writeup, and TY for responding to each of the posters’ queries. I rarely see responses by the author to ALL posters!!!! Wonderful work.

Kartik – thanks, glad you liked it! I no longer own the PX4-300d, though, so I don’t have good guidance on how to use it with Veritas.

px6-300d pool is there but not rebuilding completely, px6-300 keeps rebuilding…

Hello,

I have a px6-300d NAS with six 3TB drives, configured as RAID 5. A few days back it was showing the message “The amount of free space on your ‘Shares’ volume is below 5% of capacity,” and it asked to overwrite drive 6. So I ejected the drive after shutting down, put it back, and started the unit. It then started rebuilding. At 43% it got stuck and showed a red indicator, with a message that my storage pool had failed. The web interface showed the same storage pool failure along with the message above. I tried restarting and everything else, but nothing worked. After that I contacted customer care, and they told me that a few of my drives (3 or 4) had failed. They asked for a dump file. As I was on old firmware, the dump file was not being generated, so I upgraded the firmware as per their instructions. They concluded that a few of my hard drives had failed and told me to go to a data recovery solution provider. I really don’t understand how it could corrupt my storage pool without any notice or message. If it’s a NAS with RAID protection, my data should be protected. I really need my data back.

To this day I am still receiving messages like:

The amount of free space on your ‘Shares’ volume is below 5% of capacity.

Data protection is being reconstructed on Storage Pool gstv. Reconstruction is 8% complete.

Reconstruction gets to 45%, then restarts from 0.

If reconstruction is happening, how can my drives have failed?

Vishal – if your hard drives failed, you’re out of luck. This is what backups are for. You can try calling a hard drive repair company, but be prepared to spend thousands of dollars.

Hello Sir…..!!!

My Iomega StorCenter px4-300d (px4-300d-THZ8QS) is not backing up every PC. Some PCs are backing up and some are not. I need a solution urgently.

I just got one of these cheap, and it seems the memory can be upgraded to 4GB and you can add a very specific Broadcom dual 10Gb NIC. I’ll be trying these out and will report back.

Memory upgrade is kinda picky as a module that matched the exact specs of the original one didn’t work and then another one that was slightly different did work. I haven’t had any luck with 4GB modules yet even though I have seen that apparently some people did get 2x4GB modules to work for a total of 8GB.

In my research on the 10Gb NIC, it looks like the drivers won’t be there for it, as I didn’t see them in dmesg. However, I believe it will be possible to add 4 more regular gigabit ports via a NIC, so I will be attempting that instead of 10Gb.