I’m Not Gonna Waste Time Debunking Crap on LinkedIn.

LinkedIn is full of absolute trash these days. Just flat out bullshit garbage. (Oh yeah, that – this post should probably come with a language disclaimer, because this stuff makes me mad.)

People wanna look impressive without actually putting in the work to gain real knowledge. They’re asking ChatGPT to write viral “expertise” knowledge posts for them, and they’re publishing this slop without so much as testing it.

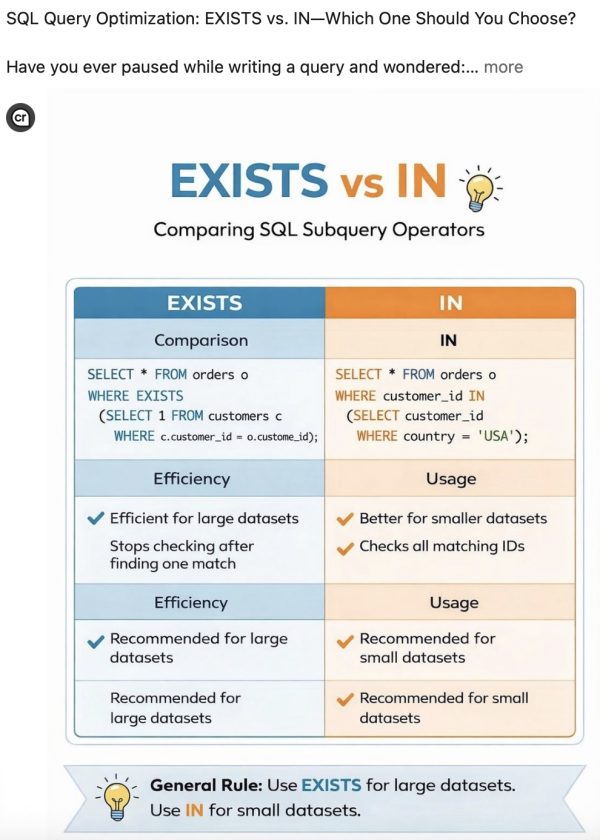

I’m going to share an example that popped up on my feed, something LinkedIn thought I would find valuable to read:

It’s pretty. It looks like it was written by an authoritative source.

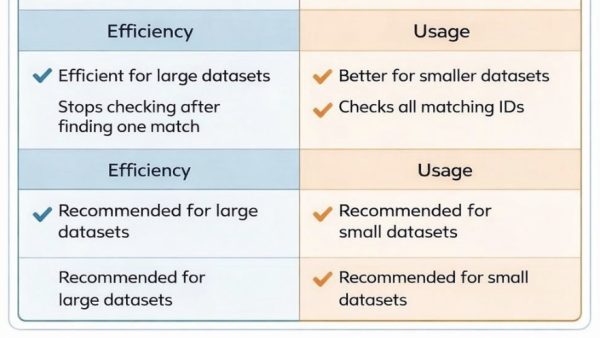

But if you drill just a little deeper, there are telltale giveaways that the author is a lazy asshole who wastes other people’s time. They didn’t bother to put the least bit of fact-checking in. I’m not even talking about the overall accuracy, mind you – let’s just look at the comparison table. On the left side, there are two sections marked “Efficiency”, and on the right side, two sections marked “Usage”:

That doesn’t make any sense. Then keep reading, and look at the bottom sections. On the left, they both say the same thing – but only one thing is checked:

Thankfully, the situation is much better on the right side, where, uh, both things are checked, so that’s also meaningless:

I hate this bullshit. I hate it. Haaaaate it. I work so hard to help debunk query myths and help you write better queries, and then some jerk-off like this slaps a prompt into ChatGPT, creates a pretty (but altogether full of crap) table, and it gets engagement on LinkedIn – thereby spreading misinformation all over again.

If you’re lucky, and the thought slop leader hasn’t tried to hide their source, at least LinkedIn puts a little “content credentials” icon at the top of AI-generated images. You can hover your mouse over it like this:

ChatGPT and Google Gemini are both labeling their images with hidden tags, helping sites like LinkedIn identify content that was AI-generated. However, ambitious authors can strip those tags out, trying to claim ownership of their content. (sigh) And they will, because they’re in a race to be the best slop leaders.

See, LinkedIn actually rewards bad content because commenters jump in to point out the inaccuracies, thereby making LinkedIn think the content was comment-worthy, and so it should be promoted to more viewers. Those viewers in turn don’t read the comments, and they just think the original post was merit-worthy – after all, it was recommended by LinkedIn – which spreads the misinformation further.

I love AI, and I use it every single day, but I hate the holy hell out of what’s happening right now.

So even though it drives me absolutely crazy to see this fake knowledge being passed off as truthful, I’m not gonna bother debunking it. These morons can create it faster than I can debunk it. I can’t even block these “authors” when I see them writing trash, because… they’re the very people who need to be reading my stuff! Sure, they’re slop leaders today, but tomorrow they may turn the corner and want to start actually learning SQL, and when they see the light, I wanna be there for them.

I’m just gonna keep offering you the best alternatives that I can: real-life, hands-on material that I’ve learned through decades of genuine hard work. Hopefully, you’ll continue to see my work as worthy, dear reader, and keep sharing the good stuff that you like, and keep investing in the training classes that I produce. Fingers crossed.

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

21 Comments

I share your pain, Brent. And I guess I should also stop debunking the nonsense, as difficult as it is for me to leave such dangerous misinformation unchallenged.

I wish I were involved in hiring. If I had that role now, any application from a candidate who advertises their lack of critical thinking on LinkedIn would instantly go in the bin.

Your use of the term “slop leaders” makes me think of the adage “don’t wrestle with pigs in the mud,” which you’ve clearly made the wise decision not to do. I know Jeff Modem has taken up the torch of dealing with this and other disingenuous nonsense, but the sheer amount is making it a waste of time, to your point. I also think your feelings are justified. It is bullshit.

Jeff Moden*

Hah, I didn’t spot the spelling error in the original comment. And now I wish his real last name WAS “Modem” 🙂

It’s just a bunch of screechy noise when Jeff Modem and Baud Ward talk about Azure DB.

What bothers me just as much is that there’s no evidence of the claims. Let’s see an execution plan and results.

We both know why there’s no evidence but that is why it’s an applicable standard from a source I have zero reason to trust.

The problem is that people trust AI, and it seems that it’s usually because they don’t know better. A while back I was given some PHP code and asked to install it on a WordPress site. It didn’t work. It was supposed to work in conjunction with a new plug-in that this person had installed, so I assumed they had gotten the code from a reliable source. I looked at the error log and discovered it was calling a function that WordPress couldn’t find. I did some Googling and was told that it was an undocumented WordPress function that was being taken advantage of. I thought maybe the function was out of scope at that point in time, and tried to figure out why. Then I finally asked the person that gave it to me where the code came from. ChatGPT. It had completely made up this function, and a Google search had actually backed it up. I had wasted an hour assuming the code should work. I ended up finding another way to get the functionality that had originally been requested, but I learned a valuable lesson. Not only should you never trust AI, but you should assume it played a part in everything unless/until proven otherwise.

This whole article made me giggle. Not just the ranting, which was refreshing and appropriate, but also the checking of the boxes and layout of the examples. Yes to small datasets. Yes again to small datasets. Clearly this post looked pretty and was laid out in a way that could be believed, but again, to your point – if you even look at it, clearly it’s garbage. I appreciate your comedic feedback and ongoing expertise in this changing landscape. You are a wonderful voice in an increasingly AI-based non-voice world. Your experience is a breath of fresh air.

two words: Thank You

It’s not just that the LinkedIn author is lazy enough to use ChatGPT to create content, but they’re too lazy to proofread that content in the simplest way. The number of authors who seem to actively refuse to do a quick final scan with their Mk1 Eyeball before posting something astounds me. It just makes them look stupid.

“slop leader” — I’m stealing this lol

No worries Brent. You’ve earned your credits. I’ll keep following you until either of us retires.

I feel your pain. You are 100% correct.

I got a 429 rate limit error message the first time I clicked on this post lol. On my second try it was successful.

Thanks as always Brent

Interesting how people can be convinced so easily to “believe” (sigh, where are the cynics). Yes, it’s hard to resist disputing stuff, but as you so aptly point out, it shows up faster than it can be corrected!

I get this type of stuff submitted to SQL Server Central and I’m constantly rejecting it. It’s made my life a bit more of a PIA.

I might be whacking some legitimate human-written stuff, but if it doesn’t look good early, I’m just getting rid of it.

I bet “AI machines” are now grouped together in a comedy club laughing at us humans.

OK, so I probably missed this – but where is a good source to get the real story to help me and devs code?

They also wrote “c.customer_id = o.custome_id”

Spelling errors grind my gears the most.

Thank you for saying what I’ve been thinking! It is infuriating to read that crap. My brain is correcting their crap at every step. But then I think, why would I bother actually responding to their crap. It will just boost their visibility and readership, allowing more people to read their crap. And they clearly aren’t going to take constructive criticism on not posting more crap in the future, or they wouldn’t have posted that crap in the first place. We just have to wade through all the crap, and choose carefully which crap-storms we really want to battle.

The IN query doesn’t even have a FROM part?