Most of the time, you’ll hear advice that you should select as few columns as possible so that SQL Server can do less work.

However, one of my clients hit a neat edge case scenario where SELECT * queries actually ran faster than picking a subset of columns.

I can’t share the exact query, but I can share a query that can at least illustrate the underlying concept.

If you’ve seen my “But It Worked in Development!” – 3 Hard SQL Server Performance Problems session from the 2017 PASS Summit, you may remember that SQL Server has some odd behavior when it comes to estimating how much data will come out of a query. Starting at around the nine minute mark, I run this query:

|

1 2 3 |

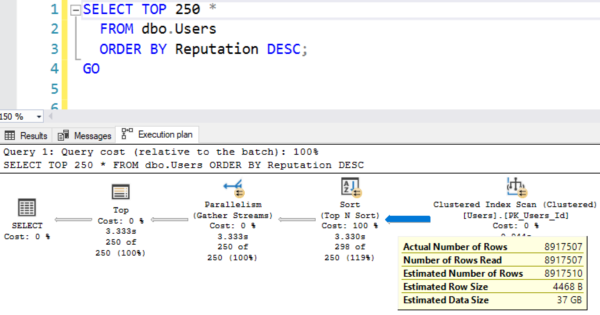

SELECT TOP 250 * FROM dbo.Users ORDER BY Reputation DESC; |

The Stack Overflow database doesn’t ship with an index on Reputation by default, so SQL Server needs to sort all of the users by reputation in order to find the top 250. The Sort operator in this plan is going to need a memory grant:

In order to calculate the memory grant needed by the sort, SQL Server looks at the amount of data that it thinks is coming out of the Users table. That guess, as I talk about in the PASS Summit session, is wildly incorrect because it’s based on the data type sizes, not the data that’s actually in the table. Because it thinks this table has 37GB (it really only has 1GB), SQL Server overestimates the memory grant:

SQL Server desires a memory grant of 49GB, but “only” grants 10GB because that’s the max that my SQL Server will allow due to its hardware size. The query only uses 1.5GB of memory – the sort has plenty of memory in order to do its job. Too much, in fact, but who’s counting?

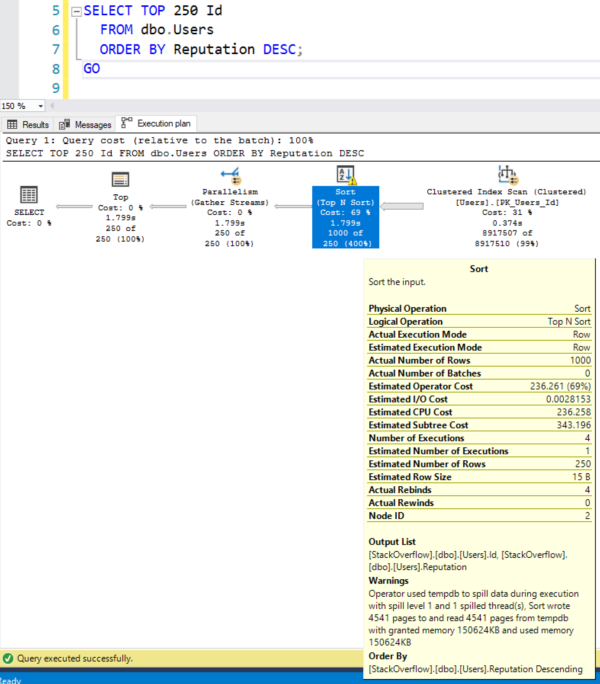

But if we change it to just SELECT Id…

Instead of using SELECT *, then now SQL Server is going to estimate that a much lower amount of data is coming out of the Users table, and now the sort spills to disk:

Because SQL Server underestimated the memory grant this time, only asking for 600MB of RAM.

In this query’s case, the spill doesn’t really matter – the SELECT Id is still faster. I’d say that’s true in 99.99% of cases out in the real world, too: you only want to select the specific columns you need. However, this is just a neat introduction to a complicated issue: trying to get memory grants to be high enough to handle your data, but not so high that they lead to RESOURCE_SEMAPHORE poison waits under concurrent load like I talk about in the PASS Summit session.

5 Comments. Leave new

Hi Brent, a little bit of a tangent, but…

I’ve not had a chance yet to really dig into Query Store and its capabilities, but from what I can see in the MS docs about Query Store’s DMV’s, I’d be able to dig into sys.query_store_plan to find plans with this sort of issue, right?

You’re right, that is a bit of a tangent. 😉

Which one we should use * or column name in select count query?

I believe you’ll see the same performance as long as the specified column is the leading column in an index.

Rinku – that’s kind of unrelated to this post, so head on over to https://dba.stackexchange.com and post it there.