Index Tuning Week: Fixing Nonaligned Indexes On Partitioned Tables

Unquam Oblite

This post will not change your life, but it will help me remember something.

When you decide to partition a table to take advantage of data management features, because IT IS NOT A PERFORMANCE FEATURE, or you have an existing partitioned table that takes advantage of data management features, because IT IS NOT A PERFORMANCE FEATURE, you may have or end up with nonclustered indexes that aren’t aligned with the partitioning scheme.

This is a bad thing because it will break those precious data management features. Of course, there are edge cases where you may want a nonaligned index, but at that point you’re probably just living with legacy partitioning that someone thought they’d use for performance even though IT IS NOT A PERFORMANCE FEATURE.

Options To Fix Things

The heading is a lie. There’s only one way to fix things, but I always forget it.

The tricky part is that you can’t use ALTER INDEX REBUILD... to do it. Your only hope is to use DROP EXISTING, or just create a new index and drop the old one.

Here’s an example. Let’s set up a table.

Transact-SQL

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 |

USE Crap; CREATE PARTITION FUNCTION pfunc ( INT ) AS RANGE RIGHT FOR VALUES ( 0, 1, 10, 100, 1000, 10000, 100000, 1000000, 10000000 ); CREATE PARTITION SCHEME pscheme AS PARTITION pfunc ALL TO ( [PRIMARY] ); CREATE TABLE dbo.ptable ( id INT PRIMARY KEY CLUSTERED ON pscheme(id), filler_bunny VARCHAR(5) ); INSERT dbo.ptable WITH(TABLOCK) ( id, filler_bunny ) SELECT x.n, 'DETH' FROM ( SELECT TOP (1000 * 1000) ROW_NUMBER() OVER (ORDER BY @@SPID) FROM sys.messages AS m CROSS JOIN sys.messages AS m2 ) x(n); |

The good news is that if you create an index with no direction, it will default to aligning with the partitioning scheme.

Transact-SQL

|

1 2 |

CREATE INDEX ix_fun_boy_one ON dbo.ptable(id); CREATE INDEX ix_fun_bunny_one ON dbo.ptable(filler_bunny); |

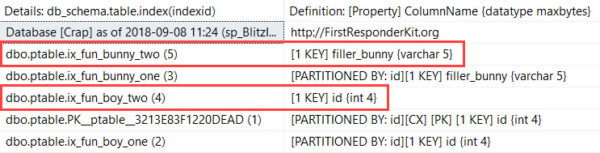

How do I know it’s so? Because sp_BlitzIndex tells me!

Transact-SQL

|

1 2 3 |

EXEC dbo.sp_BlitzIndex @DatabaseName = N'Crap', @SchemaName = N'dbo', @TableName = N'ptable'; |

But if you’re a silly goose — you know the type — the same kind of person who runs around creating nonclustered primary keys and then wondering why we call their tables heaps? You might create an index like this, or an index like this may be hanging around from before the table was partitioned.

Transact-SQL

|

1 2 |

CREATE INDEX ix_fun_boy_two ON dbo.ptable(id) ON [PRIMARY]; CREATE INDEX ix_fun_bunny_two ON dbo.ptable(filler_bunny) ON [PRIMARY]; |

Our new indexes are not partitioned! No good can come of this.

So Here’s The Part I Always Bungle

Index rebuilds are great for changing things about an index other than fragmentation. There are all sorts of lovely options.

Unfortunately, rebuilding on a partitioning scheme isn’t one of them.

Fun. Big fun.

Transact-SQL

|

1 2 |

CREATE INDEX ix_fun_boy_two ON dbo.ptable(id) WITH (DROP_EXISTING = ON) ON pscheme(id); CREATE INDEX ix_fun_bunny_two ON dbo.ptable(filler_bunny) WITH (DROP_EXISTING = ON) ON pscheme(id); |

Your other option would be to create indexes already aligned with the full syntax.

Transact-SQL

|

1 2 |

CREATE INDEX ix_fun_boy_three ON dbo.ptable(id) ON pscheme(id); CREATE INDEX ix_fun_bunny_three ON dbo.ptable(filler_bunny) ON pscheme(id); |

All Lined Up

I forget this a lot because I so rarely see clients using partitioning, at least in a meaningful way.

The conversation always goes something like:

- “Did you know this table is partitioned?”

- “No, wait, yeah, someone set that up years ago to fix [some problem].”

- “So you’re not swapping partitions in and out?”

- “Nope.”

- “Do you want to?”

- “Nope.”

- “Cool, moving along!”

In those cases, it’s not worth going through the trouble to align those indexes, especially is one of them is the clustered index. You may be looking at a maintenance window. If your database is part of log shipping, mirroring, or an availability group, processing large index changes can really raise hell.

Thanks for reading!

Related

Hi! I’m Brent Ozar.

I make Microsoft SQL Server go faster. I love teaching, travel, cars, and laughing. I’m based out of Las Vegas. He/him. I teach SQL Server training classes, or if you haven’t got time for the pain, I’m available for consulting too.

Get Free SQL Stuff

"*" indicates required fields

19 Comments. Leave new

I’ve seen this where a unique constraint is used to enforce business logic, but the unique constraint does not include the partitioning column.

@Erik: The MSDN does mention one scenario where database partitioning is a performance feature: Columnstore Databases. You can leverage database partitioning in order to guarantee that certain queries will experience segment elimination. See https://docs.microsoft.com/en-us/sql/relational-databases/indexes/columnstore-indexes-design-guidance?view=sql-server-2017#use-table-partitions-for-data-management-and-query-performance , aptly titled, “Use table partitions for data management and query performance.”

There are semi-supported ways to ensure segment elimination without table partitions, but table partitions work better. Of course, none of this is appropriate unless working with databases containing at least 1 million rows per partition. See also https://blogs.msdn.microsoft.com/sqlserverstorageengine/2016/10/04/columnstore-index-should-i-partition-my-columnstore-index/

Brian — is this something you’re using currently?

Yes, though I admit that the partitioning wasn’t actually necessary for performance; the data naturally arrives and is processed in an order conducive to segment elimination. The MSDN explicitly documents that table partitioning can be used to improve performance by ensuring segment elimination. I prefer documented, solutions to hacks relying on implementation details, even if those implementation details are semi-documented.

I’ll note that enough people rely on either natural data ordering or temporary indexes to ensure segment elimination that Microsoft is considering providing explicit support via https://feedback.azure.com/forums/908035-sql-server/suggestions/32896345-major-columnstore-performance-enhancement-alter-i . However, even this wouldn’t satisfy me; my preference was to have something that would be enforced as data arrived.

I’ll note that adding Columnstore databases was motivated by severe performance concerns (primarily with Disk usage). We spent a month or so of developer time investigating non-Microsoft columnstore databases. Then Microsoft started offering columnstore databases in SQL Standard. Shifting to columnstore databases ended up being a magic bullet. While there was probably a week or so of testing/experimentation, the actual solution itself was largely turnkey. Among other benefits, it dropped disk consumption by over 90%.

I’ll note that there are data management headaches 10+ years in my database’s future. Adding partitioning now will allow me (or my successor) to mitigate those headaches.

Very cool. You should totally blog about your experiences with it, when you need it, and when you don’t, when it hurts, etc.

I like reading MSDN blogs, but quite often they’re based on very small data sets (like Adventure Works, or Wide World Importers), and they don’t really show how people out in the real world use a feature.

Right now, there’s so few people blogging about this stuff it would be nice to have another voice out there (especially on Standard Edition, with its CS limitations on memory).

Microsoft has called partitioning for row store a performance feature in various places, so I’m always a bit cautious…

Thanks!

Any time someone suggests partitioning a row store as a performance feature, my default response is to apply the Socratic method:

1. Why do you think partitioning will improve performance?

1.No: That won’t improve performance at all because of X,Y,Z.

1.No2: Let’s test it with a toy example (performance is not improved)

2. Wow, that will improve performance a lot. It will also have [list of costs]. However, it’s still worth it. That said, let’s see if we can divide the data by [column used in partition function] without partitioning the table. Let’s just add:

2PCC. A persisted computed column instead. Here, I’ll convert your partition function into a giant case statement(*). Now, let’s add that column to the left of every index.

2RC. [whatever column the partition function is using] to the left of every index instead. We’re already using this column in the database, so adding it to our indexes won’t be hugely expensive.

3. I realize that step 2 will slow down anything which touches the database…but so would selecting the correct partition, so hopefully we’ll be fine. Now, we’ll just stick that column to the left of every index and we’re done.

Usually some variation on this series of questions either results in a viable alternative to partitioning or helps explain why partitioning is a bad idea. I’ll note that step 2 doesn’t work when discussing CCI, since CCIs don’t have keys.

(*)In practice, a case statement is overly general. More likely the computed column would be something simple like stripping the day from a date or rounding to the nearest 100.

Warning: Both proof by contradiction and the Socratic method come across as very condescending. If working with someone one-on-one, don’t use these methods as-is.

I’m with Brian. Use table partitioning because I receive data at fixed intervals so I made this the partitioning key, which means I can load a staging table and switch data into the final table very quickly. We use clustered columnstore and query individual intervals so we get a big benefit on partition elimination. The final table itself is also very deep (not so wide) so being able to incrementally update stats on individual partitions is also helpful.

TLDR; it’s a performance feature when you have a lot of data and are combining it with clustered columnstore.

David — usually, when I’ve seen partition elimination happen, it has put partitioned table query performance on par, but not really better than, an unpartitioned table with similar indexing.

I don’t disagree that it’s helpful with column store (for segment elimination, which you didn’t mention), but this post isn’t really about column store.

I’m also glad to see you use it for data management 🙂

Thanks!

@Erik: You repeated used “IT [partitioning] IS NOT A PERFORMANCE FEATURE” in all capital letters. So of course readers felt obligated to post about exceptions.

The same thing happened (unfairly: Brent was careful to describe exceptions in his post) when Brent told everyone to stop shrinking their databases ( https://www.brentozar.com/archive/2009/08/stop-shrinking-your-database-files-seriously-now/ ).

Brian — yep, and I’m being polite about it. I’m not even asking you guys to back up anything you’ve said about it improving performance 😉

“I’m not even asking you guys to back up anything you’ve said about it improving performance”

The reason we’re not backing up our claimed performance improvements is because there aren’t any performance improvements; it’s a loss. If done right (i.e., ensuring that each partition has at least 1 million rows and that each partition lines up with the types of queries you’re running), it’s a very small loss.

All else being equal, partitioning a columnstore table will make it slower, especially on older versions of SQL ( see http://www.nikoport.com/2017/04/05/columnstore-indexes-part-103-partitioning-2016-vs-partitioning-2014/ ),

Partitioning a database “properly” will STILL slow it down ( see http://www.nikoport.com/2017/01/06/columnstore-indexes-part-94-use-partitioning-wisely/ , specifically https://i0.wp.com/www.nikoport.com/wp-content/uploads/2017/01/Query-1-Execution-Times.png ).

Really, the only case where partitioning will improve performance of column-store is on a pathalogical case: The data is being loaded in an order that is completely independent of your partition function. This is pretty rare; the kinds of tables that need partitioning tend to partition on things like dates and identity columns.

On the bright side, a well-chosen partition function will only make performance slightly worse, and makes query performance a bit less dependence on data-load order. I don’t like relying on implementation details, even when the risk of being wrong is low and the cost of being wrong is “only” performance.

Brian — okay, cool, so my original point still stands? It’s not a performance feature, and the exception you’ve been pointing out is… not an exception?

I’m just trying to understand your point of view. This is why I suggested you blog about it, too. There’s probably a lot of nuance and detail that aren’t gonna come through in blog comments.

Thanks!

Congrats, you’re now the top Google hit for “Unquam Oblite”

Peter, I… I’ve always dreamed of this moment.

And here it is.

I came across this article while researching aligned indexes. It surprised me that there were a lot of comments about partitioning not being a performance related feature if you’re not swapping them in and out. Our experience has been quite the opposite within our ETL environment in the cloud (especially if you are using Azure SQL Server where you cannot change the default recovery model to simple or bulk-logged.

In our case, we get many files that need to be landed and merged (INSERT, UPDATE, and DELETE) into a master file table. If you want to support parallel BCP and parallel CRUD operations into the same table, partitioning is quite useful because it allows you to use partition level locking instead of table level locking which would cause other processes to WAIT. This GREATLY improves performance of BCP and merge operations in both the landing table and persistent master table.

If you have a simple or bulk-logged recovery model, you can get concurrent BCP operations with the BU lock, but in cases where you use Azure SQL Server, this is not supported so the only way to get parallel BCP operations is to use partitioning. For normal merge operations, regardless of the recovery model, using partitions to acquire a partition level lock is absolutely a great performance option. If you don’t then, each merge operation will wait due to the table level locking that typically occurs with these types of operations.

Another great performance benefit is that you can truncate a specific partition in the landing table so there is no logging instead of doing a delete statement.

Geekn – yeah, that’s because we focus on SQL Server here, not Azure SQL DB. They’re quite different products with different performance tuning requirements, as you’ve learned.

Microsoft Dynamics has non-aligned indexes on the (partitioned) AuditBase table and it’s a pain in the rear!

I inherited a table where each year of data has between 150 and 400 million rows. The table has two nvarchar(max) columns as well. The plan is to migrate the database to Azure SQL Hyperscale (not my idea).

We are negotiating with the customer to keep only last three years of data in the active database and archive the rest. Fortunately, the vast majority of queries include Unix date column as a greater than in the Where clause. The database is on AlwaysOn AG. At this point I cannot rebuild the clustered index online anymore.

The table must be partitioned. I decided to partition by year on Unix data column, leave the primary key not aligned nonclustered, and align all other indexes. All partitions will be on the PRIMARY FG because the database will soon migrate to Azure SQL. The new partition will be added during the year rollover process, and the oldest partition archived and dropped in the end.

I’d appreciate any input or comment. (I hereby give you the unconditional license to hurt my feelings.)

Sure, for personalized advice on production systems, click Consulting at the top of the screen.