There’s an important rule for tuning stored procedures that’s easy to forget: when you’re testing queries from procedures in SQL Server Management Studio, execute it as a stored procedure, a temporary stored procedure, or using literal values.

Don’t re-write the statemet you’re tuning as an individual TSQL statement using local variables!

Where it goes wrong

Stored procedures usually have multiple queries in them. When you’re tuning, you usually pick out the most problematic statement, maybe from the query cache), and tune that.

You don’t always want to run all the other queries. Maybe some of them insert, update, or delete records. Or maybe they just take too much time to run while you’re testing.

How Local variables cause trouble

Let’s say the first statement in this stored procedure is the #1 query on our server.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

IF OBJECT_ID('dbo.GetCities') IS NULL EXEC ('CREATE PROCEDURE dbo.GetCities AS RETURN 0') GO ALTER PROCEDURE dbo.GetCities @StateProvinceID int AS SELECT DISTINCT City FROM Person.Address WHERE StateProvinceID=@StateProvinceID; /* imagine lots of other statements here */ GO |

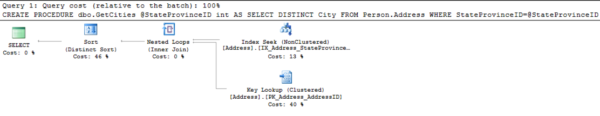

We’ve found the statement’s execution plan in the cache:

I right click on the plan and find from the bottom of the XML statement that it was compiled for the value @StateProvinceID=80.

I want an easy way to test it, so I quickly modify it to run as a single statement, using a local variable:

|

1 2 3 4 5 6 |

DECLARE @StateProvinceID int = 80 SELECT DISTINCT City FROM Person.Address WHERE StateProvinceID=@StateProvinceID; GO |

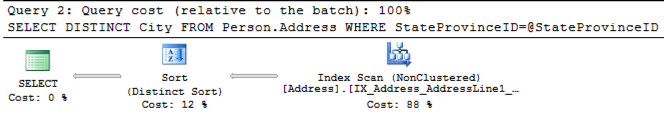

And I get a totally different execution plan! The original plan from the cache had nested loops, while now I’m seeing a nonclustered index scan:

This is confusing because I very carefully pulled the value it was compiled for from the original execution plan.

The problem is not the value I’m using for @StateProvinceID. The problem is not my statistics. The problem is local variables.

Local Variables are Weird

Local variables effectively “anonymize” the value being passed in on a parameter in SQL Server. It’s like you’re using Optimize for Unknown all the time.

That makes your local code behave differently than your stored procedure. It’s frustrating and confusing. It can lead you to create the wrong indexes, resort to index hints, or start using RECOMPILE hints everywhere.

Better Option: Temporary Stored Procedures

Here’s an easy alternative that not many people know about: Create a temporary stored procedure, like this:

|

1 2 3 4 5 6 7 8 9 10 |

CREATE PROCEDURE #GetCities @StateProvinceID int AS SELECT DISTINCT City FROM Person.Address WHERE StateProvinceID=@StateProvinceID; GO exec #GetCities @StateProvinceID=80; GO |

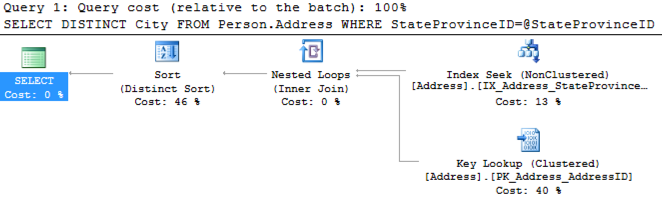

Hey, look, my nested loop plan is back, just like I found in the execution plan cache:

This is much like a temporary table: it’s scoped to my session. It lets me quickly and easily test and execute this statement in the context of a stored procedure without modifying my application database.

I prefer this option for testing because it helps me remain conscious that I need to test the procedure with multiple values of the parameter and make sure it stays fast with common values.

(Note: I’m not saying you should use temporary stored procedures in your production code. These are just useful for the tuning process when you don’t want to modify the original application code.)

Another Option: Literal Values

If I’m in a hurry and I just need to tune the procedure for one value, I’ll simply do something like this:

|

1 2 3 4 |

SELECT DISTINCT City FROM Person.Address WHERE StateProvinceID=80; GO |

By removing the parameter altogether I get a plan optimized for this specific StateProvinceID, and it’s my nested loop plan again:

Whenever I change the StateProvinceID value, I’ll get a different execution plan for each specific value.

Local Variables Sneak Up on You

Trust me: this is hard to remember out there in the real world. I’ve caught myself more than a few times rewriting a statement with a local variable, only to discover that I’m getting a different execution plan than I expected.

Whenever you’re rewriting a problem statement that’s run as part of a larger parameterized query, remember that temporary stored procedures can help your tuning process.

20 Comments. Leave new

Great example for using temporary procedures!

Just be sure that your SET settings are the same, your have proper schema references on all objects, the query text is copied verbatim (including case and white space) – otherwise you could still end up getting a different plan, and be wasting cycles optimizing a query plan that doesn’t happen in your *real* procedure.

Oooo, Aaron, that’s a great point!

Funny you should mention temp stored procedures.

I just started a new gig (SQL developer not DBA) where I don’t have SA on the servers but suspect they are not setup properly. I don’t have rights on master, so I modified all the spBlitz procs to be temp and run them as such.

Very cool!

Thank you. For some reason I forgot you could do a temporary proc, and this is a great way for me to do a code review better. This is especially true when you’re working on environments where you can’t trust a nonprod to really mimic prod and you need to run the read-only pieces against prod before giving your final approval.

I know many will cringe at that method, but sometimes it’s the only way to be sure when you’re in a situation to deal with dated nonprods several times smaller on horrible hardware (I lost a fight). For those of you unsure of this, please read over it carefully to make sure it’s read-only, wrap it in a transaction that always ends in a rollback, and still know that you’re adding risk.

As for sp_blitzindex, this is also a better solution than me editing it to be script instead of a proc.

I didn’t know you could create a temporary stored procedure.

I’ve learned something new today, thanks.

Just having a play around with this and I see that (just like the temporary table) it’s also possible to create a global temporary stored procedure too i.e. ##GetCities, which is then accessible from other sessions and which dissolves when the creating session is closed.

Haha, I didn’t know *that*! Wow!

Yup, and I’m now trying (and failing!) to think of a good usage scenario for this 🙂

I have one! Setting up a quick example of blocking to make sure monitoring works.

I have another.

Create a SQL Agent job which is scheduled to run once every hundred years, and have it create an entire schema of global temporary tables and global temporary stored procedures.

Use “WAITFOR DELAY 24:00” at the end of the job to leave it running for precisely 24 hours, during which time all other connections will be able to access the objects.

Then when the day is over, the job completes, the sessions closes, and the entire schema disappears into the mist.

http://www.imdb.com/title/tt0046807/?ref_=fn_al_tt_1

Ok, I grant you that your usage scenario is a little more practical Kendra, but still…

Hey Kendra, thought I’d check this out after it got a mention on “the list” today. Just wanna say great article.

Oh, thank you! That means a lot.

Thanks for recommending this post during the webcast today. I was able to more accurately simulate the performance of the stored procedure while troubleshooting. I also read through your Optimize for…Mediocre? post, which offered helpful suggestions for increasing the performance of the stored procedure. Thanks for sharing your knowledge and insights with us!

Is it useful if I just build a dynamic query indside the procedure and feed that at the end of the sp? What are the perils of doing this?

Example:

Begin

declare @qry

set @qry = ‘SELECT DISTINCT City

FROM Person.Address

WHERE StateProvinceID=’ + @StateProvinceID+”

exec (@qry)

End

In short the perils are more compilation (cpu use) and plan cache bloat (memory use). Jeremiah wrote a great post on why you might want to do something like this specifically to get SQL Server to use a filtered index, and he talks about some of the tradeoffs there: https://www.brentozar.com/archive/2013/11/filtered-indexes-and-dynamic-sql/

Is there a way to sniff out local variable at run time? I have a long running SP that updates rows based on local variables that store values from another table. I tried using Query Profiler and it shows only @nameofvvariable and not the value. I would like to know where exactly is the stored procedure progress and how many rows are left to go through. For example a loop that stops at @maxvalue and gets incremented @minvalue. While @min <=@max. I like to find out the @min and @max value.

This can be pretty painful. A lot of folks add specific logging code to the procedure so they can do this more easily.

After Searching the internet. I decided to use sp_trace_generateevent and custom event in Profiler. I can start and stop and not have SP return a value every time with a select statment.

http://weblogs.sqlteam.com/mladenp/archive/2008/10/16/Custom-user-configurable-SQL-Server-Profiler-events.aspx

Thank you, I’d never heard of temporary procedures. I sometimes see people modifying production sprocs temporarily and then forgetting to revert them back. This could be a good alternative to avoid that, in some scenarios.

[…] I link people to this post by Kendra and this post by Paul when I need to point them to information about what goes wrong with local […]