Email servers restrict how big your file attachments can be. I frequently ask my clients to send me trace files and diagnostic logs that are hundreds of megabytes (even compressed), and doing this over email just doesn’t work. In the old days, we set up FTP servers to move files around, but FTP uploads aren’t intuitive for most users.

Web services like Filedropper.com and drop.io sprouted up to help alleviate this pain. Users just go to those sites, upload the file, and they get a URL they can share with anyone to download the file. They’re easy and useful, but they reserve the best features – like big file sizes or privacy – for paying users.

Now you can host your own file-upload service on your web server and use Amazon S3 for the cloud back end. The whole thing is pretty simple:

Step 1 – get an Amazon S3 account. I’ve long been a fan of Amazon’s Simple Storage Service (S3), a cheap cloud file server. Bandwidth is free until November 1st, 2010, after which it’s just $0.15 per GB, and storage costs are $0.15 per GB.
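To get a feel for what those rates add up to, here is a rough back-of-the-envelope sketch in Python. It assumes the storage rate is billed per GB per month and that the bandwidth rate applies to every GB moved once the free period ends. The function name and the sample numbers are made up for illustration, and real S3 billing has more line items than this.

```python
# Rates quoted in the post; treating storage as a per-month charge is an assumption.
STORAGE_USD_PER_GB = 0.15
TRANSFER_USD_PER_GB = 0.15


def estimate_monthly_cost(stored_gb, transferred_gb):
    """Return a rough monthly bill in US dollars (hypothetical helper)."""
    storage = stored_gb * STORAGE_USD_PER_GB
    transfer = transferred_gb * TRANSFER_USD_PER_GB
    return storage + transfer


# Hypothetical month: 20 GB of client logs kept around, 30 GB transferred.
print(f"${estimate_monthly_cost(20, 30):.2f}")
```

Even with multi-gigabyte SQLDiag files, a month like this stays in the single digits of dollars.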

Step 2 – download the HTML files – Ricky Matata did the hard work of building a form with a Flash-based file upload component and hooking it up to Amazon S3.

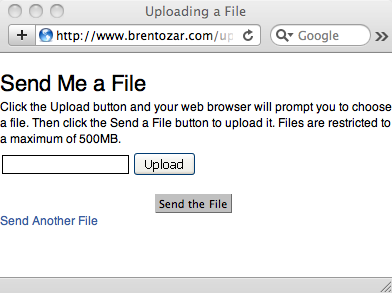

Step 3 – edit the parameters in the files – there are a few parameters for things like the maximum file size you’ll allow, the number of files you’ll allow, and your Amazon S3 credentials. If you wanna get fancy, you can also edit the form itself – I simplified mine so that it only shows a file upload and nothing else.

Step 4 – upload the files to your web site & S3 – and presto, you’re done. You can point your clients to the web page whenever they need to send you files. As users upload files, they’ll be available in your Amazon S3 account – you can download them via the web. Depending on how you set up your privacy on your S3 folders (aka buckets), the public can also download those files – handy if you want to share files with multiple people.
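If you do make a bucket publicly readable, the download link for each file follows a predictable pattern. The sketch below builds that link in S3's virtual-hosted style. The bucket and file names are hypothetical, and the URL only works if the object really is public; it isn't part of the upload form itself.

```python
from urllib.parse import quote


def public_s3_url(bucket, key):
    """Build the virtual-hosted-style URL for an object in a public bucket."""
    # quote() percent-encodes spaces and other unsafe characters but keeps "/".
    return f"https://{bucket}.s3.amazonaws.com/{quote(key)}"


# Hypothetical bucket and uploaded file name.
print(public_s3_url("example-client-uploads", "acme/ERRORLOG 2010.zip"))
```

That's the kind of link you could hand to several people at once when a file needs to be shared.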

Good coders might add things like email alerts so that they get an email whenever someone uploads a file for them, or perhaps automatic deletion of the uploaded files after X days. I’m not a good coder, and I’m quite happy with this setup. I just tell the clients to let me know after they’ve sent me the files I needed.
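For the curious, the "delete after X days" idea mostly boils down to comparing upload times against a cutoff. Here is a minimal sketch of just that selection step. It assumes you already have a listing of file names and upload times from somewhere. The function name and sample data are hypothetical, and it doesn't talk to S3 or delete anything.

```python
from datetime import datetime, timedelta, timezone


def expired_keys(objects, max_age_days, now=None):
    """Return the keys of uploads older than max_age_days, sorted by name.

    `objects` maps a file name to its timezone-aware upload time.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    return sorted(key for key, uploaded in objects.items() if uploaded < cutoff)


# Hypothetical listing of two client uploads.
today = datetime(2010, 9, 30, tzinfo=timezone.utc)
uploads = {
    "ERRORLOG.zip": datetime(2010, 9, 1, tzinfo=timezone.utc),
    "sqldiag.zip": datetime(2010, 9, 28, tzinfo=timezone.utc),
}
print(expired_keys(uploads, max_age_days=14, now=today))
```

Whatever this returns would then be handed to whichever tool you use to remove files from the bucket.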

13 Comments

this does the same thing but less fuss, no? http://www.airdropper.com/

Only for files under 100MB. I tried em already. 😉

That Windows XP style Upload button makes me a sad panda. The text isn’t even antialiased. 😛

Hahaha, yeah, that definitely doesn’t have the look/feel you’ve come to expect from the web powerhouse that is BrentOzar.com.

Very cool Brent. This is very easy to implement and a nice alternative to services like yousendit.

One thing to be careful with though, this script will overwrite any files that already exist on S3. So if one client sends you a file named ERRORLOG.zip and then another client sends you ERRORLOG.zip, your first file is gone. At a minimum I’d suggest creating a separate S3 bucket just for this uploader, and only sharing the link with people you trust.

Thanks! For me, that was a plus – I wanted clients to be able to upload their own files again and again without errors, replacing things each time.

Pretty cool, but as Andy said, why not use one of those web-based services you mentioned? I like Filesdirect, personally: 2GB uploads even on the free plan, 128-bit SSL encryption, plus customizable upload and download pages!

As I replied to Andy, they didn’t handle big enough uploads on the free plans. I have to shuffle databases and SQLDiag files around, and those easily go up into multiple gigs.

The html files from step 2 aren’t there anymore 🙁

Ouch, sorry! I’ll try to track down the author and see if I can get permission to post their files here.

Any updates on the files being posted here? The actual source is unreliable 🙁

Vikash – sorry, no. Your best bet is to contact the author directly rather than me. Thanks!

Nice one..

It’s really nice to use Amazon S3 and its services. I’d like to add something; if it isn’t relevant, please ignore it.

Amazon S3 supports uploading big files, up to 5TB each. You can use multipart upload, and you can also grant your users permission to upload data to your buckets as your requirements dictate.

My own tool will help you implement it.

Thanks and Regards

http://www.bucketexplorer.com/

Kirti