tl;dr – I do not recommend this book.

I was so incredibly excited when it was originally announced. A book published by VMware Press, written by prominent VMware, SQL, and storage consultants? GREAT! So much has changed in those topics over the last few years, and it’s high time we got official word on how to do a great job with this combination of technology. Everybody’s doin’ it and doin’ it and doin’ it well, so let’s get the best practices on paper.

When it arrived on my doorstep, I did the same thing I do with any new tech book: I sit down with a pad of post-it notes, I hit the table of contents, and I look for a section that covers something I know really well. I jump directly to that and I fact-check. If the authors do a great job on the things I know well, then I’ve got confidence they’re telling the truth about the things I don’t know well.

I’ll jump around through pages in the same order I picked ’em while reading:

Page 309: High Availability Options

Here’s the original. Take your time looking at it first, then click on it to see the technical problems:

OK, maybe it was bad luck on the first page. Let’s keep going.

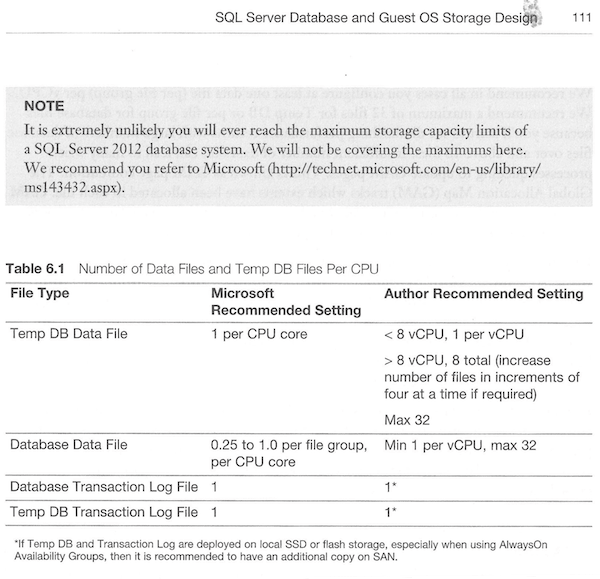

Page 111: Database File Design

The “Microsoft Recommended Settings” are based on a 2006 article about Microsoft SQL Server 2005. I pointed this out to the book’s authors, who responded that Microsoft’s page is “published guidance” that they still consider to be the best advice today about SQL Server performance. Interesting.

Even so, the #3 tip in that ancient Microsoft list is:

3. Try not to “over” optimize the design of the storage; simpler designs generally offer good performance and more flexibility.

The book is recommending the exact opposite – a minimum of one data file per core for every single database you virtualize. That’s incredibly dangerous: it means on a server with, say, 50 databases and 8 virtual CPUs, you’ll now have 400 data files to deal with, all of which will have their own empty space sitting around.

I asked the authors how this would work in servers with multiple databases, and they responded that I was “completely wrong.” They say in a virtual world, each mission critical database should have its own SQL Server instance.

That doesn’t match up with what I see in the field, but it may be completely true. (I’d be curious if any of our readers have similar experiences, getting management to spin up a new VM for each important database.)

So how are you supposed to configure all those files? Let’s turn to…

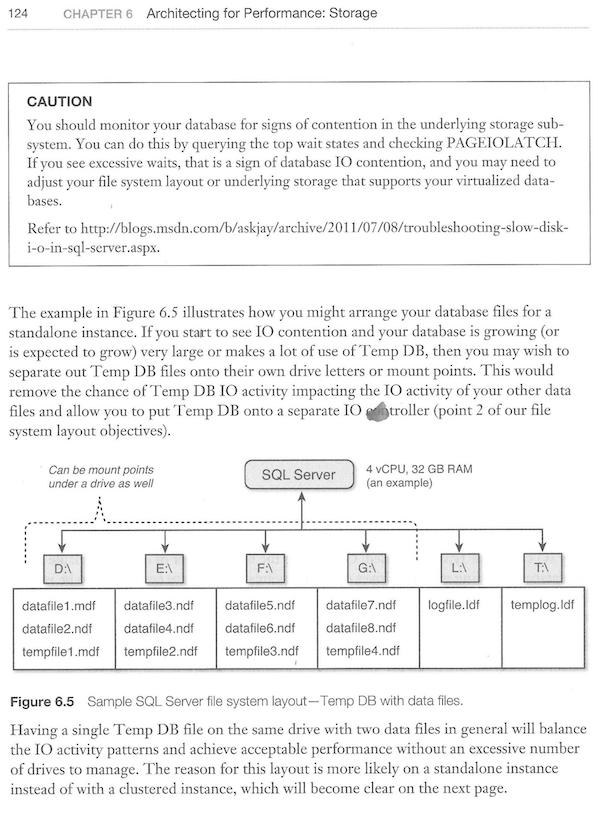

Page 124: Data File Layout on Storage

Imagine this setup for a server with dozens of databases. And imagine the work you’d have to do if you decide to add another 4 or 8 virtual processors – you’d have to add more LUNs, add files, rebalance all of the data by rebuilding your clustered indexes (possibly taking an outage in the process if you’re on SQL Server Standard Edition).

What’s the point of all this work? Let’s turn to…

Page 114: You Need Data Files for Parallelism

No, you don’t need more data files for parallelism. Paul Randal debunked that in 2007, and if anybody still believes it, make sure to read the full post including the comments. It’s simply not true.

I asked the authors about this, and they disagree with Paul Randal, Bob Dorr, Cindy Gross, and the other Microsoft employees who went on the record about what’s happening in the source code. The authors wrote:

You can’t say Microsoft debunked something when they still have published guidance about it…. If in fact if your assertions were accurate and as severe then we would have not had the success we’ve had in customer environments or the positive feedback we’ve had from Microsoft. I would suggest you research virtualization environments and how they are different before publishing your review.

(Ah, he’s got a point – I should probably start learning about SQL on VMware. I’ll start with this this guy’s 2009 blog posts – you go ahead and keep reading while I get my learn on. This could take me a while to read all these, plus get through his 6-hour video course on it.)

So why are the authors so focused on micromanaging IO throughput with dozens of files per database? Why do they see so many problems with storage reads? I mean, sure, I hear a lot of complaints about slow storage, but there’s an easy way to fix that. Let’s turn to page 19 for the answer:

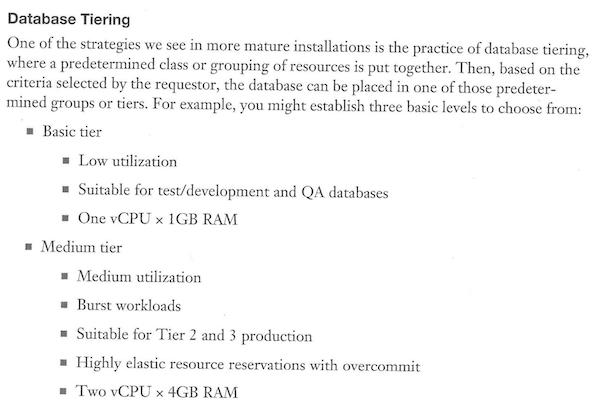

Page 19: How to Size Your Virtual Machines

Ah, I think I see the problem.

To make matters worse, they don’t even mention how licensing affects this. If you’re licensing SQL Server Standard Edition at the VM guest level, the smallest VM you can pay for is 4 vCPUs. Oops. You’ll be paying for vCPUs you’re not even using. (And if you’re licensing Enterprise at the host level, you pay for all cores, which means you’re stacking dozens of these tiny database servers on each host, and managing your storage throughput will be a nightmare.)

In fact, licensing doesn’t even merit a mention in the Table of Contents or the book’s index – ironic, given that it’s the very first thing you should consider during a virtual SQL Server implementation.

In Conclusion: Wait for the Second Edition

I’m going to stop here because you get the point. I gave up on the book after about fifty pages of chartjunk, outdated suggestions, and questionable metrics (proc cache hit ratio should be >95% for “busy” databases, and >70% for “slow” databases).

This is disappointing because the book is packed with information, and I bet a lot of it is really good.

But the parts I know well are not accurate, so I can’t trust the rest.

76 Comments. Leave new

>>They say in a virtual world, each mission critical database should its own SQL Server instance.

We definitely have more than 1 DB on a VM instance….

Yeah it’s hard to imagine one DB per instance. Separating the disks/mount points for each database on that critical instance so they don’t cause cascading outages… that sounds more useful.

Still, I didn’t know and don’t have a stance on any of the more technical issues above. That’s for the architects to worry about 🙂 I do sympathise with the struggle though. Just a few weeks ago I was in an argument where someone said, “If you cared about performance you wouldn’t be putting SQL Server in a VM”.

But we got interrupted by a phone call from 2005 asking for its DBA back.

In highly automated environments I have seen an instance per application or business unit, but one database per VM/SQL Server instance seems extremely excessive and unnecessarily complex.

I have watched some of Jeff and Michael’s presentations from VMWorld and thought that they were quite informative. Many of their recommendations from those presentations held up well in lab testing.

This book is on its way too me and I will still be reading it, but I am disappointed to be hear that these oversights and out dated recommendations made it into the book.

Kyle – agreed, I was really shocked by the book’s contents. The authors have great reputations in the Oracle and VMware community, and I was really looking forward to seeing what they produced for SQL Server.

Denis – yeah, that’s been my experience as well.

I used to work for one of the biggest public companies in the world and I actually saw it, I even configured, single SQL instances for only one mission critical database.

Complex? Yes. Expensive? Of course. But if it goes down, it won’t go down with something else. This is something that medium and small size companies can’t afford but for someone who has worked for billion dollar size companies out there, is a possible scenario. The exception for the rule was when the application required several databases to operate or when the application itself was not considered MC. And that was established based on how many millions in lost revenue an hour downtime could generate.

By the way, there was a lengthy and bureaucratic process behind that before granting the MC status and/or decide if it was going to run alone in its own SQL instance or not.

Very interesting review.

So I went to the Amazon site and noticed the book was just published on August 4th, but already has two 5-star reviews dated August 6th and 10th.

I’m a little skeptical that someone was able to read the book so quickly and give a proper review. That means the reviewers received the book ahead of time. Now that makes me skeptical of their objectivity.

Steven – yeah, my thoughts exactly. My Amazon review is pending moderation, should be up shortly.

Brent, let us know when your review is up on Amazon, so we can upvote it as a useful review (certainly in response to the 2 existing reviews).

Dan – thanks! Perfect timing, it just went live:

http://www.amazon.com/Virtualizing-SQL-Server-VMware-Technology/product-reviews/0321927753/ref=cm_cr_dp_see_all_btm?ie=UTF8&showViewpoints=1&sortBy=bySubmissionDateDescending

171 upvotes for your review on Amazon. The power of the Interwebz 🙂

The review is up.

Thanks Brent, you made my day, I laugh a lot when ii rread the book content. I will buy this book and read when i am free. Haha

Hamid – you’re welcome, glad you enjoyed it!

Interesting timing for this post seeing as how we are in the midst of planning our migration to virtual servers on VMware. You hit the nail on the head – if licensing wasn’t mentioned, that’s a huge oversight seeing as how that’s one of the most compelling reasons to virtualize SQL Server to begin with. I can’t fathom creating all those data files, and then “manually/proactively” monitoring their growth rates? Holy administrative nightmare batman, and not just for the DBA. Thanks for the link to Paul’s post also – that was very informative.

Let me see if I understand this correctly. I’m supposed to manually manage all these file locations and sizes in the name of optimizing parallelism, then give them crippling amounts of RAM (1GB for basic tier, 4GB for medium tier)? That’s pure insanity.

Doug – and if I was really a good consultant, I would promote this book because it would bring us in a whole lot more business. Folks would be so frustrated because they’d put so much work into micromanaging these files, and yet performance would still be awful.

But you would promote it under a Pseudonym, let’s say Orent Beezar, otherwise it would fall back on you

Interesting to see those view snippets of the book. We had a consultant come in a few months ago from the company who manages our infrastructure. He tried to tell us that we should align any of our virtual SQL Servers with the same recommendations outlined on page 111. He claimed that SQL Server will never use more then 1 CPU or 4-8 GB of ram regardless of the database load. Also if we aligned the virtual resources we would see performance increases. He nearly hit the floor when he say our virtual OLAP environment (2 guests each with 300GB of ram and 32 vCPU completely consuming a dedicated host).

Luckily he lost a lot of credibility quickly here and no one bought into his recommendations – at least when it came to our virtual SQL boxes.

Ian – wow, I’m stunned that a consultant would ever make a statement like that. Yowza. Although on the bright side, it means folks like us will always have work. (sigh)

Excellent article and thought provoking how many other books are misleading people, on that note what books would you recommend around this topic ( or others for that matter , obviously yours) if there are any?

Martin – thanks, glad you liked it. I actually don’t have a book I would recommend on virtualizing SQL Server with current versions of VMware, sadly, and that’s why I was so excited about the book.

…and we trust Brent more than we trust author.

If you won’t write your own books, would you kindly do a fine-tooth-combing of every-SQL-Server-book-ever-written, and write a detailed review? I’ll buy you a beer for your efforts.

Many thanks.

On the topic of SQL Server + VMware – yes, I trust Brent (and Paul Randal). I don’t know the author, but I do understand the points Brent brought up and they are very valid points. You will find many SQL Server experts agree on those very topics, not that Brent needs such confirmation from the DBA community.

Allen – thanks sir. You know, it’s funny, when I read something like this book, I really start to question my own experiences. Before publishing the review, I had several discussions with other community folks (and of course the authors) as a sanity check. When I read these types of guidelines (1vCPU, 1GB RAM) I really wonder if it’s me, or if the world has gotten success with that type of approach.

Justin – thanks sir. It’d better be a really big book.

We are in the process of deploying SQL Server 2012 EE on VMWare, I have been looking for good resources. Thanks for qualifying this book, won’t bother buying it.

Here’s to hoping Allan HIrt’s (@sqlha) new book covers visualization.

Oops. meant “virtualization”. Spell check got me again. 🙂

Yeah, it will, but from an HA perspective, not a performance management perspective.

Speaking of which… You wouldn’t happen to have an inside scoop on when that might be getting released, wouldja’? 😉

Jerrod – hahaha, no, but I pester him on a regular basis. Allan’s great. He’s working hard on it and I can’t wait to see the results.

Thanks for reviewing this book Brent. I was also interested in picking this up, but with so many issues on just those few pages I’ll wait. I pulled our VMware Admin over to look at your review too, we both got a good laugh at the notion of 1GB of RAM on a VM and having one VM Client per database.

Where I work we have a ‘large’ SQL Server cluster designed by a Microsoft consultant in order to group all the small boxes into a big cluster (3 years later still uses less than 20% of resources, what a waste).

The CPD failover design consists on a VM for each database and they want it to have the same amount of resources assured at VMware level, so that means we need to waste double amount of resources just in case.

Maybe we didn’t get the best consultant but this is with what we have to deal with in the every day

OTOH, we do have SQLServer VMs with 1vCPU and 1GB of RAM and they work as expected. Believe me that they have enough with that but also believe me, that even those being production DBs, the amount of work they do is minimal.

And from a VMware admin POV, it’s easier to start low and give more resources as needed. Unused vCPUs tend to produce ‘CPU Ready’ problems.

Urko – I’d be curious to hear what the end users thought about those VMs with 1vCPU and 1GB RAM, and hear about how you do DBCCs and backups on those VMs. Sounds like a great blog post or case study!

Great review Brent.

I found it funny-sad how one can find that many issues in a few pages.

We have a growing VM SQL deployment here and are trying to move to it as much as we can. When it comes to spec’ing out the boxes, it is always “interesting” when vendors want physical box requirements on a VM. it takes some work effort to trim them down to what we, the DBA’s, think is enough 🙂 In the beginning, when we had not much experience, we let some of these go that way. Talk about wasted resources in vCPU cycles! Thankfully we’ve gotten either rid or trimmed down as possible.

On the back flip, VM’s with 1vCPU 1GB RAM, has been a no-go for any we tried. As you mentioned in another post res[ponse, doing any “intensive” task, like DBCC, backups, or even RDP’ing to the box just kills it…

Oh cool, one CPU and gig of RAM. Can’t wait to play Myst on that.

Does this mean I can run an awesome production SQL Server on my Galaxy S5?

This is why I tug on my collar when my boss asks if I’ve read anything about using VMotion on a SQL cluster.

Seems like they were confusing SQL Server with MySQL? I do have some (xsmall) MySQL VM’s with 1 vCPU and 4gb RAM. And because the licenses are so much cheaper, and we’re SOA, the MySQL is mostly one service db per instance. That is certainly not the way any of my SQL Server instances are set up.

Nice writeup and informative as always Brent.

John

I agree that virtualizing SQL does mean that you need to size VMs appropriately which is different to physical servers. I am actually a VCP5-DCV and I can understand (understand their point only I would never recommend this) why they would say put one database on each VM running SQL, as I am sure I heard that many years ago.

VMware do sell their software expecting you to use their HA features and pretty much tell you to forget Microsoft Clustering (I have done most of the VMware sales and technical sales training) etc, however I have never seen these recommendations in the field.

Licensing costs would be a nightmare let alone having to patch every single instance of SQL server for every application you are running on your virtualized environment. Good for only having a single application become unavailable should a sql instance reboot itself (or some other outage) leaving all your other applications available, but a massive NIGHTMARE should you need to patch windows and SQL on those VMs (hint you WILL need to patch them).

Currently 124 people have said your review has helped although I would imagine it hasn’t helped sales which is a shame as there is very little available elsewhere. It does however leave me without a decent book on virtualizing SQL server which is annoying and highly sought after. At this rate I might have to do some research and write one myself and I much prefer writing code. 🙂

Charles – thanks for stopping by. Yep, the licensing and patching would be pretty tricky, especially if you’re using any kind of third party monitoring or backup or management software for all these VMs.

Thanks for continuing to expose this crap. Our profession needs better empirical standards.

James – you’re welcome, glad I could help!

MEM/CPU recommendations: Actually our vmware guy said that VM’s usually have overallocated vcpu’s (can be checked via operations manager if needed) and they might actually benefit on having just one vcpu’s instead of many. It was something to do with clock rating and how stuff are scheduled in hosts. I wonder if this is explained in the book in the parts you did not read.. :/ . I think vmware does alot of memory operations behind the scene (compression etc), I don’t know if that has anything to do with recommendations made in the book, but memory recommendations seem odd..Haven’t seen these kind of values in any production machine.

RPO/RTO values: I don’t understand these, RPO time:depends on the DB settings and that has to be discussed with customer what they need. ..RTO: in failover cluster I would say ~20s to failover and then you have to add the time application takes time to recover. Never watched/experienced this is in client side so i cannot confirm the exact time needed. IF you had MSSQL2014 AND CSV usage (not mentioned in the charts tho’), I think that would save up time. But these are not the numbers I would tell my customers because they must be able to give time to users when to log back to application and boy if we give wildly optimistic numbers > those users that try to log in applications that are not available when you said >they would WILL call you 🙂

Anyways, this was a book that I was thinking of buying but if you have to second guess everything you read, I think I will wait for another book to come out. Maybe you should write your book on the matter 🙂

Jaana – the 1vCPU recommendation is “mansplained” in the parts I did read, but it completely ignores basic SQL Server tasks like DBCCs, backups, and index rebuilds. In a world where we don’t have to maintain databases, sure, 1vCPU can work. Back here in reality…

RPO and RTO *goals* depend on what the customer needs. RPO and RTO *deliverables* depend on how you set up the technology. The chart on page 309 says that RPO is different between SQL 2008R2 failover clusters and SQL 2012 failover clusters, and this is simply and demonstrably not true. It’s not even debatable – it’s just flat out wrong.

Jaana – I agree with your VMware guy as most VMs are actually over provisioned as there is a difference between what a system needs as a VM compared to a physical box. I imagine that Operations Manager can help resolve that issue of over provisioning but have not yet used it to see how it allocates resources to VMs running SQL Server. The main reason that vCPUs are over provisioned is due to how VMware schedules the physical CPU resources (as well as how people still perceive a core to be in the physical world). I believe that each vCPU is actually a representation of time, of the physical processor, so giving a VM more vCPUs might sound like it will give it more time to run (and it does per se) but those slices have to all be allocated together so your VM may actually find that it has to wait longer for 2 slices to become available at once, rather than just waiting for one slice which the scheduler could allocate much faster and much easier. If 4 vCPUs are allocated the scheduler will have to wait for 4 time slices to become available before it can give them the access it needs to the physical processors causing an even longer wait time before it gets to do some processing causing the VM to appear like it is working much slower. Depending on the edition of SQL will also determine how many vCPU cores it can use too (its sees physical and virtual cores in the same way), which have been slowly increasing over time. I believe enabling hyper threading on the physical CPU can also present some issues but I’m happy to be corrected on any of the above.

Charles

Charles – that’s called co-scheduling, and it’s been constantly reduced since vSphere 4.1. The 4.1 whitepaper was pretty good, and the 5.1 whitepaper is even better. Time to get your learn on:

http://www.vmware.com/resources/techresources/10345

This is also covered in my Virtualization, Storage, and Hardware for SQL Server DBAs training: https://www.brentozar.com/training-videos-online/virtualization-sans-and-hardware-for-sql-server/

Brent – cheers for the link its a bank holiday here so needed some more stuff to read 🙂

thanks

Brent,

I can’t say I am surprised. I worked for five years as a DBA for a federated cluster of SQL Servers/databases that used replication to supported a global shipping application for tracking shipping containers.

Due to issues with the “Experts” from VMware recommendations they could never migrate any of these SQL servers to a VMware host.

At one point we had to ask for another support tech because the one we were working with said it was a waste of time and resources to place the tempdb, log files, and data files on different vhd’s and different san lun sets. He swore that the SQL server process could not read/write data fast enough for this to make a difference.

IMHO: VMware has some very bad information the collected during unique and custom SQL 2000 and 2005 early adopter implementations and mixed it with some poor responses from the support available at that time. It is embarrassing that they refuse to listen to any rhyme or reason that comes from current published fact and implementations.

Hi Brent, would you recommend any books about virtualizing SQL Server with Hyper V 2012? I know everyone gets very excited about VMWare, but we just dont have the funds for it. We are just about to start virtualizing our SQL Server 2005 Std Edition, and I dont see many books written about this using HyperV, mainly VMWare. Or is it because VMWare is so much better than HyperV that its not worth it?

Cheers

Naz – it’s just due to market share. If you’re a book author, you don’t really make money on books – you do it to gain consulting revenue. If most folks are using VMware, then that’s what you want to write for. For Microsoft Hyper-V books, you’ll want to look at Microsoft Press (who publishes books for a different reason.) Enjoy!

Thank you for this article!

I had a chat with a colleague for over ridiculous things in the book.

You’ve just confirmed our view.

I will read it with caution

I’m currently reading the book, and while it’s disappointing to read your review, I believe it can still be a useful tool–as long as one is aware of its shortcomings. The book, like any other source of information, should be vetted for accuracy and completeness against other sources. To be effective, our toolkit should always include a variety of tools and information sources (if you only have a hammer, everything looks like a nail.) Each tool should be carefully scrutinized to identify strengths and weaknesses, and we should never rely exclusively on the judgment of others to determine the value of a tool. Even the best tool is only as good as the person wielding it–a master carpenter with an old crappy hammer is still going to build better furniture than the first-time homebuyer who bought the “best” hammer at the home improvement store?

I would also like to respond to some of the comments posted regarding the impracticality of managing an environment where each application or database had its own SQL instance. This really is dependent on a variety of factors–what “problem” you’re trying to solve with virtualization, how much preparation and planning is put into architecting the VMware environment, the design of your database, etc. This is my opinion, but it’s an informed opinion based on my own experience running a completely virtualized SQL 2008 EE distributed database environment for the past 4 years.

Most organizations follow a common path to virtualization–dipping their toes into the water and virtualizing the least critical applications first, with server consolidation and cost savings the primary business drivers. We took a slightly different route to virtualization–we dove head-first off a cliff into the ocean! We virtualized the most complex, performance intensive workload we had—the database tier of our web-based SaaS platform—our most valuable technology asset!

The primary drivers in our case were almost completely opposite of everyone else’s. We virtualized for performance and scalability—we didn’t care about consolidation or cost savings (okay–the CFO and finance guys cared about the money –they were in the middle of an IPO after all!) Virtualizing the database tier and switching to a distributed architecture allowed us to scale vertically and horizontally, rapidly and efficiently, and keep pace with the business’ rapid growth. No more slow, painful migrations to new physical hardware to increase capacity or performance!

Virtualization allowed us to easily upgrade the underlying hardware, and increase the total pool of available resources without having to take the platform off-line. It allowed us to allocate additional vRAM, vCPU, or storage resources on the fly (This does require a reboot of the VM, but a virtual reboot is MUCH faster than a physical server reboot!) Admittedly, cost was NOT the primary consideration –the finance guys did set limits—we couldn’t spend money like drunken sailors in Bangkok (as guidelines go, that left a lot of room for interpretation—they really should have been more precise.)

Each VM contains a single SQL 2008 EE instance containing a pair of user databases–one of which is an “archive” database containing data written once and then rarely ever accessed again. Each vm is configured with 8 or 12 vCPU (depending on the load on that particular server), 56 GB RAM (48 GB allocated to SQL–being doubled to 96 GB next week.) There is a 1:1 ratio of virtual to physical memory and CPU resources (using memory reservations, etc.) The SQL VMs sit on top of VMWare ESXi HA clusters, and policies are configured so that there are always sufficient unallocated physical resources available on the physical hosts within a cluster to maintain the 1:1 ratio of physical to virtual resources if a VM fail-over occurs (Each physical host can support at least 2 of the SQL VMs without oversubscribing memory or CPU.)

The disk space for the VMs all resides on an EMC SAN with tons of SSD & FibreChannel drives and multiple I/0 paths. O/S files & application binaries, data files, transaction logs, tempdb, and pagefile are all segregated on different LUNs. The physical ESXi hosts are all connected to a fully redundant 10 GB network with dual NICs, and separate VLANS segregate data, VMware management, and vMotion traffic Four years later, and the original 16 SQL Server VMs have grown to 22. We have also completely virtualized our web and application tiers, Exchange servers, and most of our utility and other servers. There are almost no physical Windows servers left in the environment.

We’re currently in the planning stages of the next iteration of the architecture—SQL 2014, with 256 GB of RAM per SQL instance, Cisco UCS with the new, faster processors for the ESXi host servers, and upgrading to Cisco 7000 series switches in order to extend the virtualization to the network layer, and eventually make our D/R site the second node in an active/active geographically load-balanced extend our HA configuration to the site level. Exciting times?

Tony – well good news: if you like buying books to fact-check them, then I can see why you like this book. You’ll have a great time with it. Enjoy!

Brent, I’ve truly enjoyed this review. An additional small item to consider under the section “Data File Layout on Storage” for the TempDB file location selection during a SAN-to-SAN replication. Normally, the granularity for replication is on a per-LUN basis. Having the TempDB on its separate LUN allows the SAN admin to ignore it during replication. Decreasing replication bandwidth utilization in an environment where multiple virtual SQL server are spawned on a per application-database basis. Besides, as the enterprise grows, it is nice to storage-vmotion TempDB LUNs into their own flash/SSD RAID groups with ease (isolating database file IOs on their own RAID groups instead of sharing the same storage pool with every other server).

I had a chance to read the entire book. While their are minor flaws I think it’s a pretty good book. A dba should never take every recommendation in a book.

Robert – great, thanks for your comment. I would disagree about the word “minor” obviously, given the things I covered in this review alone, but I found a lot more challenges than that. Just gotta stop and cut my losses after finding dozens of errors.

Sad, I was looking forward to that book as well. Having recently had my first child (ok I didn’t my wife did) I have realised one thing. SQL environments are like babies. They are all unique, they all have their own specific requirements and respond to problems in different ways. There are general rules for looking after them up (do this but DON”T do that) and if you add a little bit of “thinking outside the box” you can generally solve most problems. If not call Brent…

Hi Brent,

I’ve been working with SQL Server since 15+ years and I agree with your review.

I am new to VMware, have you any suggestion on a good book to start with ?

Thanks

A.

Alberto – unfortunately, I haven’t seen a SQL Server virtualization book I can recommend. Rather than looking for that specific niche, I’d take a step back – what are the problems you’re trying to solve?

Hi Brent, thanks for your reply. What I’m trying to understand is basically this:

– customer with VMware infrastructure (vSphere, mMotion)

– a mirroring session (sql2L14) with no automatic failover is in place

– i am planning to add witness and suggest application changes necessary to support automatic failover

– Alwayson AG would be another option, but since customer no need to redirect read-only queries or backups I don’t think it’s worth it (= cluster between VMs.. brrrrr)

So… I was looking for a book to better understand interactions, tradeoffs between SQL-native solutions and VMware-native solutions.

Am i on the right way ?

Alberto – that’s not really a VMware question. The solutions you’re choosing between would be the same whether they’re on bare metal physical or in a virtual environment. Because of that, I’d start with Books Online (the manual) and read about the differences between the high availability and disaster recovery mechanisms you’re considering.

Hey Brent – I see David Klee write a 5 star review! I’ve taken a few of his classes at SQL Saturdays and he is excellent. Why do you think you two are so far apart on opinions of this book?

Ernie – David believes that as long as you know which parts of the book are correct and which ones aren’t, then it’s a great book.

I believe that people buy books to LEARN correct things. After all, if you already knew this stuff, why would you buy the book?

Brent,

With all respect, I think that your review is harsh and far from accurate. You rated it 1 star, which to me (I think I mentioned it before in Amazon) is for a book that is just plain garbage. This book is not garbage, at least not to me.

My review, if I recall well, was the 1st one and one with highest rating. I do agree that the book is not perfect (no book is perfect either) but there is good material in the book too. And I read all the book already by the way so I can assure you that.

Now, if you are expecting a 100% accurate paper book with no errors, I think that your expectations are too high. Even when buying MS cert books I know that I need to do my homework and validate the content because errors will be there anyway.

You, David and Denny are my favorite SQL/VMware experts but unfortunately I may have to agree on disagree regarding the rating on this book.

Now, I hope you will not censor my comment now 🙂 … I am just expressing my opinion.

Ernie-

Some of the people that agreed with Brent don’t even bought it. They simply went by Brent’s reputation (which I kind understand) or read excepts of the book and stayed away from buying due the 1 star rating, which again, to me is equal to a garbage book.

Jose – we don’t censor comments for opinions here. All opinions are welcome, yours included. Diversity of opinion makes the world a better place.

I don’t disagree that there is good material in the book. However, the book is simply flat out bad for people who don’t go in with a very high level of knowledge about VMware and SQL Server. For example, there’s not even a single mention of licensing, and if you follow the 1-vCPU recommendations without considering licensing first, you’re flushing money down the drain.

I stand wholeheartedly by the errors I pointed out above. Note that David Klee has also said he’s working with the authors to bring out a revised version of the book, and he’s helping them correct the errors. I look forward to that book – but it’s a shame this first version ever had to see the light of day.

And I stand wholeheartedly on my opinion too 🙂

But don’t get me wrong, I am not saying that the book does not have errors. It’s just that a 1 star rating seems to be too harsh. In fact, when you see the rating distribution, you can count 15% of votes as 1 star reviews. There are no 2 or 3 star reviews at the time of my post. Only two of the 15%, you and other user, rated it at 1 star.

So most people agree, at least in terms of rating, that this is a 4 star or higher book. While only 15% agree that is a 1 star book, you being one of them. That tells me, at least in terms of rating, that majority of people disagree with your 1 star rating even though they also accept there are some errors too. Don’t you find that interesting? Why so many users rated it so high then?

Jose – take a closer look at the number of people who marked my review as helpful, versus the rest of the reviews. It would appear that you, not me, are in the wrong. 😉

Brent,

I do believe that most people agreed with your opinion based solely on your name and reputation, and not on actual reading. This is something I can only express as an opinion though, I can’t confirm it as a fact.

However, it is still interesting that over of 200 people, as it seems that agreed with your 1 star review, not even half posted or created their own review. This can be due laziness or just because they simply did not buy the book and never read it. I personally believe is the 2nd.

Jose – I will assume that that’s a compliment about my name and reputation. Thanks for stopping by and chatting!

Although I’m desperate for this information, I’m going to wait for the 2nd edition. I don’t have the knowledge to know what is useful and what is not (hence the reason for needing the book!). At least I know the update will have additional eyes on it!

Jose – they are agreeing that is review is helpful, not agreeing that the book isn’t worth reading. I rarely, if ever buy a technology book without reading reviews (mostly on Amazon of course), skimming the table of contents and thumbing through a few pages. Brent did that for us in one post, so he certainly saved me some time and would get a +1 from me as well – not because he is Brent, but because the content he provided was useful. The thing that differs technology from “other” books is that there are scores of books on each topic so good reviews are priceless.

Hi Brent!

I just came across your book review, I JUST got a signed copy from Michael yesterday at the BC UserCon VMUG held in Vancouver yesterday.

I’m incredibly disappointed now that I came across your Amazon Review; you’re right, people buy these books to learn and I’m not a SQL guy, I do Virtualization and I was hoping this book would point me down the right path for being able to talk intelligently to SQL Admins and Stake Holders so we could rid them of the last bits of physical infrastructure they have around.

I’ll read the book, but I’ll have no idea which SQL related bits are important or outright wrong!

Where can I go from here to pick up some good foundation knowledge?

I know there isnt any other equivalent books, but what can I do to be more knowledgeable about databases and their operation, as well as best practices?

Thanks!

—

Jeremy Carr

Jeremy – sorry to be the bearer of bad news there.

What pain points are you trying to learn to solve, and that’ll help guide my recommendations?

I have nothing specific at the moment, but from time to time we come across potential business in virtualizing some critical workload.

In the majority of SQL or application virtualization, its dead easy and doing like for like parameters works just fine, we can then right size vCPU or memory and for the majority of customers everything is perfect.

Now and then we come across a customer who tried virtualizing their workloads and the performance isn’t there, they can do like for like, or over provision, etc and the synthetic tests or dev tests on essentially empty hosts leaves a lot to be desired.

I was hoping this book would say: Do 123, ABZ, or XYZ and you’re good and this is how you can vet it and work with the SQL DBAs for tuning.

Whenever we come across database servers we pull in our DBA consultants, but our DBA consultants don’t know virtualization usually and we don’t know databases — someone needs to bridge the gap; either the DBA needs to know how to optimize for virtual environments or the Infrastructure guy needs to know how to optimize for databases.

I’m trying to bridge that gap so I can successfully help our customers meet their business objectives.

Thanks Brent!

—

Jeremy Carr

Jeremy – It sounds like you’re really more after performance tuning knowledge in general. In that case, I’d probably start with the performance tuning books here – the versions are out of date, but the concepts are all still sound: https://www.brentozar.com/archive/2008/08/recommended-books-for-sql-server-dbas/

So, what is a good technical reference for some one who’s new to VMWare (6.5) ? Are the basic concepts between hyper-V and VMWare the same ?

VMWare and SQL Server, of course !!!